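Fixing timeouts and failures when installing Ollama from the official site: installing Ollama on the Chinese operating system deepin/UOS and pulling a model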


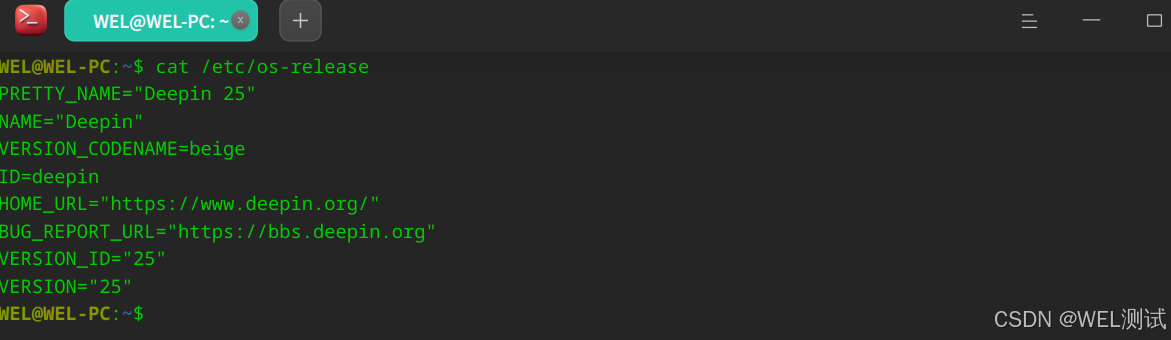

Operating system

WEL@WEL-PC:~$ cat /etc/os-release

PRETTY_NAME="Deepin 25"

NAME="Deepin"

VERSION_CODENAME=beige

ID=deepin

HOME_URL="https://www.deepin.org/"

BUG_REPORT_URL="https://bbs.deepin.org"

VERSION_ID="25"

VERSION="25"

WEL@WEL-PC:~$

Steps

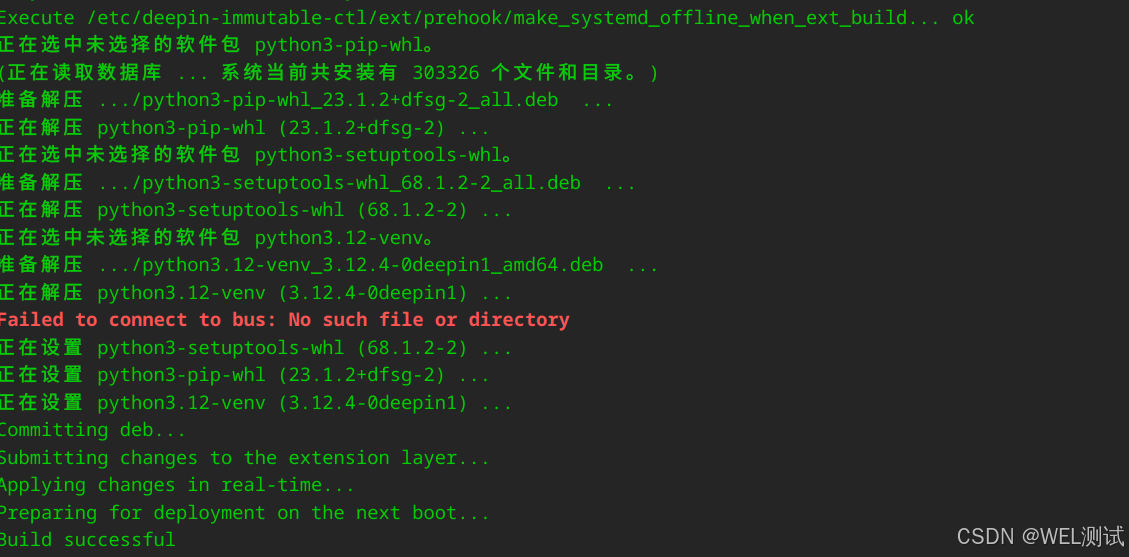

1. Install python3.12-venv

WEL@WEL-PC:~/workspace$ sudo apt install python3.12-venv

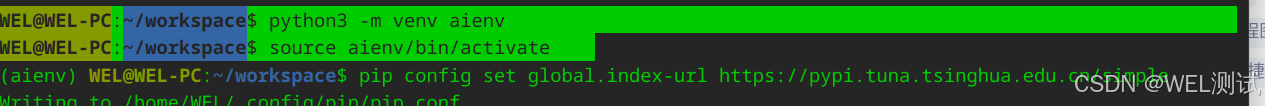

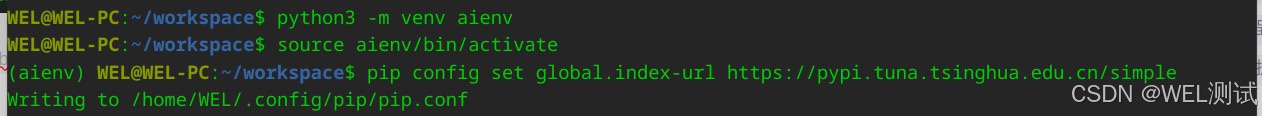

2. Create and activate a virtual environment

WEL@WEL-PC:~/workspace$ python3 -m venv aienv

WEL@WEL-PC:~/workspace$ source aienv/bin/activate

3. Point pip at the Tsinghua mirror

(aienv) WEL@WEL-PC:~/workspace$ pip config set global.index-url https://pypi.tuna.tsinghua.edu.cn/simple

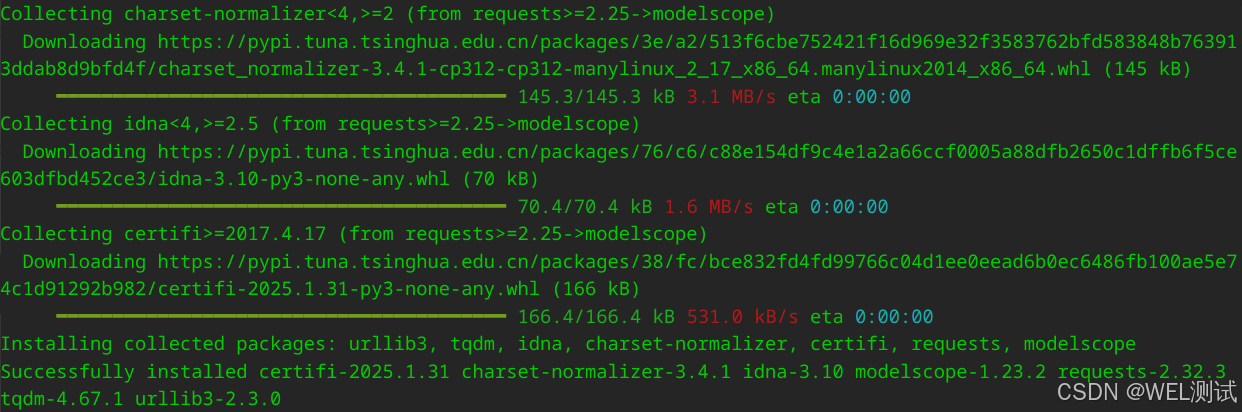

4. Install modelscope

(aienv) WEL@WEL-PC:~/workspace$ pip install modelscope

5. Upgrade setuptools to fix the "ModuleNotFoundError: No module named 'pkg_resources'" error raised by the download command

(aienv) WEL@WEL-PC:~/workspace$ pip install --upgrade setuptools

Error details:

(aienv) WEL@WEL-PC:~/workspace$ modelscope download --model=modelscope/ollama-linux --local_dir ./ollama-linux --revision v0.5.13

Traceback (most recent call last):

File "/home/WEL/workspace/aienv/bin/modelscope", line 5, in <module>

from modelscope.cli.cli import run_cmd

File "/home/WEL/workspace/aienv/lib/python3.12/site-packages/modelscope/cli/cli.py", line 12, in <module>

from modelscope.cli.plugins import PluginsCMD

File "/home/WEL/workspace/aienv/lib/python3.12/site-packages/modelscope/cli/plugins.py", line 6, in <module>

from modelscope.utils.plugins import PluginsManager

File "/home/WEL/workspace/aienv/lib/python3.12/site-packages/modelscope/utils/plugins.py", line 18, in <module>

import pkg_resources

ModuleNotFoundError: No module named 'pkg_resources'

(aienv) WEL@WEL-PC:~/workspace$ pip install pkg_resources

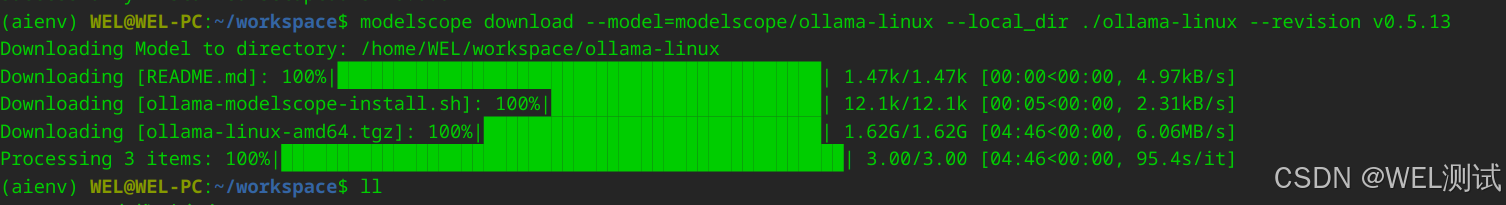
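Before retrying the download, it can help to confirm that pkg_resources is now importable in the active environment. The following is a minimal sketch, not part of the original steps; the helper name has_module is hypothetical, and it assumes python3 resolves to the interpreter of the activated venv.

```shell
# has_module is a hypothetical helper, not part of pip or modelscope.
# It succeeds (exit status 0) when python3 can locate the named top-level module.
has_module() {
    python3 -c "import importlib.util, sys; sys.exit(0 if importlib.util.find_spec(sys.argv[1]) else 1)" "$1"
}

if has_module pkg_resources; then
    echo "pkg_resources is available"
else
    echo "pkg_resources is missing; try: pip install --upgrade setuptools"
fi
```

If the check still reports the module as missing after the upgrade, the shell is probably not using the venv's interpreter.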

6. Download Ollama

(aienv) WEL@WEL-PC:~/workspace$ modelscope download --model=modelscope/ollama-linux --local_dir ./ollama-linux --revision v0.5.13

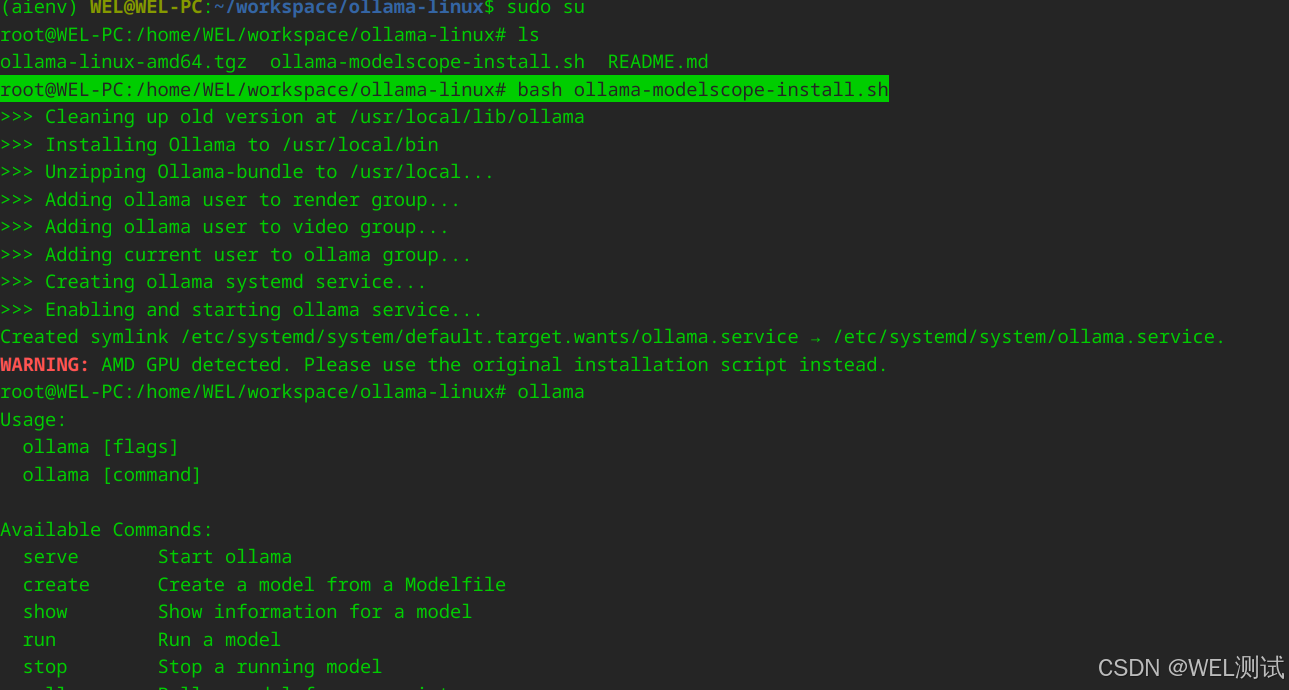

7. Switch to the root user and run the installer

(aienv) WEL@WEL-PC:~/workspace/ollama-linux$ sudo su

root@WEL-PC:/home/WEL/workspace/ollama-linux# bash ollama-modelscope-install.sh

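Tip: if you do not run the installer as root, it reports insufficient permissions and fails; running it through sudo fails as well. The failure looks like this: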

(aienv) WEL@WEL-PC:~/workspace/ollama-linux$ sudo bash ollama-modelscope-install.sh

请输入密码: (Enter password:)

验证成功 (Authentication succeeded)

>>> Cleaning up old version at /usr/local/lib/ollama

>>> Installing Ollama to /usr/local/bin

>>> Unzipping Ollama-bundle to /usr/local...

>>> Creating ollama user...

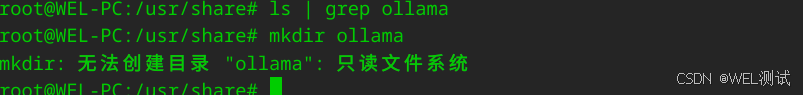

useradd:无法创建目录 /usr/share/ollama (useradd: cannot create directory /usr/share/ollama)

>>> The Ollama API is now available at 127.0.0.1:11434.

>>> Install complete. Run "ollama" from the command line.

Verifying the service and troubleshooting

1. Start the ollama service and enable it at boot

root@WEL-PC:/home/WEL/workspace# systemctl stop ollama

root@WEL-PC:/home/WEL/workspace# systemctl start ollama

root@WEL-PC:/home/WEL/workspace# systemctl status ollama

● ollama.service - Ollama Service

Loaded: loaded (/etc/systemd/system/ollama.service; enabled; preset: enabled)

Active: activating (auto-restart) (Result: exit-code) since Tue 2025-03-11 15:53:26 CST; 1s ago

Process: 88906 ExecStart=/usr/local/bin/ollama serve (code=exited, status=1/FAILURE)

Main PID: 88906 (code=exited, status=1/FAILURE)

CPU: 43ms

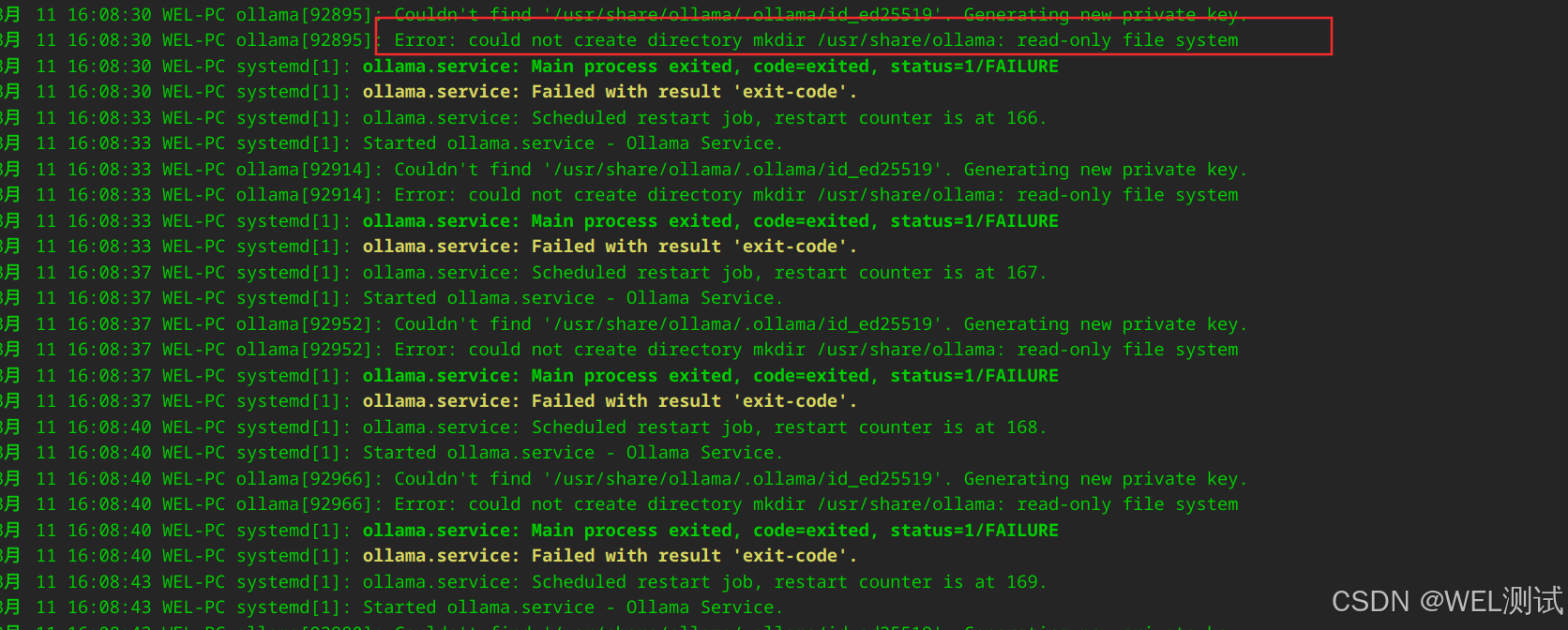

Check the error log:

WEL@WEL-PC:/persistent/home/WEL/workspace/ollama-linux$ sudo journalctl -u ollama

Tip: on deepin, /usr/share/ is a read-only system directory, so the ollama directory cannot be created there.
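The read-only condition can be checked directly before running the installer. This is a rough sketch under the assumption that deepin's protection is visible to the shell's standard writability test; the helper name can_create_in is hypothetical.

```shell
# can_create_in is a hypothetical helper: it succeeds when the path is an
# existing directory that the current user is allowed to write to.
can_create_in() {
    [ -d "$1" ] && [ -w "$1" ]
}

if can_create_in /usr/share; then
    echo "/usr/share is writable"
else
    echo "/usr/share is not writable; useradd cannot create /usr/share/ollama"
fi
```

Run it as the same user that will run the installer, since the result depends on who is asking.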

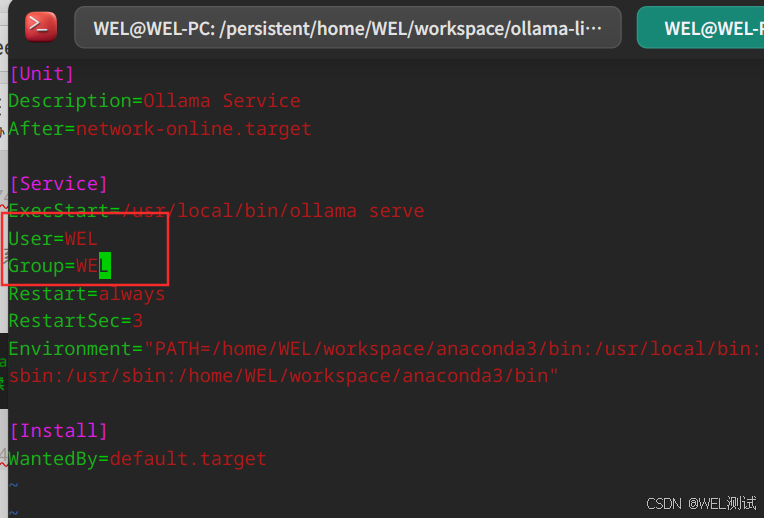

Fix: because creating the ollama user failed, run the service as an existing user and group instead.

sudo vim /etc/systemd/system/ollama.service
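The post does not show the edited file, so the following is only a sketch of what the change might look like. It assumes the unit file generated by the installer contains `User=ollama` and `Group=ollama` lines, and that the fix is to point both at an account that already exists. The helper name set_service_user is hypothetical, and `sed -i` is used as an alternative to editing by hand in vim.

```shell
# set_service_user is a hypothetical helper. It rewrites the User= and
# Group= lines of a systemd unit file in place (GNU sed) and leaves all
# other lines untouched.
set_service_user() {
    unit_file=$1
    user=$2
    group=$3
    sed -i -e "s/^User=.*/User=${user}/" \
           -e "s/^Group=.*/Group=${group}/" "$unit_file"
}

# Example call, left commented out because it needs root and changes a
# system file. It uses the current login name and its primary group:
# set_service_user /etc/systemd/system/ollama.service "$USER" "$(id -gn)"
```

After a change like this, systemd has to reload the unit before the service is restarted.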

Reload the configuration and start the service:

WEL@WEL-PC:~/.ollama$ sudo systemctl daemon-reload

WEL@WEL-PC:~/.ollama$ sudo systemctl start ollama

WEL@WEL-PC:~/.ollama$ sudo systemctl status ollama

● ollama.service - Ollama Service

Loaded: loaded (/etc/systemd/system/ollama.service; enabled; preset: enabled)

Active: active (running) since Tue 2025-03-11 17:28:24 CST; 17s ago

Main PID: 116981 (ollama)

Tasks: 12 (limit: 9168)

Memory: 11.0M (peak: 12.1M)

CPU: 90ms

CGroup: /system.slice/ollama.service

└─116981 /usr/local/bin/ollama serve

3月 11 17:28:24 WEL-PC systemd[1]: Started ollama.service - Ollama Service.

3月 11 17:28:24 WEL-PC ollama[116981]: 2025/03/11 17:28:24 routes.go:1215: INFO server config env="map[CUDA_V>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.639+08:00 level=INFO source=images.go:432 msg>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.640+08:00 level=INFO source=images.go:439 msg>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.640+08:00 level=INFO source=routes.go:1277 ms>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.640+08:00 level=INFO source=gpu.go:217 msg="l>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.677+08:00 level=WARN source=amd_linux.go:61 m>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.678+08:00 level=INFO source=amd_linux.go:402 >

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.678+08:00 level=INFO source=gpu.go:377 msg="n>

3月 11 17:28:24 WEL-PC ollama[116981]: time=2025-03-11T17:28:24.678+08:00 level=INFO source=types.

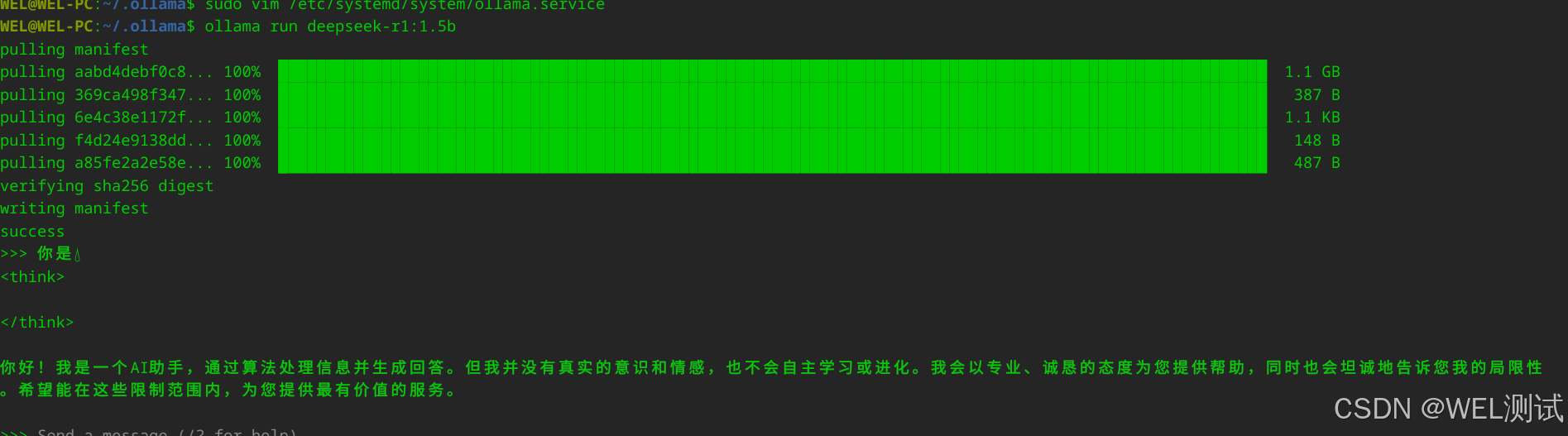

2. Pull the model:

WEL@WEL-PC:~/.ollama$ ollama run deepseek-r1:1.5b
