opencv4+dnn+yolov8识别中国象棋

【代码】opencv4+dnn+yolov8识别中国象棋。

·

opencv4+dnn+yolov8识别中国象棋

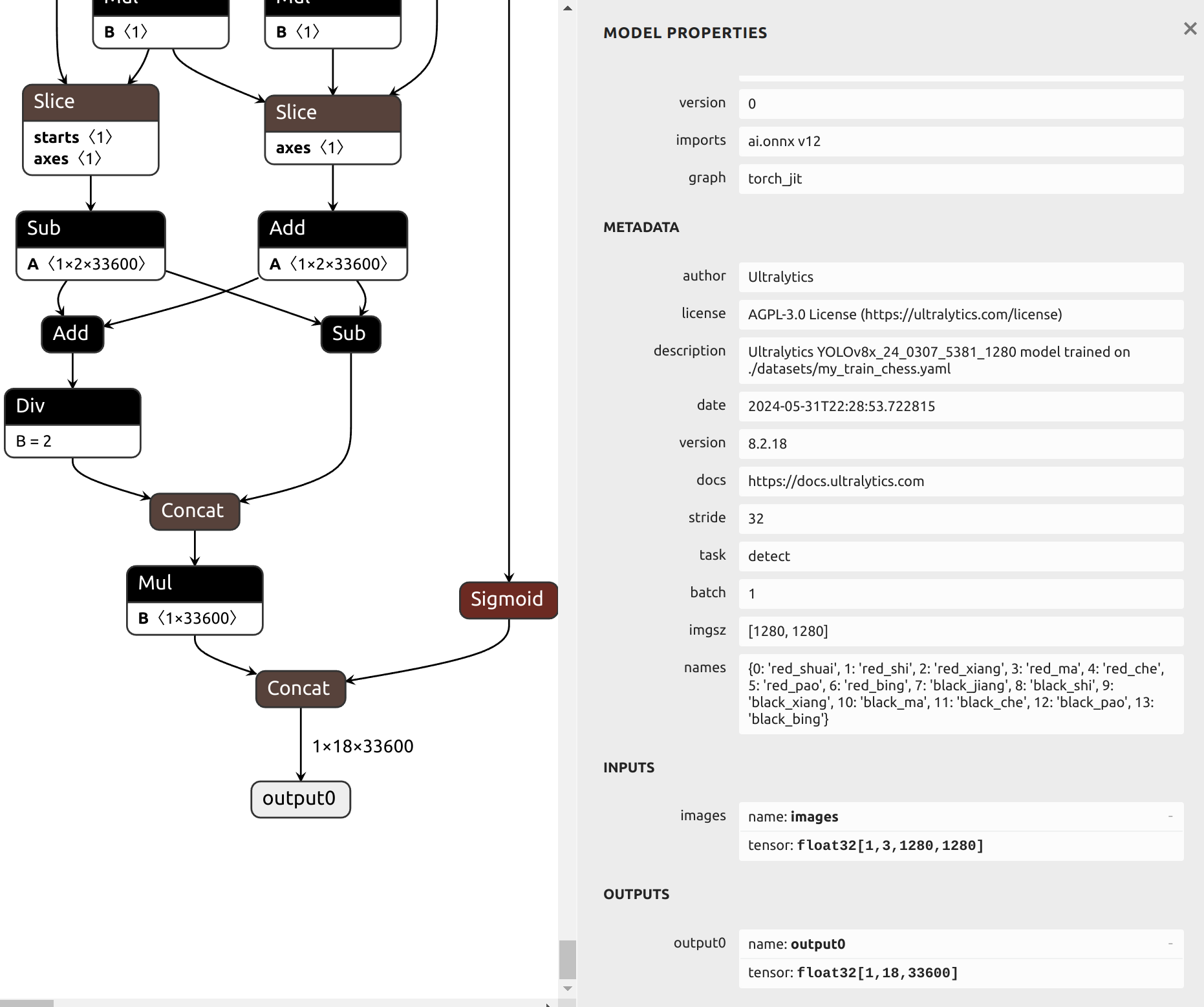

yolov8 pt模型转onnx模型

yolo export model=./weights/yolov8x_24_0307_5381_1280.pt format=onnx dynamic=False opset=12

代码

main.cpp

#include "opencv2/opencv.hpp"

#include <fstream>

#include <sstream>

#include <random>

using namespace cv;

using namespace dnn;

float inpWidth;

float inpHeight;

float confThreshold, scoreThreshold, nmsThreshold;

std::vector<std::string> classes;

std::vector<cv::Scalar> colors;

bool letterBoxForSquare = true;

cv::Mat formatToSquare(const cv::Mat &source);

void postprocess(Mat &frame, cv::Size inputSz, const std::vector<Mat> &out, Net &net);

void drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat &frame);

std::random_device rd;

std::mt19937 gen(rd());

std::uniform_int_distribution<int> dis(100, 255);

int main()

{

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);;

String modelPath = "model.onnx";

String classesFile = "my_classes.txt";

// Open a video file or an image file or a camera stream.

VideoCapture cap;

cap.open(R"(data/IMG_1779.jpg)");

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// 根据选择的检测模型文件进行配置

confThreshold = 0.25;

scoreThreshold = 0.45;

nmsThreshold = 0.5;

float scale = 1 / 255.0; // 0.00392

Scalar mean = {0, 0, 0};

bool swapRB = true;

inpWidth = 1280;

inpHeight = 1280;

String configPath;

String framework = "";

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// int backendId = cv::dnn::DNN_BACKEND_OPENCV;

// int targetId = cv::dnn::DNN_TARGET_CPU; //cpu 350ms, opencl 310

int backendId = cv::dnn::DNN_BACKEND_CUDA;

int targetId = cv::dnn::DNN_TARGET_CUDA; // cuda 15ms

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// Open file with classes names.

if (!classesFile.empty())

{

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

const std::string &file = classesFile;

std::ifstream ifs(file.c_str());

if (!ifs.is_open())

CV_Error(Error::StsError, "File " + file + " not found");

std::string line;

while (std::getline(ifs, line))

{

classes.push_back(line);

colors.push_back(cv::Scalar(dis(gen), dis(gen), dis(gen)));

}

}

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// Load a model.

Net net = readNet(modelPath, configPath, framework);

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

net.setPreferableBackend(backendId);

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

net.setPreferableTarget(targetId);

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

std::vector<String> outNames = net.getUnconnectedOutLayersNames();

// std::vector<String> outNames{"output"};

if (backendId == cv::dnn::DNN_BACKEND_CUDA)

{

int dims[] = {1, 3, inpHeight, inpWidth};

cv::Mat tmp = cv::Mat::zeros(4, dims, CV_32F);

std::vector<cv::Mat> outs;

net.setInput(tmp);

for (int i = 0; i < 10; i++)

net.forward(outs, outNames); // warmup

}

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// Create a window

static const std::string kWinName = "Deep learning object detection in OpenCV";

cv::namedWindow(kWinName, 0);

cv::TickMeter tk;

// Process frames.

Mat frame, blob;

while (waitKey(1) < 0)

{

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// tk.reset();

// tk.start();

cap >> frame;

if (frame.empty())

{

waitKey();

break;

}

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

// Create a 4D blob from a frame.

cv::Mat modelInput = frame;

if (letterBoxForSquare && inpWidth == inpHeight)

modelInput = formatToSquare(modelInput);

blobFromImage(modelInput, blob, scale, cv::Size2f(inpWidth, inpHeight), mean, swapRB, false);

// Run a model.

net.setInput(blob);

std::vector<Mat> outs;

// tk.reset();

// tk.start();

auto tt1 = cv::getTickCount();

net.forward(outs, outNames);

auto tt2 = cv::getTickCount();

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

tk.stop();

postprocess(frame, modelInput.size(), outs, net);

// tk.stop();

// Put efficiency information.

// std::vector<double> layersTimes;

// double freq = getTickFrequency() / 1000;

// double t = net.getPerfProfile(layersTimes) / freq;

// std::string label = format("Inference time: %.2f ms (%.2f ms)", t, /*tk.getTimeMilli()*/ (tt2 - tt1) /

// cv::getTickFrequency() * 1000);

std::string label = format("Inference time: %.2f ms", (tt2 - tt1) / cv::getTickFrequency() * 1000);

cv::putText(frame, label, Point(0, 15), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 255, 0));

// printf("\r%s\t", label.c_str());

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

cv::imshow(kWinName, frame);

namedWindow(kWinName, 0); // 参数为零,则可以自由拖动

imshow(kWinName, frame);

waitKey(10);

imwrite("1.jpg", frame);

}

printf("[%s][%d] 运行到行号\n", __FUNCTION__, __LINE__);

return 0;

}

cv::Mat formatToSquare(const cv::Mat &source)

{

int col = source.cols;

int row = source.rows;

int _max = MAX(col, row);

cv::Mat result = cv::Mat::zeros(_max, _max, CV_8UC3);

source.copyTo(result(cv::Rect(0, 0, col, row)));

return result;

}

void postprocess(Mat &frame, cv::Size inputSz, const std::vector<Mat> &outs, Net &net)

{

// yolov8 has an output of shape (batchSize, 84, 8400) (Num classes + box[x,y,w,h] + confidence[c])

auto tt1 = cv::getTickCount();

float x_factor = inputSz.width / inpWidth;

float y_factor = inputSz.height / inpHeight;

std::vector<int> class_ids;

std::vector<float> confidences;

std::vector<cv::Rect> boxes;

// int rows = outs[0].size[1];

// int dimensions = outs[0].size[2];

// [1, 84, 8400] -> [8400,84]

int rows = outs[0].size[2];

int dimensions = outs[0].size[1];

auto tmp = outs[0].reshape(1, dimensions);

cv::transpose(tmp, tmp);

float *data = (float *)tmp.data;

for (int i = 0; i < rows; ++i)

{

// float confidence = data[4];

// if(confidence >= confThreshold) {

float *classes_scores = data + 4;

cv::Mat scores(1, classes.size(), CV_32FC1, classes_scores);

cv::Point class_id;

double max_class_score;

minMaxLoc(scores, 0, &max_class_score, 0, &class_id);

if (max_class_score > scoreThreshold)

{

confidences.push_back(max_class_score);

class_ids.push_back(class_id.x);

float x = data[0];

float y = data[1];

float w = data[2];

float h = data[3];

int left = int((x - 0.5 * w) * x_factor);

int top = int((y - 0.5 * h) * y_factor);

int width = int(w * x_factor);

int height = int(h * y_factor);

boxes.push_back(cv::Rect(left, top, width, height));

}

//}

data += dimensions;

}

std::vector<int> indices;

NMSBoxes(boxes, confidences, scoreThreshold, nmsThreshold, indices);

auto tt2 = cv::getTickCount();

std::string label = format("NMS time: %.2f ms", (tt2 - tt1) / cv::getTickFrequency() * 1000);

cv::putText(frame, label, Point(0, 30), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 255, 0));

for (size_t i = 0; i < indices.size(); ++i)

{

int idx = indices[i];

Rect box = boxes[idx];

drawPred(class_ids[idx], confidences[idx], box.x, box.y, box.x + box.width, box.y + box.height, frame);

}

}

void drawPred(int classId, float conf, int left, int top, int right, int bottom, Mat &frame)

{

rectangle(frame, Point(left, top), Point(right, bottom), Scalar(0, 255, 0));

std::string label = format("%.2f", conf);

Scalar color = Scalar::all(255);

if (!classes.empty())

{

CV_Assert(classId < (int)classes.size());

label = classes[classId] + ": " + label;

color = colors[classId];

}

int baseLine;

Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

top = max(top, labelSize.height);

rectangle(frame, Point(left, top - labelSize.height), Point(left + labelSize.width, top + baseLine), color, FILLED);

cv::putText(frame, label, Point(left, top), FONT_HERSHEY_SIMPLEX, 0.5, Scalar());

}

my_classes.txt

red_shuai

red_shi

red_xiang

red_ma

red_che

red_pao

red_bing

black_jiang

black_shi

black_xiang

black_ma

black_che

black_pao

black_bing

编译

g++ -o main main.cpp `pkg-config opencv4 --cflags --libs`

运行

./main

魔乐社区(Modelers.cn) 是一个中立、公益的人工智能社区,提供人工智能工具、模型、数据的托管、展示与应用协同服务,为人工智能开发及爱好者搭建开放的学习交流平台。社区通过理事会方式运作,由全产业链共同建设、共同运营、共同享有,推动国产AI生态繁荣发展。

更多推荐

已为社区贡献12条内容

已为社区贡献12条内容

所有评论(0)