MNIST Digit Recognition with a Plain Neural Network in PyTorch


Method 1

1. Load the data

import torch
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.nn.functional as F
import torch.optim as optim
import random
import matplotlib.pyplot as plt

batchSize=64

transform=transforms.Compose([
    transforms.ToTensor(),
    # MNIST mean = 0.1307, standard deviation = 0.3081
    transforms.Normalize((0.1307,),(0.3081,))
])

train_dataset=datasets.MNIST(root="data/mnist",
                             train=True,
                             download=True,
                             transform=transform)
train_loader=DataLoader(train_dataset,
                        shuffle=True,
                        batch_size=batchSize)

test_dataset=datasets.MNIST(root="data/mnist",
                            train=False,
                            download=True,
                            transform=transform)
# Shuffling is unnecessary for evaluation; accuracy does not depend on sample order
test_loader=DataLoader(test_dataset,
                       shuffle=False,
                       batch_size=batchSize)

2. Display a random digit

def showRandomPictures(train_loader):
    data_iter=iter(train_loader)
    images,labels=next(data_iter)
    # Choose a random index from the batch (a batch may hold fewer than batchSize items)
    random_index = random.randint(0, len(images) - 1)
    # Drop the channel dimension and convert to a NumPy array for plotting
    transformed_image = images[random_index].squeeze().numpy()
    transformed_image = (transformed_image * 0.3081) + 0.1307 # Inverse normalization
    plt.imshow(transformed_image, cmap='gray') # MNIST images are grayscale
    plt.title(f"Label: {labels[random_index].item()}")
    plt.show()

showRandomPictures(train_loader)
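The display function undoes `transforms.Normalize` before plotting. As a rough illustration in plain Python, normalization computes `(x - mean) / std` and the inverse is `x * std + mean`. The helper names `normalize` and `denormalize` are made up for this sketch and are not part of torchvision.

```python
MEAN, STD = 0.1307, 0.3081  # the MNIST statistics used in the transform above


def normalize(pixel):
    """Same arithmetic as transforms.Normalize for a single channel value."""
    return (pixel - MEAN) / STD


def denormalize(value):
    """Inverse mapping, as applied before plt.imshow in showRandomPictures."""
    return value * STD + MEAN


for pixel in (0.0, 0.5, 1.0):  # ToTensor() scales pixel values to [0, 1]
    z = normalize(pixel)
    print(f"pixel={pixel:.1f} -> normalized={z:+.4f} -> restored={denormalize(z):.4f}")
```

Black background pixels (0.0) end up slightly negative after normalization, which is why the image is mapped back before display.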

3. Define the model and hyperparameters

class Net(torch.nn.Module):
    def __init__(self) -> None:
        super(Net,self).__init__()
        self.layer1=torch.nn.Linear(784,512)
        self.layer2=torch.nn.Linear(512,256)
        self.layer3=torch.nn.Linear(256,128)
        self.layer4=torch.nn.Linear(128,64)
        self.layer5=torch.nn.Linear(64,10)

    def forward(self,x):
        # Flatten each 1x28x28 image into a 784-dimensional vector
        x=x.view(-1,784)
        x=F.relu(self.layer1(x))
        x=F.relu(self.layer2(x))
        x=F.relu(self.layer3(x))
        x=F.relu(self.layer4(x))
        # No activation on the last layer: CrossEntropyLoss expects raw logits
        x=self.layer5(x)
        return x

model=Net()
criterion=torch.nn.CrossEntropyLoss()
optimizer=optim.SGD(model.parameters(),lr=0.01,momentum=0.5)

4. Training step

def train(epoch):
    running_loss=0.0
    for batch_idx,data in enumerate(train_loader,0):
        inputs,target=data
        # Clear gradients left over from the previous batch
        optimizer.zero_grad()
        outputs=model(inputs)
        loss=criterion(outputs,target)
        loss.backward()
        optimizer.step()
        running_loss+=loss.item()
        # Report the mean loss over every 300 batches
        if batch_idx%300==299:
            print(f"[epoch={epoch+1}, batch_index={batch_idx+1}, training_loss={running_loss/300}]")
            running_loss=0.0
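The logging interval explains why each epoch prints exactly three loss lines. A small sketch of the arithmetic, assuming the 60000-image MNIST training set and the `DataLoader` default of keeping the final, smaller batch:

```python
import math

dataset_size = 60000  # MNIST training images
batch_size = 64       # batchSize in the loader above
log_every = 300       # train() prints once every 300 batches

batches_per_epoch = math.ceil(dataset_size / batch_size)
last_batch_size = dataset_size - (batches_per_epoch - 1) * batch_size
# Same condition as in train(): batch_idx % 300 == 299, reported as batch_idx + 1
log_points = [i + 1 for i in range(batches_per_epoch) if i % log_every == log_every - 1]

print(batches_per_epoch)  # 938
print(last_batch_size)    # 32
print(log_points)         # [300, 600, 900]
```

The 38 batches after batch 900 still update the model, but their loss is never printed because `running_loss` is reset at the start of the next epoch.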

5. Test step

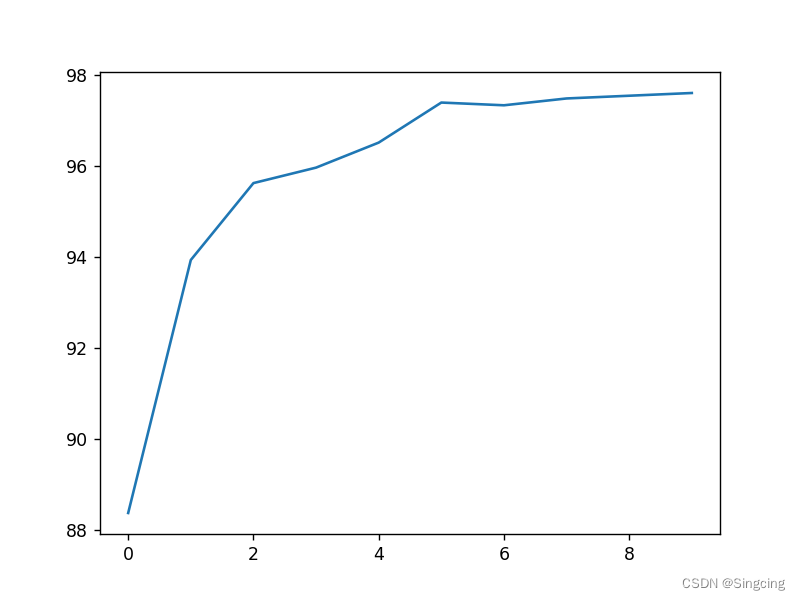

accuracyHistory=[]

def test():
    correct=0
    total=0
    with torch.no_grad():
        for data in test_loader:
            images,labels=data
            outputs=model(images)
            # torch.max along dim=1 returns (max value, index of max) for each row
            _,predicted=torch.max(outputs.data,dim=1)
            # labels.size(0) is the number of samples in this batch
            total+=labels.size(0)
            correct+=(predicted==labels).sum().item()
    print(f"Accuracy on test set:{100*correct/total}")
    accuracyHistory.append(100*correct/total)

6. Run the main routine

if __name__=="__main__":
    for epoch in range(10):
        train(epoch)
        test()
    # Plot the test accuracy recorded after each epoch
    plt.plot(accuracyHistory)
    plt.show()

7. Results

[epoch=1, batch_index=300, training_loss=2.223298035860062]

[epoch=1, batch_index=600, training_loss=0.9641364443302155]

[epoch=1, batch_index=900, training_loss=0.43318099692463874]

Accuracy on test set:88.38

....

Accuracy on test set:97.34

[epoch=8, batch_index=300, training_loss=0.05266832955181599]

[epoch=8, batch_index=600, training_loss=0.05094206769950688]

[epoch=8, batch_index=900, training_loss=0.053400517796787124]

Accuracy on test set:97.49

[epoch=9, batch_index=300, training_loss=0.036180642331019044]

[epoch=9, batch_index=600, training_loss=0.04376721960259602]

[epoch=9, batch_index=900, training_loss=0.04324531762007003]

Accuracy on test set:97.55

[epoch=10, batch_index=300, training_loss=0.02999880815623328]

[epoch=10, batch_index=600, training_loss=0.0335875346181759]

[epoch=10, batch_index=900, training_loss=0.03751158087824782]

Accuracy on test set:97.61

Method 2

1. Download mnist.pkl.gz

URL: http://www.iro.umontreal.ca/~lisa/deep/data/mnist/mnist.pkl.gz

Save the dataset at data2/mnist/mnist.pkl.gz

2. Load the data

from pathlib import Path
import pickle
import gzip
import matplotlib.pyplot as plt

DATA_PATH=Path("./data2")
PATH=DATA_PATH / "mnist"
FILENAME="mnist.pkl.gz"

with gzip.open((PATH/FILENAME).as_posix(),"rb") as f:
    ((x_train,y_train),(x_valid,y_valid),_)=pickle.load(f,encoding="latin-1")
# x_train (50000, 784), y_train (50000,), x_valid (10000, 784), y_valid (10000,)

Display a digit

# Each row holds 28*28 = 784 pixels; reshape one row to 28x28 to display it
import numpy as np

plt.imshow(x_train[50].reshape((28,28)),cmap="gray")
plt.show()

Convert the data to tensors

# The data must be converted to tensors before it can be used for training
import torch

x_train,y_train,x_valid,y_valid=map(
    torch.tensor, (x_train,y_train,x_valid,y_valid)
)

3. Use cross-entropy as the loss function

# F.cross_entropy (from torch.nn.functional) takes raw logits and integer class labels
import torch.nn.functional as F

loss_func=F.cross_entropy
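For a single sample, cross-entropy on raw logits is the negative log of the softmax probability assigned to the true class. The following plain-Python sketch reproduces that arithmetic for illustration only; the function name `cross_entropy` here is a local helper, not the PyTorch implementation, and the logits are made-up numbers.

```python
import math


def cross_entropy(logits, target):
    """Negative log-softmax of the target class for one sample."""
    m = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum_exp - logits[target]


logits = [2.0, 1.0, 0.1]  # made-up scores for three classes
print(cross_entropy(logits, target=0))  # small loss: class 0 has the largest score
print(cross_entropy(logits, target=2))  # larger loss: class 2 has the smallest score

uniform = [0.0] * 10  # a model with no preference among 10 digits
print(cross_entropy(uniform, target=3))  # equals log(10), about 2.3026
```

The last value gives a useful reference point: a 10-class model that has learned nothing should start near log(10), which is close to the first training loss of about 2.22 logged in Method 1.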

4. Create the model class

from torch import nn

class Mnist_NN(nn.Module):
    def __init__(self):
        super().__init__()
        self.hidden1=nn.Linear(784,128)
        self.hidden2=nn.Linear(128,256)
        self.out=nn.Linear(256,10)
        self.dropout=nn.Dropout(0.5)

    def forward(self,x):
        x=F.relu(self.hidden1(x))
        # Fully connected layer followed by dropout, to reduce overfitting
        x=self.dropout(x)
        x=F.relu(self.hidden2(x))
        x=self.dropout(x)
        # Return raw logits; F.cross_entropy applies log-softmax internally
        x=self.out(x)
        return x


Print the network to see its structure

net=Mnist_NN()
print(net)

# Print each parameter's name, its values (w or b), and its size
for name,parameter in net.named_parameters():
    print(name,parameter,parameter.size())

Mnist_NN(

(hidden1): Linear(in_features=784, out_features=128, bias=True)

(hidden2): Linear(in_features=128, out_features=256, bias=True)

(out): Linear(in_features=256, out_features=10, bias=True)

(dropout): Dropout(p=0.5, inplace=False)

)

hidden1.weight Parameter containing:

tensor([[-1.7000e-02, -7.5721e-03, -1.7358e-03, ..., 7.6538e-03,

..........

out.weight Parameter containing:

tensor([[-0.0173, 0.0522, 0.0494, ..., -0.0579, -0.0439, -0.0522],

....

requires_grad=True) torch.Size([10, 256])

out.bias Parameter containing:

tensor([-0.0154, -0.0028, -0.0574, -0.0608, -0.0276, 0.0483, 0.0503, 0.0112,

-0.0352, -0.0498], requires_grad=True) torch.Size([10])
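The sizes in this printout determine how many trainable parameters the network has. A quick sketch of the count in plain Python, using only the layer shapes from `Mnist_NN` (dropout adds no parameters):

```python
# (in_features, out_features) of each nn.Linear in Mnist_NN
layers = {"hidden1": (784, 128), "hidden2": (128, 256), "out": (256, 10)}

total = 0
for name, (n_in, n_out) in layers.items():
    weights = n_in * n_out  # the weight matrix has shape [n_out, n_in]
    biases = n_out          # one bias per output unit
    total += weights + biases
    print(f"{name}: weight {n_out}x{n_in} = {weights}, bias = {biases}")

print("total trainable parameters:", total)  # 136074
```

With PyTorch available, the same number should come out of `sum(p.numel() for p in net.parameters())`.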

5. Wrap the data into batches with TensorDataset and DataLoader

from torch.utils.data import TensorDataset
from torch.utils.data import DataLoader

bs=64

train_ds=TensorDataset(x_train,y_train)
# train_dl=DataLoader(train_ds,batch_size=bs,shuffle=True)
valid_ds=TensorDataset(x_valid,y_valid)
# valid_dl=DataLoader(valid_ds,batch_size=bs*2)

def get_data(train_ds,valid_ds,bs):
    return (
        DataLoader(train_ds,batch_size=bs,shuffle=True),
        # Validation stores no gradients, so a larger batch fits in memory
        DataLoader(valid_ds,batch_size=bs*2)
    )

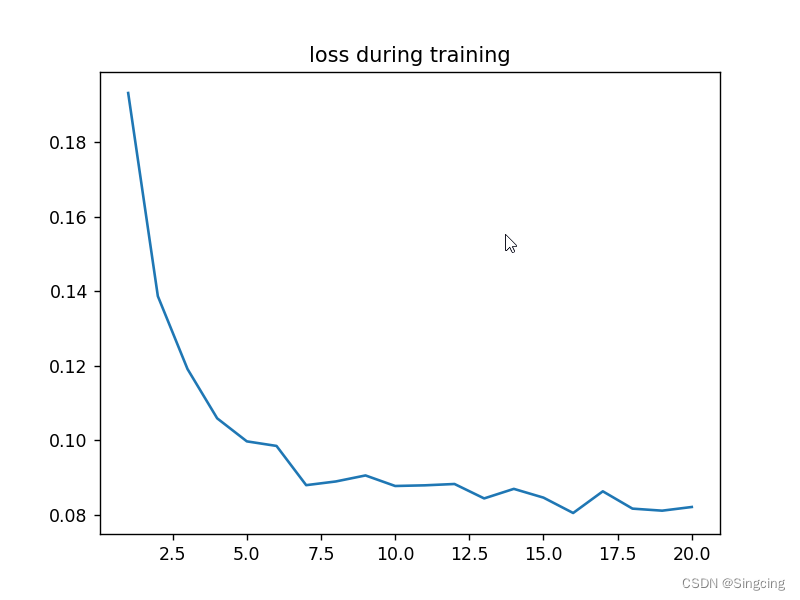

6. Define the training step

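import numpy as np

val_losses=[]

# steps: number of iterations; each step corresponds to one epoch
def fit(steps,model,loss_func,opt,train_dl,valid_dl):
    for step in range(steps):
        model.train() # training mode: dropout is active; w and b are updated in loss_batch
        # xb (64, 784), yb (64,); both are tensors
        for xb,yb in train_dl:
            loss_batch(model,loss_func,xb,yb,opt)
        # evaluation mode: dropout is disabled and BatchNorm uses its running statistics
        model.eval() # w and b are not updated because no optimizer is passed below
        with torch.no_grad():
            # each loss_batch call returns (loss, batch size); zip splits them into two tuples
            losses,nums =zip(
                *[loss_batch(model,loss_func,xb,yb) for xb,yb in valid_dl]
            )
        # average loss over the whole validation set, weighted by batch size
        val_loss=np.sum(np.multiply(losses,nums)) / np.sum(nums)
        val_losses.append(val_loss)
        # prints "current step: <step>" and "validation loss: <val_loss>"
        print("当前step:"+str(step),"验证集损失"+str(val_loss))

from torch import optim

def get_model():
    model=Mnist_NN()
    # Return the model and the optimizer; alternative: optim.SGD(model.parameters() , lr=0.001)
    return model,optim.Adam(model.parameters() , lr=0.001)

def loss_batch(model, loss_func ,xb,yb, opt=None):
    # compute the loss from the predictions and the true labels
    loss=loss_func( model(xb) , yb )
    if opt is not None:
        loss.backward() # backpropagate to compute the gradients
        opt.step() # update the parameters
        opt.zero_grad() # reset the gradients so they do not affect the next update
    return loss.item(), len(xb)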
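The validation loss in `fit` is weighted by batch size because the last batch is usually smaller: with 10000 validation samples and a batch size of 128 there are 78 full batches and one batch of 16. A plain-Python sketch of the same weighted mean, using made-up loss values and a hypothetical helper `weighted_mean`:

```python
def weighted_mean(losses, nums):
    """Average per-batch mean losses, weighting each by its batch size."""
    return sum(loss * n for loss, n in zip(losses, nums)) / sum(nums)


# Hypothetical numbers: two full batches of 128 and a final batch of 16
losses = [0.10, 0.20, 0.90]
nums = [128, 128, 16]

weighted = weighted_mean(losses, nums)
naive = sum(losses) / len(losses)

print(weighted)  # the small batch contributes in proportion to its 16 samples
print(naive)     # the unweighted mean lets the small batch count as much as a full one
```

This is the same quantity that `np.sum(np.multiply(losses,nums)) / np.sum(nums)` computes, which equals the mean loss per sample over the whole validation set.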

7. Train the model

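train_dl,valid_dl=get_data(train_ds,valid_ds,bs)
model,opt=get_model()
fit(20,model ,loss_func,opt,train_dl,valid_dl)

correct=0
total=0
model.eval() # make sure dropout is disabled during evaluation
with torch.no_grad():
    # xb (128, 784), yb (128,)
    for xb,yb in valid_dl:
        # output (128, 10): 10 class scores (logits) for each of the 128 samples
        output=model(xb)
        # print(output.shape)
        # print(output)
        # predicted is the index of the largest score in each row
        _,predicted=torch.max(output.data,1) # returns (max value, index)
        # print(predicted)
        # yb.size(0) is the batch size (128, smaller for the last batch); item() converts a tensor to a Python number
        total+=yb.size(0)
        correct+=(predicted==yb).sum().item()

# The 10000 images evaluated here are the validation split loaded in step 2
print("Accuracy of network on the 10000 test image :%d %%" %(
    100*correct / total
))

plt.figure()
plt.title("loss during training")
plt.plot(np.arange(1,21,1),val_losses)
plt.show()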

当前step:0 验证集损失0.19325110550522803

当前step:1 验证集损失0.13869898459613322

当前step:2 验证集损失0.11913147141262889

当前step:3 验证集损失0.10589157585203647

当前step:4 验证集损失0.09970801477096974

当前step:5 验证集损失0.09848284918610006

当前step:6 验证集损失0.08794679024070501

当前step:7 验证集损失0.08894123120522127

当前step:8 验证集损失0.0905570782547351

当前step:9 验证集损失0.0877237871955149

当前step:10 验证集损失0.08790379901565612

当前step:11 验证集损失0.08826288345884532

当前step:12 验证集损失0.08438722904250026

当前step:13 验证集损失0.08695273711904883

当前step:14 验证集损失0.08459821079988032

当前step:15 验证集损失0.08047270769253373

当前step:16 验证集损失0.0862937849830836

当前step:17 验证集损失0.08164657156261383

当前step:18 验证集损失0.08109720230847597

当前step:19 验证集损失0.08208743708985858

Accuracy of network on the 10000 test image :97 %
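The accuracy loop picks the index of the largest score in each row and compares it with the label. The sketch below repeats that logic in plain Python on made-up scores, and also shows why the printed figure has no decimals: `%d` drops the fractional part of the percentage. The helper `accuracy_percent` is hypothetical and only mirrors the loop above.

```python
def accuracy_percent(logit_rows, labels):
    """Percentage of rows whose largest score sits at the labelled index."""
    correct = 0
    for row, label in zip(logit_rows, labels):
        predicted = max(range(len(row)), key=row.__getitem__)  # argmax of the row
        correct += int(predicted == label)
    return 100 * correct / len(labels)


# Made-up scores for three samples and three classes
rows = [[0.1, 2.0, -1.0], [1.5, 0.2, 0.3], [0.0, 0.1, 0.9]]
labels = [1, 0, 1]

acc = accuracy_percent(rows, labels)
print("%d %%" % acc)    # 66 %
print("%.2f %%" % acc)  # 66.67 %
```

Switching the format to `%.2f` would report the validation accuracy with two decimals instead of truncating it to 97.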
