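Forest fire segmentation: detailed steps and code for building and using a remote-sensing dataset for burned-area estimation and severity assessment. We will use Python and a deep learning framework (PyTorch) for this task.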

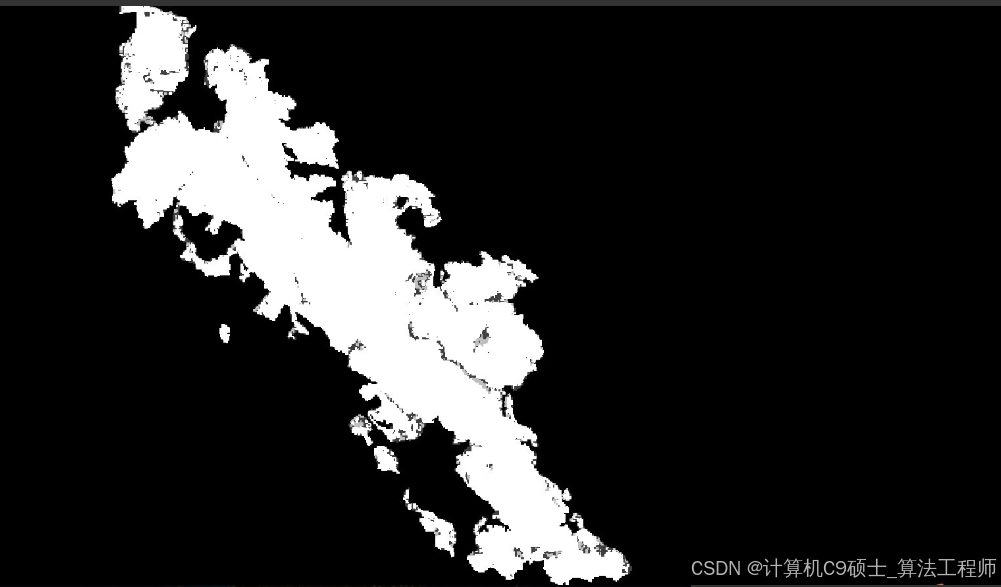

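The dataset supports burned-area estimation and severity assessment from remote-sensing imagery. It provides pre-fire and post-fire Sentinel-1 and Sentinel-2 images together with burned-area masks, about 14 GB of data in total.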

Project structure

```
ForestFireDetection/
├── data/
│   ├── sentinel1/
│   │   ├── pre_fire/
│   │   └── post_fire/
│   ├── sentinel2/
│   │   ├── pre_fire/
│   │   └── post_fire/
│   ├── masks/
│   └── metadata.csv
├── models/
│   └── unet.py
├── utils/
│   ├── data_loader.py
│   ├── metrics.py
│   └── plot.py
├── main.py
├── train.py
├── infer.py
├── evaluate.py
└── README.md
```

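1. Dataset preparation

Dataset format

sentinel1/: pre-fire and post-fire Sentinel-1 images.

sentinel2/: pre-fire and post-fire Sentinel-2 images.

masks/: burned-area masks.

metadata.csv: image metadata, such as image paths and burned-area size.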
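The data loader in section 3 reads the image path from the first column of metadata.csv and the mask path from the second, both relative to the data root. The following is a minimal sketch of generating such a file, not the dataset's official tooling. It assumes that each mask shares its file stem with the image it belongs to and that the post-fire Sentinel-2 images are the model input; the function name `build_metadata` and the column names `image_path` and `mask_path` are hypothetical.

```python
import csv
from pathlib import Path


def build_metadata(root_dir, image_subdir="sentinel2/post_fire",
                   mask_subdir="masks", out_name="metadata.csv"):
    """Write one CSV row per image that has a same-named mask; return the row count.

    Assumption: image and mask are paired by identical file stem.
    """
    root = Path(root_dir)
    # Index masks by file stem so that each image can look up its partner.
    masks = {p.stem: p for p in sorted((root / mask_subdir).iterdir()) if p.is_file()}

    rows = []
    for img in sorted((root / image_subdir).iterdir()):
        mask = masks.get(img.stem)
        if img.is_file() and mask is not None:
            # Store paths relative to the data root, with forward slashes.
            rows.append((img.relative_to(root).as_posix(),
                         mask.relative_to(root).as_posix()))

    with open(root / out_name, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["image_path", "mask_path"])  # hypothetical column names
        writer.writerows(rows)
    return len(rows)
```

Calling `build_metadata("data")` would write data/metadata.csv. Images without a matching mask are skipped, so the returned count is worth comparing against the number of files on disk.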

Dataset directory layout

```
data/
├── sentinel1/
│   ├── pre_fire/
│   └── post_fire/
├── sentinel2/
│   ├── pre_fire/
│   └── post_fire/
├── masks/
└── metadata.csv
```

2. Install dependencies

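Make sure the required dependencies are installed (tensorboard is needed for the SummaryWriter used in train.py):

```bash
pip install torch torchvision pandas numpy matplotlib scikit-image tensorboard
```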

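3. Data loading

Write a data-loading script, utils/data_loader.py:

```python
import os

import numpy as np
import pandas as pd
import torch
from skimage import io
from torch.utils.data import Dataset, DataLoader


class ForestFireDataset(Dataset):
    """Pairs each image listed in metadata.csv with its burned-area mask."""

    def __init__(self, csv_file, root_dir, transform=None):
        self.metadata = pd.read_csv(csv_file)
        self.root_dir = root_dir
        self.transform = transform

    def __len__(self):
        return len(self.metadata)

    def __getitem__(self, idx):
        if torch.is_tensor(idx):
            idx = idx.tolist()

        # Column 0 holds the image path, column 1 the mask path (relative to root_dir)
        img_name = os.path.join(self.root_dir, self.metadata.iloc[idx, 0])
        mask_name = os.path.join(self.root_dir, self.metadata.iloc[idx, 1])

        image = io.imread(img_name)                # H x W x C
        mask = io.imread(mask_name, as_gray=True)  # H x W

        # Convert to float tensors: image becomes C x H x W in [0, 1]
        # (assumes 8-bit imagery, as infer.py does); mask becomes 1 x H x W,
        # where any non-zero pixel counts as burned
        image = torch.from_numpy(image.astype(np.float32) / 255.0).permute(2, 0, 1)
        mask = torch.from_numpy((mask > 0).astype(np.float32)).unsqueeze(0)

        if self.transform:
            # The transform receives tensors and is applied to image and mask
            # separately, so use deterministic transforms only
            image = self.transform(image)
            mask = self.transform(mask)

        return image, mask


def get_data_loader(csv_file, root_dir, batch_size, transform=None, shuffle=True):
    dataset = ForestFireDataset(csv_file, root_dir, transform=transform)
    data_loader = DataLoader(dataset, batch_size=batch_size, shuffle=shuffle, num_workers=4)
    return data_loader
```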
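The scripts in this article train and evaluate on the same metadata.csv, so the reported metrics would describe training data only. One common remedy is to hold out a validation subset. The sketch below uses `torch.utils.data.random_split` with a seeded generator so that the split is reproducible; the helper name `split_dataset`, the 20% validation fraction, and the random stand-in tensors are illustrative assumptions, not part of the original project.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset, random_split


def split_dataset(dataset, val_fraction=0.2, seed=42):
    """Split a dataset into reproducible training and validation subsets."""
    n_val = int(len(dataset) * val_fraction)
    n_train = len(dataset) - n_val
    # A seeded generator makes the split identical from run to run.
    generator = torch.Generator().manual_seed(seed)
    return random_split(dataset, [n_train, n_val], generator=generator)


# Stand-in data shaped like the samples a segmentation dataset would yield:
# 10 images of 4 x 64 x 64 and 10 binary masks of 1 x 64 x 64.
dummy = TensorDataset(
    torch.rand(10, 4, 64, 64),
    torch.randint(0, 2, (10, 1, 64, 64)).float(),
)
train_set, val_set = split_dataset(dummy)

# Shuffle only the training subset; keep validation order fixed.
train_loader = DataLoader(train_set, batch_size=4, shuffle=True)
val_loader = DataLoader(val_set, batch_size=4, shuffle=False)
print(len(train_set), len(val_set))
```

In the real project, `dummy` would be replaced by a `ForestFireDataset` instance, with the training loader passed to the training loop and the validation loader to the evaluation loop.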

4. Model definition

Write the UNet model definition script, models/unet.py:

```python
import torch
import torch.nn as nn


class UNet(nn.Module):
    """U-Net with four pooling stages; input height and width should be divisible by 16."""

    def __init__(self, in_channels, out_channels):
        super().__init__()
        self.in_channels = in_channels
        self.out_channels = out_channels

        # Encoder
        self.enc1 = self.conv_block(in_channels, 64)
        self.enc2 = self.conv_block(64, 128)
        self.enc3 = self.conv_block(128, 256)
        self.enc4 = self.conv_block(256, 512)
        self.pool = nn.MaxPool2d(2, 2)

        self.bottleneck = self.conv_block(512, 1024)

        # Decoder: each conv block takes the upsampled features
        # concatenated with the matching encoder features
        self.upconv4 = nn.ConvTranspose2d(1024, 512, kernel_size=2, stride=2)
        self.dec4 = self.conv_block(1024, 512)
        self.upconv3 = nn.ConvTranspose2d(512, 256, kernel_size=2, stride=2)
        self.dec3 = self.conv_block(512, 256)
        self.upconv2 = nn.ConvTranspose2d(256, 128, kernel_size=2, stride=2)
        self.dec2 = self.conv_block(256, 128)
        self.upconv1 = nn.ConvTranspose2d(128, 64, kernel_size=2, stride=2)
        self.dec1 = self.conv_block(128, 64)

        # 1x1 convolution producing per-pixel logits
        self.out_conv = nn.Conv2d(64, out_channels, kernel_size=1)

    def conv_block(self, in_channels, out_channels):
        return nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
            nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1),
            nn.BatchNorm2d(out_channels),
            nn.ReLU(inplace=True),
        )

    def forward(self, x):
        enc1 = self.enc1(x)
        enc2 = self.enc2(self.pool(enc1))
        enc3 = self.enc3(self.pool(enc2))
        enc4 = self.enc4(self.pool(enc3))

        bottleneck = self.bottleneck(self.pool(enc4))

        dec4 = self.upconv4(bottleneck)
        dec4 = torch.cat((dec4, enc4), dim=1)
        dec4 = self.dec4(dec4)

        dec3 = self.upconv3(dec4)
        dec3 = torch.cat((dec3, enc3), dim=1)
        dec3 = self.dec3(dec3)

        dec2 = self.upconv2(dec3)
        dec2 = torch.cat((dec2, enc2), dim=1)
        dec2 = self.dec2(dec2)

        dec1 = self.upconv1(dec2)
        dec1 = torch.cat((dec1, enc1), dim=1)
        dec1 = self.dec1(dec1)

        return self.out_conv(dec1)
```
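Because the network halves the resolution four times and then concatenates upsampled features with the encoder features, `torch.cat` fails when the input height or width is not divisible by 16 (for example, a height of 100 shrinks to 25 and then 12, which upsamples back to 24, not 25). The sketch below shows one possible workaround: zero-pad the input to the next multiple of 16 and crop the prediction back afterwards. The helper names `pad_to_multiple` and `crop_to_size` are hypothetical, and zero padding is only one of several reasonable choices.

```python
import torch
import torch.nn.functional as F


def pad_to_multiple(x, multiple=16):
    """Zero-pad the bottom and right of an N x C x H x W tensor.

    Returns the padded tensor and the original (H, W) for cropping later.
    """
    h, w = x.shape[-2:]
    pad_h = (-h) % multiple  # rows needed to reach the next multiple
    pad_w = (-w) % multiple  # columns needed to reach the next multiple
    # F.pad expects (left, right, top, bottom) for the last two dimensions.
    padded = F.pad(x, (0, pad_w, 0, pad_h))
    return padded, (h, w)


def crop_to_size(y, size):
    """Undo pad_to_multiple by cropping back to the original height and width."""
    h, w = size
    return y[..., :h, :w]


x = torch.zeros(1, 4, 100, 130)
padded, original_size = pad_to_multiple(x)
print(tuple(padded.shape))                               # (1, 4, 112, 144)
print(tuple(crop_to_size(padded, original_size).shape))  # (1, 4, 100, 130)
```

With a model at hand, the pattern would be `logits = crop_to_size(model(padded), original_size)`.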

5. Train the model

Write a training script, train.py:

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.tensorboard import SummaryWriter

from models.unet import UNet
from utils.data_loader import get_data_loader


def train_model(data_loader, model, criterion, optimizer, num_epochs, device, writer):
    model.train()
    for epoch in range(num_epochs):
        running_loss = 0.0
        for inputs, masks in data_loader:
            inputs, masks = inputs.to(device), masks.to(device)

            optimizer.zero_grad()
            outputs = model(inputs)           # raw logits, N x 1 x H x W
            loss = criterion(outputs, masks)  # masks must be float, same shape
            loss.backward()
            optimizer.step()

            # Weight the batch loss by batch size to get a per-sample average
            running_loss += loss.item() * inputs.size(0)

        epoch_loss = running_loss / len(data_loader.dataset)
        print(f'Epoch [{epoch+1}/{num_epochs}], Loss: {epoch_loss:.4f}')
        writer.add_scalar('Training Loss', epoch_loss, epoch)


def main():
    # Training parameters
    csv_file = 'data/metadata.csv'
    root_dir = 'data/'
    batch_size = 4
    num_epochs = 50
    learning_rate = 0.001
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
    writer = SummaryWriter('runs/forest_fire_detection')

    # Load the data
    data_loader = get_data_loader(csv_file, root_dir, batch_size, shuffle=True)

    # Initialise the model, loss function and optimizer
    # (in_channels must match the number of channels in the input images)
    model = UNet(in_channels=4, out_channels=1).to(device)
    criterion = nn.BCEWithLogitsLoss()
    optimizer = optim.Adam(model.parameters(), lr=learning_rate)

    # Train
    train_model(data_loader, model, criterion, optimizer, num_epochs, device, writer)
    writer.close()

    # Save the model weights
    torch.save(model.state_dict(), 'models/forest_fire_unet.pth')


if __name__ == '__main__':
    main()
```

6. Inference and visualization

Write an inference script, infer.py, that loads the trained model and predicts on a new image:

```python
import matplotlib.pyplot as plt
import numpy as np
import torch
from skimage import io

from models.unet import UNet

IN_CHANNELS = 4  # must match the value used in train.py


def load_model(weights_path, device):
    model = UNet(in_channels=IN_CHANNELS, out_channels=1)
    model.load_state_dict(torch.load(weights_path, map_location=device))
    model.to(device)
    model.eval()
    return model


def infer_image(model, img_path, device):
    # Read the image the same way the training dataset does (H x W x C)
    img = io.imread(img_path)
    if img.ndim != 3 or img.shape[2] != IN_CHANNELS:
        raise ValueError(f'Expected an H x W x {IN_CHANNELS} image, got shape {img.shape}')

    # Scale 8-bit values to [0, 1] and reorder to 1 x C x H x W
    img = img.astype(np.float32) / 255.0
    img = img.transpose(2, 0, 1)
    img = torch.from_numpy(img).unsqueeze(0).to(device)

    with torch.no_grad():
        output = model(img)
        output = torch.sigmoid(output)  # logits -> burn probability per pixel
    output = output.squeeze().cpu().numpy()
    return output


def visualize_results(original_img, predicted_mask):
    fig, axs = plt.subplots(1, 2, figsize=(12, 6))
    axs[0].imshow(original_img[..., :3])  # display the first three channels
    axs[0].set_title('Original Image')
    axs[1].imshow(predicted_mask, cmap='gray')
    axs[1].set_title('Predicted Mask')
    plt.show()


if __name__ == '__main__':
    weights_path = 'models/forest_fire_unet.pth'
    # Example path; the file must contain IN_CHANNELS channels
    img_path = 'data/sentinel2/pre_fire/000001.jpg'
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    model = load_model(weights_path, device)
    predicted_mask = infer_image(model, img_path, device)

    original_img = io.imread(img_path)
    visualize_results(original_img, predicted_mask)
```
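The probability map returned by `infer_image` can be converted into a burned-area figure by counting the pixels above a threshold and multiplying by the ground area each pixel covers. The sketch below assumes a 10 m pixel size, which corresponds to Sentinel-2's 10 m bands; the actual resolution of the images in this dataset is not stated here, so `pixel_size_m` should be set accordingly. The function name `burned_area_hectares` is hypothetical.

```python
import numpy as np


def burned_area_hectares(prob_mask, pixel_size_m=10.0, threshold=0.5):
    """Estimate burned area in hectares from a per-pixel burn-probability map.

    pixel_size_m is the side length of one pixel on the ground, in metres
    (assumed to be 10 m here; adjust to the data actually used).
    """
    burned_pixels = int(np.count_nonzero(prob_mask > threshold))
    area_m2 = burned_pixels * pixel_size_m ** 2
    return area_m2 / 10_000.0  # 1 hectare = 10,000 square metres


# Toy probability map: a 10 x 25 block of confident "burned" pixels.
prob = np.zeros((100, 100), dtype=np.float32)
prob[:10, :25] = 0.9
print(burned_area_hectares(prob))  # 250 pixels * 100 m^2 = 2.5 ha
```

This yields an area estimate only; grading severity would need additional information beyond a binary mask.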

7. Evaluation metrics

Write a script, evaluate.py, that computes evaluation metrics for the model (such as IoU and the Dice coefficient):

```python
import numpy as np
import torch

from models.unet import UNet
from utils.data_loader import get_data_loader
from utils.metrics import iou_score, dice_coefficient


def evaluate_model(data_loader, model, device):
    model.eval()
    ious = []
    dices = []

    with torch.no_grad():
        for inputs, masks in data_loader:
            inputs, masks = inputs.to(device), masks.to(device)

            outputs = model(inputs)
            outputs = torch.sigmoid(outputs)   # logits -> probabilities
            outputs = (outputs > 0.5).float()  # probabilities -> binary mask

            iou = iou_score(outputs, masks)
            dice = dice_coefficient(outputs, masks)
            ious.append(iou.item())
            dices.append(dice.item())

    # Average of the per-batch scores
    avg_iou = np.mean(ious)
    avg_dice = np.mean(dices)
    print(f'Average IoU: {avg_iou:.4f}')
    print(f'Average Dice Coefficient: {avg_dice:.4f}')


def main():
    csv_file = 'data/metadata.csv'
    root_dir = 'data/'
    batch_size = 4
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    # Load the data
    data_loader = get_data_loader(csv_file, root_dir, batch_size, shuffle=False)

    # Load the model
    model = UNet(in_channels=4, out_channels=1)
    model.load_state_dict(torch.load('models/forest_fire_unet.pth', map_location=device))
    model.to(device)

    # Evaluate the model
    evaluate_model(data_loader, model, device)


if __name__ == '__main__':
    main()
```
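evaluate.py imports `iou_score` and `dice_coefficient` from utils/metrics.py, but that module is not listed in this article. The following is a minimal sketch of what it might contain, assuming both functions receive binary prediction and target tensors of the same shape and return a scalar tensor (which is what the `.item()` calls in evaluate.py require). The `eps` smoothing term is an assumption added to avoid division by zero when both masks are empty.

```python
import torch


def iou_score(pred, target, eps=1e-7):
    """Intersection over union of two binary masks, pooled over the whole batch."""
    pred = pred.float().flatten()
    target = target.float().flatten()
    intersection = (pred * target).sum()
    union = pred.sum() + target.sum() - intersection
    # eps keeps the ratio defined (and equal to 1) when both masks are empty.
    return (intersection + eps) / (union + eps)


def dice_coefficient(pred, target, eps=1e-7):
    """Dice coefficient 2|A∩B| / (|A| + |B|) of two binary masks, pooled over the batch."""
    pred = pred.float().flatten()
    target = target.float().flatten()
    intersection = (pred * target).sum()
    return (2 * intersection + eps) / (pred.sum() + target.sum() + eps)


# Worked example: 2 predicted pixels, 1 true pixel, 1 pixel in common.
pred = torch.tensor([[1.0, 1.0], [0.0, 0.0]])
target = torch.tensor([[1.0, 0.0], [0.0, 0.0]])
print(iou_score(pred, target).item())         # 1 / 2, about 0.5
print(dice_coefficient(pred, target).item())  # 2 / 3, about 0.667
```

Both functions pool all pixels in a batch before taking the ratio, so the averages printed by evaluate.py are means over batches, not over individual images.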

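8. Run the project

Make sure the dataset and label files are prepared and placed in the corresponding directories.

Run the training script:

```bash
python train.py
```

Run the inference script:

```bash
python infer.py
```

Run the evaluation script:

```bash
python evaluate.py
```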

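9. Code overview

Data loading: utils/data_loader.py reads the metadata from the CSV file and loads the images and masks.

Model definition: models/unet.py defines a UNet model for the image segmentation task.

Training: train.py loads the data, initialises the model, loss function and optimizer, and runs training.

Inference and visualization: infer.py loads the trained model, predicts on a new image and displays the result.

Evaluation metrics: evaluate.py computes evaluation metrics for the model, such as IoU and the Dice coefficient.

Hopefully this code and these notes help you complete a project on burned-area estimation and severity assessment from remote-sensing imagery.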
