Python + Large AI Model Application Development in Practice: Building an Intelligent Chat System from Scratch

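This article walks through the full process of building an intelligent chat web application on top of a large AI model with Python. The project uses the FastAPI framework and the OpenAI API, and implements conversation handling, chat history, and per-session user settings. It covers environment setup, database design, API development, and the front-end page, and closes with a Docker-based deployment setup. The result is a compact, end-to-end example that developers can study and extend.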

Part 1: Technical Background and Project Overview

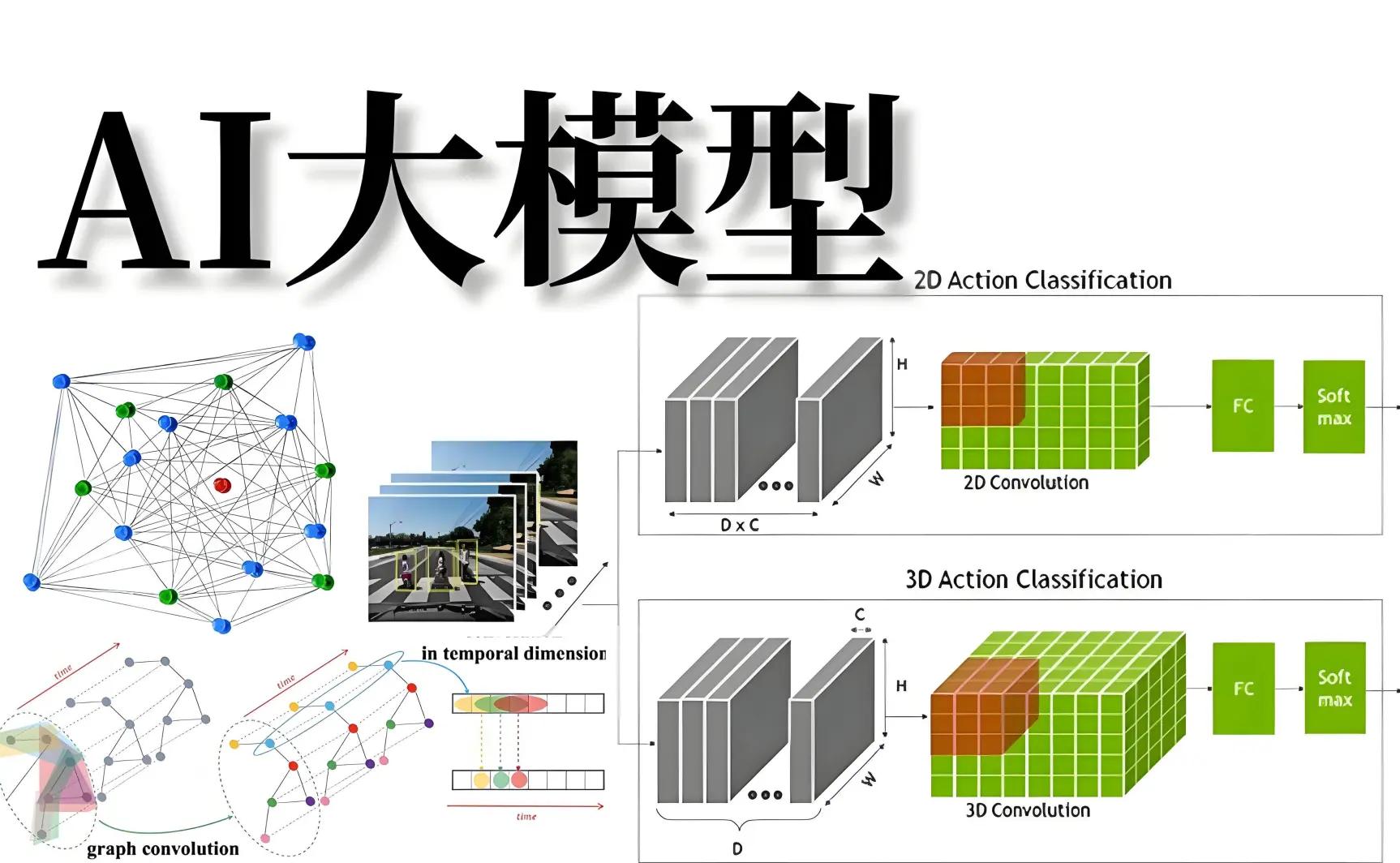

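1.1 The Current State of Large AI Models

Large AI models have advanced rapidly in recent years. From the GPT series to Chinese models such as ERNIE Bot (文心一言) and Tongyi Qianwen (通义千问), they are changing how people interact with computers. Python is the most widely used language for AI development, with a rich ecosystem and mature tooling, which makes it a natural choice for building AI applications.

1.2 Why Python?

- Rich AI libraries: TensorFlow, PyTorch, the OpenAI SDK, and more

- Capable web frameworks: FastAPI, Django, Flask

- Mature tooling: virtual environments, package management, test frameworks

- An active community: a large body of open-source projects and tutorials

1.3 Project Goals

In this tutorial we use Python to build a complete chat web application with the following features:

- Chat backed by a large language model

- Multi-turn conversation context

- Per-session chat history

- A modern web interface

- A setup that can be deployed in containers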

Part 2: Environment Setup and Basic Configuration

2.1 Setting Up Python

First make sure Python 3.8 or later is installed on your system:

# Check the Python version
python --version
# or
python3 --version
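
The same check can be scripted, which is handy at the top of a setup script. This is a minimal sketch; the helper name is_supported and the constant MIN_VERSION are illustrative and not part of the project.

```python
import sys

MIN_VERSION = (3, 8)  # minimum version stated in this tutorial


def is_supported(version_info=sys.version_info, minimum=MIN_VERSION):
    """Return True when the (major, minor) version is at least `minimum`."""
    return tuple(version_info[:2]) >= tuple(minimum)


if __name__ == "__main__":
    current = ".".join(str(part) for part in sys.version_info[:3])
    status = "OK" if is_supported() else "too old"
    print(f"Python {current}: {status}")
```

Comparing tuples avoids the pitfalls of comparing version strings, where "3.10" would sort before "3.8".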

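2.2 Creating a Virtual Environment

Use a virtual environment to isolate the project's dependencies:

# Create the project directory
mkdir ai-chat-app
cd ai-chat-app

# Create the virtual environment
python -m venv venv

# Activate the virtual environment
# Windows
venv\Scripts\activate
# Linux/Mac
source venv/bin/activate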

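2.3 Installing Dependencies

Create a requirements.txt file. Two packages are listed in addition to the core stack: jinja2, which FastAPI's Jinja2Templates needs at runtime, and gunicorn, which the production configuration in section 5.2 uses:

fastapi==0.104.1
uvicorn==0.24.0
openai==1.3.0
sqlalchemy==2.0.23
alembic==1.12.1
python-multipart==0.0.6
python-dotenv==1.0.0
jinja2==3.1.2
gunicorn==21.2.0

Install the dependencies:

pip install -r requirements.txt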
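
After installation, it can help to confirm what actually landed in the virtual environment. The sketch below uses only the standard library's importlib.metadata; the function name installed_versions is illustrative.

```python
from importlib import metadata


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    result = {}
    for name in packages:
        try:
            result[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            result[name] = None
    return result


if __name__ == "__main__":
    wanted = ["fastapi", "uvicorn", "openai", "sqlalchemy",
              "alembic", "python-multipart", "python-dotenv"]
    for name, version in installed_versions(wanted).items():
        print(f"{name:20} {version or 'NOT INSTALLED'}")
```

Running it inside the activated environment prints one line per package, so a missing or mismatched dependency shows up before the application is started.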

Part 3: Building the Core Features

3.1 Project Structure

Start by laying out the project:

ai-chat-app/
├── app/
│   ├── __init__.py
│   ├── main.py                # FastAPI application entry point
│   ├── models.py              # Data models
│   ├── database.py            # Database configuration
│   ├── api/
│   │   ├── __init__.py
│   │   └── chat.py            # Chat API
│   └── services/
│       ├── __init__.py
│       └── openai_service.py  # OpenAI service
├── static/                    # Static files
├── templates/                 # Templates
├── requirements.txt
├── .env                       # Environment variables
└── README.md
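
The layout above can be created by hand, or with a short script. The following is a sketch under the assumption that empty placeholder files are acceptable as a starting point; the function name scaffold and the three constants are illustrative.

```python
from pathlib import Path

# Directories that are Python packages (each gets an __init__.py)
PACKAGE_DIRS = ["app", "app/api", "app/services"]
# Plain directories without __init__.py
PLAIN_DIRS = ["static", "templates"]
# Files created empty; existing files are left untouched
EMPTY_FILES = [
    "app/main.py", "app/models.py", "app/database.py",
    "app/api/chat.py", "app/services/openai_service.py",
    "requirements.txt", ".env", "README.md",
]


def scaffold(root):
    """Create the tutorial's directory layout under `root` and return it."""
    root = Path(root)
    for directory in PACKAGE_DIRS:
        (root / directory).mkdir(parents=True, exist_ok=True)
        (root / directory / "__init__.py").touch()
    for directory in PLAIN_DIRS:
        (root / directory).mkdir(parents=True, exist_ok=True)
    for file_name in EMPTY_FILES:
        (root / file_name).touch()  # touch() does not truncate existing files
    return root
```

Calling scaffold("ai-chat-app") from the parent directory is safe to repeat, since every step tolerates paths that already exist.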

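3.2 Creating the FastAPI Application

Create app/main.py. The static/ and templates/ directories must already exist, because StaticFiles raises an error at startup when its directory is missing:

from fastapi import FastAPI
from fastapi.staticfiles import StaticFiles
from fastapi.templating import Jinja2Templates
from fastapi.middleware.cors import CORSMiddleware

# Create the FastAPI application instance
app = FastAPI(
    title="AI Chat System",
    description="A chat application built with Python and a large AI model",
    version="1.0.0",
)

# Configure the CORS middleware.
# A wildcard origin cannot be combined with credentials, so credentials stay
# off here; list explicit origins before enabling them in production.
app.add_middleware(
    CORSMiddleware,
    allow_origins=["*"],
    allow_credentials=False,
    allow_methods=["*"],
    allow_headers=["*"],
)

# Configure static files and templates
app.mount("/static", StaticFiles(directory="static"), name="static")
templates = Jinja2Templates(directory="templates")


@app.get("/")
async def root():
    return {"message": "AI chat system API is running"}


@app.get("/health")
async def health_check():
    return {"status": "healthy"}


if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="0.0.0.0", port=8000)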

3.3 Database Models

Create app/models.py:

from datetime import datetime

from sqlalchemy import Column, DateTime, Integer, String, Text
# In SQLAlchemy 2.0, declarative_base lives in sqlalchemy.orm
from sqlalchemy.orm import declarative_base

Base = declarative_base()


class Conversation(Base):
    """One user message together with the AI reply it produced."""
    __tablename__ = "conversations"

    id = Column(Integer, primary_key=True, index=True)
    session_id = Column(String(100), index=True, nullable=False)
    user_message = Column(Text, nullable=False)
    ai_response = Column(Text, nullable=False)
    created_at = Column(DateTime, default=datetime.utcnow)
    model_used = Column(String(50), default="gpt-3.5-turbo")


class UserSettings(Base):
    """Per-session generation settings."""
    __tablename__ = "user_settings"

    id = Column(Integer, primary_key=True, index=True)
    session_id = Column(String(100), unique=True, index=True)
    # Stored as an integer 0-10 (default 7) and divided by 10 before the API call
    temperature = Column(Integer, default=7)
    max_tokens = Column(Integer, default=1000)
    model_preference = Column(String(50), default="gpt-3.5-turbo")
    created_at = Column(DateTime, default=datetime.utcnow)
    updated_at = Column(DateTime, default=datetime.utcnow, onupdate=datetime.utcnow)
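
UserSettings stores temperature as an integer from 0 to 10, while the chat endpoint passes temperature / 10.0 to the model. The conversion in both directions can be sketched as below; the helper names and the clamping behaviour are assumptions for illustration, since the original code divides without range checks.

```python
def to_api_temperature(stored: int, scale: int = 10) -> float:
    """Convert a stored integer in 0..scale to a float in 0.0..1.0, clamping out-of-range input."""
    clamped = max(0, min(scale, stored))
    return clamped / scale


def to_stored_temperature(value: float, scale: int = 10) -> int:
    """Convert a float in 0.0..1.0 back to the nearest stored integer, clamping out-of-range input."""
    clamped = max(0.0, min(1.0, value))
    return round(clamped * scale)
```

Storing an integer avoids floating-point columns, at the cost of a resolution of 0.1 and one conversion at each boundary.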

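3.4 Database Configuration

Create app/database.py:

import os

from sqlalchemy import create_engine
from sqlalchemy.orm import sessionmaker

from app.models import Base

# Database URL, defaulting to a local SQLite file
DATABASE_URL = os.getenv("DATABASE_URL", "sqlite:///./chat_app.db")

# check_same_thread is a SQLite-only option; other drivers reject it
connect_args = {"check_same_thread": False} if DATABASE_URL.startswith("sqlite") else {}

# Create the database engine
engine = create_engine(DATABASE_URL, connect_args=connect_args)

# Session factory
SessionLocal = sessionmaker(autocommit=False, autoflush=False, bind=engine)


def create_tables():
    """Create all tables that do not exist yet."""
    Base.metadata.create_all(bind=engine)


def get_db():
    """FastAPI dependency that yields a session and always closes it."""
    db = SessionLocal()
    try:
        yield db
    finally:
        db.close()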
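
The get_db function relies on the yield-dependency pattern: the code before yield runs before the handler, and the finally block runs afterwards even when the handler raises. The sketch below imitates that lifecycle with a stand-in session class so it runs without a database; FakeSession, get_fake_db, and run_with_dependency are illustrative names, and the driver is a simplification of what the framework does.

```python
class FakeSession:
    """Stand-in for a database session that only tracks whether it was closed."""

    def __init__(self):
        self.closed = False

    def close(self):
        self.closed = True


def get_fake_db():
    """Same shape as get_db: yield the session, close it no matter what."""
    db = FakeSession()
    try:
        yield db
    finally:
        db.close()


def run_with_dependency(handler):
    """Drive the generator roughly the way a framework would around one request."""
    gen = get_fake_db()
    db = next(gen)          # runs the code before `yield`
    try:
        return handler(db), db
    finally:
        gen.close()         # resumes the generator so its `finally` block runs
```

Because cleanup sits in finally, a failing handler cannot leak the session.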

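3.5 Wrapping the OpenAI Service

Create app/services/openai_service.py. The methods are coroutines, so the asynchronous client (AsyncOpenAI) is used; calling the synchronous client inside async def would block the event loop for the duration of each request:

import os
from typing import AsyncIterator, Dict, List

from dotenv import load_dotenv
from openai import AsyncOpenAI

# Load .env so OPENAI_API_KEY is available when this module is imported
load_dotenv()


class OpenAIService:
    def __init__(self):
        # Read the API key from the environment
        api_key = os.getenv("OPENAI_API_KEY")
        if not api_key:
            raise ValueError("The OPENAI_API_KEY environment variable is not set")
        self.client = AsyncOpenAI(api_key=api_key)

    async def chat_completion(
        self,
        messages: List[Dict[str, str]],
        model: str = "gpt-3.5-turbo",
        temperature: float = 0.7,
        max_tokens: int = 1000,
    ) -> str:
        """Call the OpenAI chat completions API and return the reply text."""
        try:
            response = await self.client.chat.completions.create(
                model=model,
                messages=messages,
                temperature=temperature,
                max_tokens=max_tokens,
                stream=False,
            )
            return response.choices[0].message.content or ""
        except Exception as e:
            raise RuntimeError(f"OpenAI API call failed: {e}") from e

    async def stream_chat_completion(
        self,
        messages: List[Dict[str, str]],
        model: str = "gpt-3.5-turbo",
        temperature: float = 0.7,
        max_tokens: int = 1000,
    ) -> AsyncIterator[str]:
        """Stream the reply piece by piece (for a live typing effect)."""
        try:
            response = await self.client.chat.completions.create(
                model=model,
                messages=messages,
                temperature=temperature,
                max_tokens=max_tokens,
                stream=True,
            )
            async for chunk in response:
                # Some chunks carry no choices or an empty delta
                if chunk.choices and chunk.choices[0].delta.content is not None:
                    yield chunk.choices[0].delta.content
        except Exception as e:
            # Raise instead of yielding the error text, so callers never
            # mistake an error message for model output
            raise RuntimeError(f"OpenAI streaming call failed: {e}") from e


# Module-level instance shared by the API layer
openai_service = OpenAIService()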
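
The streaming method boils down to one loop: iterate over incoming deltas, skip the empty ones, and pass the text on. That loop can be exercised without network access by substituting a fake stream. In this sketch, fake_delta_stream and collect_text are illustrative names, and the fake stream only imitates the shape of the data (strings and None), not the SDK's chunk objects.

```python
import asyncio
from typing import AsyncIterator, Optional


async def fake_delta_stream() -> AsyncIterator[Optional[str]]:
    """Imitate a streamed reply: text fragments mixed with empty (None) deltas."""
    for piece in ["Hel", None, "lo", ", ", "world", None]:
        await asyncio.sleep(0)  # hand control back to the event loop, as real I/O would
        yield piece


async def collect_text(deltas: AsyncIterator[Optional[str]]) -> str:
    """Join all non-empty deltas into the full reply."""
    parts = []
    async for delta in deltas:
        if delta is not None:
            parts.append(delta)
    return "".join(parts)


if __name__ == "__main__":
    print(asyncio.run(collect_text(fake_delta_stream())))
```

A fake like this also makes it possible to unit-test code that consumes the stream without spending API credits.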

3.6 Implementing the Chat API

Create app/api/chat.py. The front end posts a JSON body, so the endpoint declares a Pydantic model; bare str parameters would be read from the query string and the JSON request would be rejected with a 422 error:

import uuid
from datetime import datetime
from typing import Dict, List, Optional

from fastapi import APIRouter, Depends, HTTPException
from pydantic import BaseModel
from sqlalchemy.orm import Session

from app.database import get_db
from app.models import Conversation, UserSettings
from app.services.openai_service import openai_service

router = APIRouter(prefix="/api/chat", tags=["chat"])


class ChatRequest(BaseModel):
    """JSON body accepted by POST /api/chat/message."""
    message: str
    session_id: Optional[str] = None


@router.post("/message")
async def send_message(request: ChatRequest, db: Session = Depends(get_db)):
    """Send a message and return the AI reply."""
    message = request.message
    # Reuse the caller's session_id or generate a new one
    session_id = request.session_id or str(uuid.uuid4())

    # Load the user settings
    user_settings = db.query(UserSettings).filter(
        UserSettings.session_id == session_id
    ).first()

    if not user_settings:
        # Create default settings for a new session
        user_settings = UserSettings(
            session_id=session_id,
            temperature=7,
            max_tokens=1000,
            model_preference="gpt-3.5-turbo",
        )
        db.add(user_settings)
        db.commit()
        db.refresh(user_settings)

    # Build the conversation history and append the new user message
    conversation_history = await get_conversation_history(session_id, db)
    messages = conversation_history + [{"role": "user", "content": message}]

    try:
        # Call the OpenAI service
        response = await openai_service.chat_completion(
            messages=messages,
            model=user_settings.model_preference,
            temperature=user_settings.temperature / 10.0,  # map 0-10 to 0.0-1.0
            max_tokens=user_settings.max_tokens,
        )

        # Save the exchange
        conversation = Conversation(
            session_id=session_id,
            user_message=message,
            ai_response=response,
            model_used=user_settings.model_preference,
        )
        db.add(conversation)
        db.commit()

        return {
            "session_id": session_id,
            "user_message": message,
            "ai_response": response,
            "timestamp": datetime.utcnow().isoformat(),
        }
    except Exception as e:
        raise HTTPException(status_code=500, detail=str(e))


@router.get("/history/{session_id}")
async def get_chat_history(
    session_id: str,
    limit: int = 50,
    db: Session = Depends(get_db),
):
    """Return the chat history of a session."""
    conversations = db.query(Conversation).filter(
        Conversation.session_id == session_id
    ).order_by(Conversation.created_at.desc()).limit(limit).all()

    return [
        {
            "id": conv.id,
            "user_message": conv.user_message,
            "ai_response": conv.ai_response,
            "created_at": conv.created_at.isoformat(),
            "model_used": conv.model_used,
        }
        for conv in reversed(conversations)  # return in chronological order
    ]


async def get_conversation_history(session_id: str, db: Session) -> List[Dict[str, str]]:
    """Return the 10 most recent exchanges as chat messages, oldest first."""
    # Sort newest-first so LIMIT keeps the latest rows (ascending order would
    # keep the first 10 ever stored), then reverse into chronological order
    conversations = (
        db.query(Conversation)
        .filter(Conversation.session_id == session_id)
        .order_by(Conversation.created_at.desc(), Conversation.id.desc())
        .limit(10)
        .all()
    )

    messages = []
    for conv in reversed(conversations):
        messages.append({"role": "user", "content": conv.user_message})
        messages.append({"role": "assistant", "content": conv.ai_response})
    return messages
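
Multi-turn context comes down to turning stored (user, assistant) pairs into the alternating message list the chat API expects, keeping only the most recent turns. The sketch below shows that step as a pure function without a database; build_messages and max_turns are illustrative names, with max_turns playing the role of the limit of 10 used above.

```python
from typing import Dict, List, Tuple


def build_messages(
    history: List[Tuple[str, str]],
    new_message: str,
    max_turns: int = 10,
) -> List[Dict[str, str]]:
    """Turn (user, assistant) pairs plus a new user message into a chat message list.

    `history` is expected oldest-first; only the last `max_turns` pairs are kept.
    """
    recent = history[-max_turns:] if max_turns > 0 else []
    messages: List[Dict[str, str]] = []
    for user_text, ai_text in recent:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": ai_text})
    messages.append({"role": "user", "content": new_message})
    return messages
```

Trimming by turn count is the simplest policy; since each turn can be arbitrarily long, a token-based budget would be a more precise way to stay within the model's context window.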

3.7 Building the Front End

Create templates/index.html:

<!DOCTYPE html>
<html lang="en">
<head>
  <meta charset="UTF-8">
  <meta name="viewport" content="width=device-width, initial-scale=1.0">
  <title>AI Chat System</title>
  <script src="https://unpkg.com/vue@3/dist/vue.global.js"></script>
  <link rel="stylesheet" href="https://unpkg.com/element-plus/dist/index.css">
  <script src="https://unpkg.com/element-plus"></script>
  <style>
    body {
      margin: 0;
      padding: 0;
      font-family: -apple-system, BlinkMacSystemFont, 'Segoe UI', Roboto, sans-serif;
      background: linear-gradient(135deg, #667eea 0%, #764ba2 100%);
      min-height: 100vh;
    }
    .chat-container {
      max-width: 800px;
      margin: 0 auto;
      background: white;
      border-radius: 10px;
      box-shadow: 0 10px 30px rgba(0,0,0,0.1);
      overflow: hidden;
      height: 90vh;
      display: flex;
      flex-direction: column;
    }
    .chat-header {
      background: #2c3e50;
      color: white;
      padding: 20px;
      text-align: center;
    }
    .chat-messages {
      flex: 1;
      padding: 20px;
      overflow-y: auto;
      background: #f8f9fa;
    }
    .message {
      margin-bottom: 15px;
      display: flex;
    }
    .user-message { justify-content: flex-end; }
    .ai-message { justify-content: flex-start; }
    .message-bubble {
      max-width: 70%;
      padding: 12px 16px;
      border-radius: 18px;
      word-wrap: break-word;
    }
    .user-bubble {
      background: #007bff;
      color: white;
    }
    .ai-bubble {
      background: white;
      border: 1px solid #e9ecef;
    }
    .chat-input {
      padding: 20px;
      border-top: 1px solid #e9ecef;
      background: white;
    }
    .typing-indicator {
      display: flex;
      align-items: center;
      color: #6c757d;
      font-style: italic;
    }
    .dot {
      width: 8px;
      height: 8px;
      border-radius: 50%;
      background: #6c757d;
      margin: 0 2px;
      animation: bounce 1.4s infinite ease-in-out;
    }
    .dot:nth-child(1) { animation-delay: -0.32s; }
    .dot:nth-child(2) { animation-delay: -0.16s; }
    @keyframes bounce {
      0%, 80%, 100% { transform: scale(0); }
      40% { transform: scale(1); }
    }
  </style>
</head>

<body>
  {# The page is rendered by Jinja2, which would try to evaluate the Vue mustache expressions below, so the Vue markup is wrapped in a raw block #}
  {% raw %}
  <div id="app">
    <div class="chat-container">
      <div class="chat-header">
        <h2>🤖 AI Chat System</h2>
        <p>A chat application built with Python and a large AI model</p>
      </div>
      <div class="chat-messages" ref="messagesContainer">
        <div v-for="message in messages" :key="message.id"
             :class="['message', message.role === 'user' ? 'user-message' : 'ai-message']">
          <div :class="['message-bubble', message.role === 'user' ? 'user-bubble' : 'ai-bubble']">
            <div v-if="message.role === 'user'">
              <strong>You:</strong> {{ message.content }}
            </div>
            <div v-else>
              <strong>AI assistant:</strong>
              <span v-if="message.isTyping" class="typing-indicator">
                <span class="dot"></span>
                <span class="dot"></span>
                <span class="dot"></span>
              </span>
              <span v-else>{{ message.content }}</span>
            </div>
          </div>
        </div>
      </div>
      <div class="chat-input">
        <el-input
          v-model="inputMessage"
          placeholder="Type your question..."
          @keyup.enter="sendMessage"
          :disabled="isLoading"
        >
          <template #append>
            <el-button
              @click="sendMessage"
              :loading="isLoading"
              type="primary"
            >
              Send
            </el-button>
          </template>
        </el-input>
      </div>
    </div>
  </div>
  {% endraw %}

  <script>
    const { createApp, ref, reactive, onMounted, nextTick } = Vue;
    const { ElMessage } = ElementPlus;

    createApp({
      setup() {
        const messages = ref([]);
        const inputMessage = ref('');
        const isLoading = ref(false);
        const messagesContainer = ref(null);

        // Reuse the session ID across page loads; generating a fresh one on
        // every load would make the stored history unreachable.
        // 'chat_session_id' is an arbitrary localStorage key.
        const sessionId = ref(localStorage.getItem('chat_session_id') || '');
        if (!sessionId.value) {
          sessionId.value = generateSessionId();
          localStorage.setItem('chat_session_id', sessionId.value);
        }

        function generateSessionId() {
          return 'session_' + Date.now() + '_' + Math.random().toString(36).slice(2, 11);
        }

        async function loadChatHistory() {
          try {
            const response = await fetch(`/api/chat/history/${sessionId.value}`);
            if (response.ok) {
              const history = await response.json();
              // Each stored row becomes a user message followed by the AI reply
              messages.value = history.flatMap(item => [
                { id: item.id, role: 'user', content: item.user_message, timestamp: item.created_at },
                { id: item.id + '_ai', role: 'assistant', content: item.ai_response, timestamp: item.created_at }
              ]);
              scrollToBottom();
            }
          } catch (error) {
            console.error('Failed to load chat history:', error);
          }
        }

        async function sendMessage() {
          if (!inputMessage.value.trim() || isLoading.value) return;

          const userMessage = inputMessage.value.trim();
          inputMessage.value = '';

          // Add the user message
          messages.value.push({
            id: Date.now(),
            role: 'user',
            content: userMessage,
            timestamp: new Date().toISOString()
          });

          // Add a placeholder for the AI reply; reactive() makes the later
          // updates to this object visible to Vue
          const aiMsg = reactive({
            id: Date.now() + 1,
            role: 'assistant',
            content: '',
            isTyping: true,
            timestamp: new Date().toISOString()
          });
          messages.value.push(aiMsg);
          scrollToBottom();
          isLoading.value = true;

          try {
            const response = await fetch('/api/chat/message', {
              method: 'POST',
              headers: { 'Content-Type': 'application/json' },
              body: JSON.stringify({
                message: userMessage,
                session_id: sessionId.value
              })
            });

            if (response.ok) {
              const data = await response.json();
              aiMsg.content = data.ai_response;
              aiMsg.isTyping = false;
            } else {
              throw new Error('Failed to send message (HTTP ' + response.status + ')');
            }
          } catch (error) {
            aiMsg.content = 'Sorry, something went wrong: ' + error.message;
            aiMsg.isTyping = false;
            ElMessage.error('Failed to send message');
          } finally {
            isLoading.value = false;
            scrollToBottom();
          }
        }

        function scrollToBottom() {
          nextTick(() => {
            if (messagesContainer.value) {
              messagesContainer.value.scrollTop = messagesContainer.value.scrollHeight;
            }
          });
        }

        onMounted(() => {
          loadChatHistory();
          scrollToBottom();
        });

        return {
          messages,
          inputMessage,
          isLoading,
          messagesContainer,
          sendMessage
        };
      }
    }).use(ElementPlus).mount('#app');
  </script>
</body>
</html>

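3.8 Updating the Main Application File

Update app/main.py to register the router and serve the page. Because index.html is rendered through Jinja2, any Vue {{ ... }} expressions in it must sit inside a {% raw %} ... {% endraw %} block; otherwise Jinja2 tries to evaluate them and the request fails:

from fastapi import FastAPI, Request
from fastapi.staticfiles import StaticFiles
from fastapi.templating import Jinja2Templates
from fastapi.middleware.cors import CORSMiddleware

from app.database import create_tables
from app.api import chat

# Create the database tables
create_tables()

# Create the FastAPI application instance
app = FastAPI(
    title="AI Chat System",
    description="A chat application built with Python and a large AI model",
    version="1.0.0",
)

# Configure the CORS middleware.
# A wildcard origin cannot be combined with credentials, so credentials stay
# off here; list explicit origins before enabling them in production.
app.add_middleware(
    CORSMiddleware,
    allow_origins=["*"],
    allow_credentials=False,
    allow_methods=["*"],
    allow_headers=["*"],
)

# Configure static files and templates
app.mount("/static", StaticFiles(directory="static"), name="static")
templates = Jinja2Templates(directory="templates")

# Register the routes
app.include_router(chat.router)


@app.get("/")
async def root(request: Request):
    return templates.TemplateResponse("index.html", {"request": request})


@app.get("/health")
async def health_check():
    return {"status": "healthy"}


if __name__ == "__main__":
    import uvicorn

    uvicorn.run(app, host="0.0.0.0", port=8000)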
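
Once the server is running, the chat endpoint can be smoke-tested from Python without a browser. The sketch below uses only the standard library and assumes the endpoint accepts the same JSON body that the front-end page sends; build_chat_request and send are illustrative names, and the base URL is whatever address the server is listening on.

```python
import json
import urllib.request


def build_chat_request(base_url, message, session_id=None):
    """Build a POST request for /api/chat/message with a JSON body."""
    payload = {"message": message}
    if session_id:
        payload["session_id"] = session_id
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/chat/message",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=30):
    """Send the request and decode the JSON response (requires a running server)."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

For example, send(build_chat_request("http://localhost:8000", "Hello")) returns the response dictionary when the server and a valid API key are in place. Passing the returned session_id to the next call continues the same conversation.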

Part 4: Advanced Features

4.1 Environment Variables

Create a .env file and keep it out of version control, since it holds the API key:

# OpenAI API configuration
OPENAI_API_KEY=your_openai_api_key_here

# Database configuration
DATABASE_URL=sqlite:///./chat_app.db

# Application configuration
DEBUG=True
HOST=0.0.0.0
PORT=8000

Create a config.py file:

import os

from dotenv import load_dotenv

# Read the variables from .env into the process environment
load_dotenv()


class Config:
    OPENAI_API_KEY = os.getenv("OPENAI_API_KEY")
    DATABASE_URL = os.getenv("DATABASE_URL", "sqlite:///./chat_app.db")
    DEBUG = os.getenv("DEBUG", "False").lower() == "true"
    HOST = os.getenv("HOST", "0.0.0.0")
    PORT = int(os.getenv("PORT", "8000"))
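
A variation on the Config class is to parse the environment into a typed, immutable object and fail early on bad values. This is a sketch rather than part of the project: Settings and load_settings are illustrative names, and taking the environment as a parameter is a design choice made here so the parsing can be tested without touching os.environ.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    openai_api_key: str
    database_url: str
    debug: bool
    host: str
    port: int


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Parse settings from an environment mapping, raising ValueError on bad input."""
    api_key = env.get("OPENAI_API_KEY", "")
    if not api_key:
        raise ValueError("OPENAI_API_KEY is not set")
    port = int(env.get("PORT", "8000"))
    if not 0 < port < 65536:
        raise ValueError(f"PORT out of range: {port}")
    return Settings(
        openai_api_key=api_key,
        database_url=env.get("DATABASE_URL", "sqlite:///./chat_app.db"),
        debug=env.get("DEBUG", "False").lower() == "true",
        host=env.get("HOST", "0.0.0.0"),
        port=port,
    )
```

Validating at startup turns a missing key into an immediate, readable error instead of a failure on the first chat request.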

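4.2 Streaming Responses

Extend app/api/chat.py with a streaming endpoint that sends the reply as server-sent events:

import json
from typing import Optional

from fastapi.responses import StreamingResponse
from pydantic import BaseModel


class StreamChatRequest(BaseModel):
    """JSON body accepted by POST /api/chat/message/stream."""
    message: str
    session_id: Optional[str] = None


@router.post("/message/stream")
async def stream_message(request: StreamChatRequest, db: Session = Depends(get_db)):
    """Stream the reply (live typing effect)."""
    message = request.message
    session_id = request.session_id or str(uuid.uuid4())

    # Load user_settings and build `messages` exactly as in send_message above
    # ...

    async def generate():
        try:
            full_response = ""
            async for chunk in openai_service.stream_chat_completion(
                messages=messages,
                model=user_settings.model_preference,
                temperature=user_settings.temperature / 10.0,
                max_tokens=user_settings.max_tokens,
            ):
                full_response += chunk
                # One server-sent event per chunk
                yield f"data: {json.dumps({'content': chunk})}\n\n"

            # Save the complete exchange once the stream has finished.
            # This relies on the session from get_db still being open here;
            # newer FastAPI releases changed when yield-dependencies are
            # cleaned up, so check this if you upgrade from the pinned version.
            conversation = Conversation(
                session_id=session_id,
                user_message=message,
                ai_response=full_response,
                model_used=user_settings.model_preference,
            )
            db.add(conversation)
            db.commit()
        except Exception as e:
            yield f"data: {json.dumps({'error': str(e)})}\n\n"

    return StreamingResponse(
        generate(),
        media_type="text/event-stream",
        headers={"Cache-Control": "no-cache"},
    )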
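
The wire format used by the streaming endpoint is plain text: each event is a line starting with "data: " followed by a blank line. The sketch below shows how such events can be produced and read back; format_sse and parse_sse are illustrative names, and the parser is simplified to one data line per event, which matches what the endpoint emits but not every case the SSE format allows.

```python
import json
from typing import Dict, List


def format_sse(payload: Dict) -> str:
    """Encode one payload as a server-sent event."""
    return f"data: {json.dumps(payload)}\n\n"


def parse_sse(stream_text: str) -> List[Dict]:
    """Decode a sequence of single-line `data:` events back into payloads."""
    events = []
    for block in stream_text.split("\n\n"):
        for line in block.splitlines():
            if line.startswith("data:"):
                events.append(json.loads(line[len("data:"):].strip()))
    return events
```

JSON-encoding each chunk matters here: json.dumps escapes newlines inside the model's text, so a chunk can never break the line-based framing.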

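Part 5: Deployment and Optimization

5.1 Containerizing with Docker

Create a Dockerfile. The non-root user must own /app, otherwise the application cannot create its SQLite database file:

FROM python:3.9-slim

WORKDIR /app

# Install system dependencies
RUN apt-get update && apt-get install -y \
    gcc \
    && rm -rf /var/lib/apt/lists/*

# Copy the dependency list
COPY requirements.txt .

# Install Python dependencies
RUN pip install --no-cache-dir -r requirements.txt

# Copy the application code
COPY . .

# Create a non-root user that owns /app, including the data directory
RUN useradd -m -u 1000 user \
    && mkdir -p /app/data \
    && chown -R user:user /app
USER user

# Expose the port
EXPOSE 8000

# Start command
CMD ["uvicorn", "app.main:app", "--host", "0.0.0.0", "--port", "8000"]

Create docker-compose.yml. The database file is placed under /app/data so that it lives on the mounted volume and survives container restarts; the host's ./data directory must be writable by UID 1000:

version: '3.8'

services:
  ai-chat-app:
    build: .
    ports:
      - "8000:8000"
    environment:
      - OPENAI_API_KEY=${OPENAI_API_KEY}
      - DATABASE_URL=sqlite:///./data/chat_app.db
    volumes:
      - ./data:/app/data
    restart: unless-stopped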

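5.2 Production Configuration

Create gunicorn_conf.py. This requires the gunicorn package (run pip install gunicorn if it is not already in your requirements.txt):

import multiprocessing

# Server binding
bind = "0.0.0.0:8000"

# Number of worker processes
workers = multiprocessing.cpu_count() * 2 + 1

# Worker class: run the ASGI app through Uvicorn
worker_class = "uvicorn.workers.UvicornWorker"

# Logging ("-" sends logs to stdout/stderr)
accesslog = "-"
errorlog = "-"
loglevel = "info"

# Timeouts, in seconds
timeout = 120
keepalive = 5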
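
The workers line uses the common rule of thumb of two workers per CPU core plus one. On machines with many cores, or in containers with little memory, it can be useful to cap that number. The sketch below adds such a cap; worker_count and the MAX_WORKERS environment variable are hypothetical names chosen for illustration, not Gunicorn settings.

```python
import multiprocessing
import os


def worker_count(cpus=None, cap=None):
    """Return cpus * 2 + 1, limited to `cap` when given, and never below 1."""
    if cpus is None:
        cpus = multiprocessing.cpu_count()
    count = cpus * 2 + 1
    if cap is not None:
        count = min(count, cap)
    return max(1, count)


if __name__ == "__main__":
    # MAX_WORKERS is a hypothetical variable name used only in this sketch
    cap_value = os.getenv("MAX_WORKERS")
    print(worker_count(cap=int(cap_value) if cap_value else None))
```

In gunicorn_conf.py the result could be assigned to workers. With SQLite as the database, fewer workers also means less contention on the single database file.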

5.3 Startup Script

Create start.sh:

#!/bin/bash

# Activate the virtual environment
source venv/bin/activate

# Start the application
if [ "$ENVIRONMENT" = "production" ]; then
    gunicorn -c gunicorn_conf.py app.main:app
else
    uvicorn app.main:app --host 0.0.0.0 --port 8000 --reload
fi

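Running the Complete Project

1. Prepare the environment

# Clone the project (if you keep it in a Git repository)
git clone <repository-url>
cd ai-chat-app

# Create the virtual environment
python -m venv venv
source venv/bin/activate  # Windows: venv\Scripts\activate

# Install dependencies
pip install -r requirements.txt

2. Configure environment variables

# Copy the environment template if the repository provides one;
# otherwise create .env by hand as described in section 4.1
cp .env.example .env

# Edit .env and set your OpenAI API key
OPENAI_API_KEY=sk-your-actual-api-key-here

3. Start the application

# Development mode
python -m uvicorn app.main:app --reload

# Or use the startup script
chmod +x start.sh
./start.sh

4. Open the application

Open http://localhost:8000 in a browser.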

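Summary

In this tutorial we built a complete chat system on top of Python and a large AI model. The project demonstrates:

- Modern Python development: FastAPI, SQLAlchemy, and related tooling

- AI integration: calling the OpenAI API for both regular and streamed replies

- End-to-end functionality: conversation handling, chat history, and per-session settings

- Deployment groundwork: a Docker image, a Compose file, and a Gunicorn configuration

Beyond being a working chat application, the project is a compact example for learning the core skills of building AI applications in Python.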
