10-1【mmaction2 行为识别商用级别】ava数据集缩小版 与 slowfast训练

ava数据集可视化B站视频:https://www.bilibili.com/video/BV1yL411c7pR?spm_id_from=333.999.0.0前言这个训练的跟踪过程使用的数据集是缩小版的,我只去了ava数据集中的2个视频,2个视频加起来也有30分钟,这个也不小了。1 mmaction2 安装1.1 安装在AI平台中选择如下版本镜像:安装命令如下:cd homegit clone

mmaction2官方github:https://github.com/open-mmlab/mmaction2

GPU平台:https://cloud.videojj.com/auth/register?inviter=18452&activityChannel=student_invite

ava数据集可视化B站视频:https://www.bilibili.com/video/BV1yL411c7pR?spm_id_from=333.999.0.0

本系列的链接

00【mmaction2 行为识别商用级别】快速搭建mmaction2 pytorch 1.6.0与 pytorch 1.8.0 版本

03【mmaction2 行为识别商用级别】使用mmaction搭建faster rcnn批量检测图片输出为via格式

04【mmaction2 行为识别商用级别】slowfast检测算法使用yolov3来检测人

!!!等论文发布后公开 !!!!05【mmaction2 行为识别商用级别】slowfast 与 yolov5融合(即检测部分使用yolov5)

!!!等论文发布后公开 !!!!06【mmaction2 行为识别商用级别】slowfast 与 yolov5与deepsort融合(即追踪部分使用deepsort)

!!!等论文发布后公开 !!!!07【mmaction2 行为识别商用级别】 yolov5 采用 yolov5-crowdhuman

08【mmaction2 行为识别商用级别】自定义ava数据集 之 将视频裁剪为帧

!!!等论文发布后公开 !!!!10-1【mmaction2 行为识别商用级别】ava数据集缩小版 与 slowfast训练

!!!等论文发布后公开 !!!!10-2【mmaction2 行为识别商用级别】修改ava数据集的帧ID 分析slowfast训练的数据集如何输入的

12【mmaction2 行为识别商用级别】X3D复现 demo实现 检测自己的视频 Expanding Architecturesfor Efficient Video Recognition

目录

前言

这个训练的跟踪过程使用的数据集是缩小版的,我只去了ava数据集中的2个视频,2个视频加起来也有30分钟,对我们普通人的GPU来说,这个也不小了。

1 mmaction2 安装

1.1 安装

在AI平台中选择如下版本镜像:

pytorch 1.8.0,python 3.8,CUDA 11.1.1

安装命令如下:

cd home

git clone https://gitee.com/YFwinston/mmaction2.git

pip install mmcv-full==1.3.17 -f https://download.openmmlab.com/mmcv/dist/cu111/torch1.8.0/index.html

pip install opencv-python-headless==4.1.2.30

pip install moviepy

cd mmaction2

pip install -r requirements/build.txt

pip install -v -e .

mkdir -p ./data/ava

cd ..

git clone https://gitee.com/YFwinston/mmdetection.git

cd mmdetection

pip install -r requirements/build.txt

pip install -v -e .

cd ../mmaction2

1.2 测试

有问题

python demo/demo_spatiotemporal_det.py --video demo/demo.mp4 \

--config configs/detection/ava/slowonly_omnisource_pretrained_r101_8x8x1_20e_ava_rgb.py \

--checkpoint https://download.openmmlab.com/mmaction/detection/ava/slowonly_omnisource_pretrained_r101_8x8x1_20e_ava_rgb/slowonly_omnisource_pretrained_r101_8x8x1_20e_ava_rgb_20201217-16378594.pth \

--det-config demo/faster_rcnn_r50_fpn_2x_coco.py \

--det-checkpoint http://download.openmmlab.com/mmdetection/v2.0/faster_rcnn/faster_rcnn_r50_fpn_2x_coco/faster_rcnn_r50_fpn_2x_coco_bbox_mAP-0.384_20200504_210434-a5d8aa15.pth \

--det-score-thr 0.9 \

--action-score-thr 0.5 \

--label-map tools/data/ava/label_map.txt \

--predict-stepsize 8 \

--output-stepsize 4 \

--output-fps 6

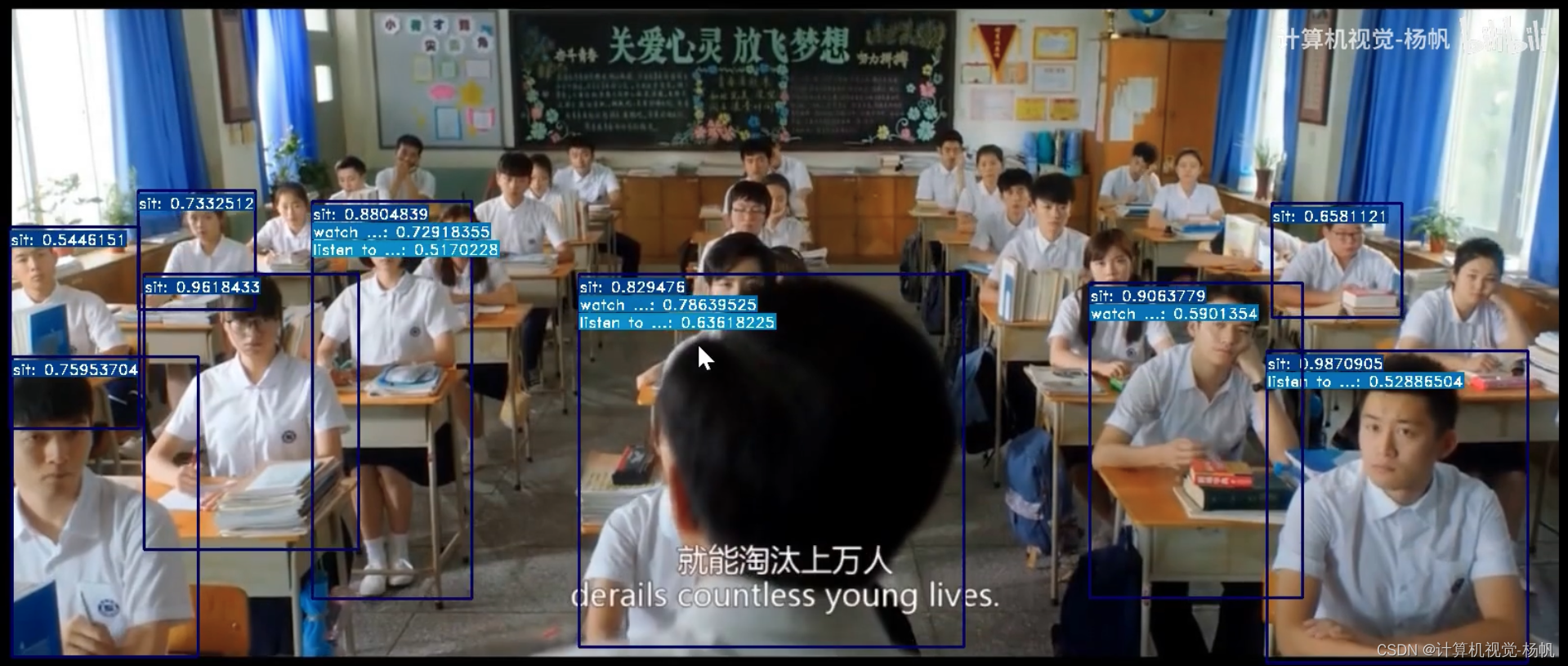

我上传视频后的检测结果

2 制作缩小版ava数据集

wget https://research.google.com/ava/download/ava_v${VERSION}.zip

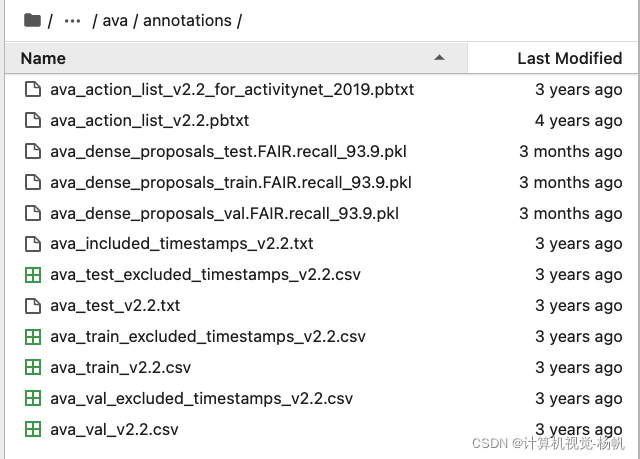

2.1 标注文件

mkdir -p ./data/ava/annotations

mkdir -p ./data/ava/videos

mkdir -p ./data/ava/videos_15min

mkdir -p ./data/ava/rawframes

cd ./data/ava/annotations

wget https://download.openmmlab.com/mmaction/dataset/ava/ava_dense_proposals_train.FAIR.recall_93.9.pkl

wget https://download.openmmlab.com/mmaction/dataset/ava/ava_dense_proposals_val.FAIR.recall_93.9.pkl

wget https://download.openmmlab.com/mmaction/dataset/ava/ava_dense_proposals_test.FAIR.recall_93.9.pkl

#下面这一步需要用到google,所以大家可以先本地下载好,然后上传

wget https://research.google.com/ava/download/ava_v2.2.zip

unzip ava_v2.2.zip

rm ava_v2.2.zip

cd ../../../

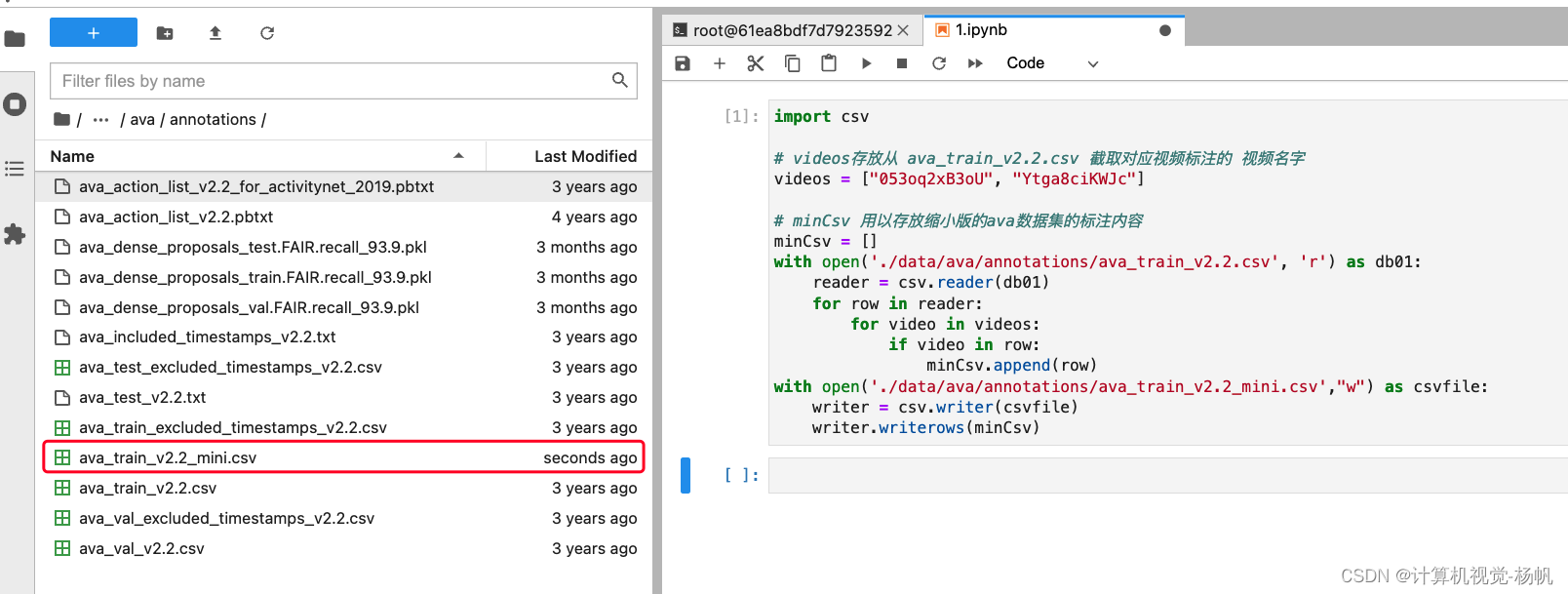

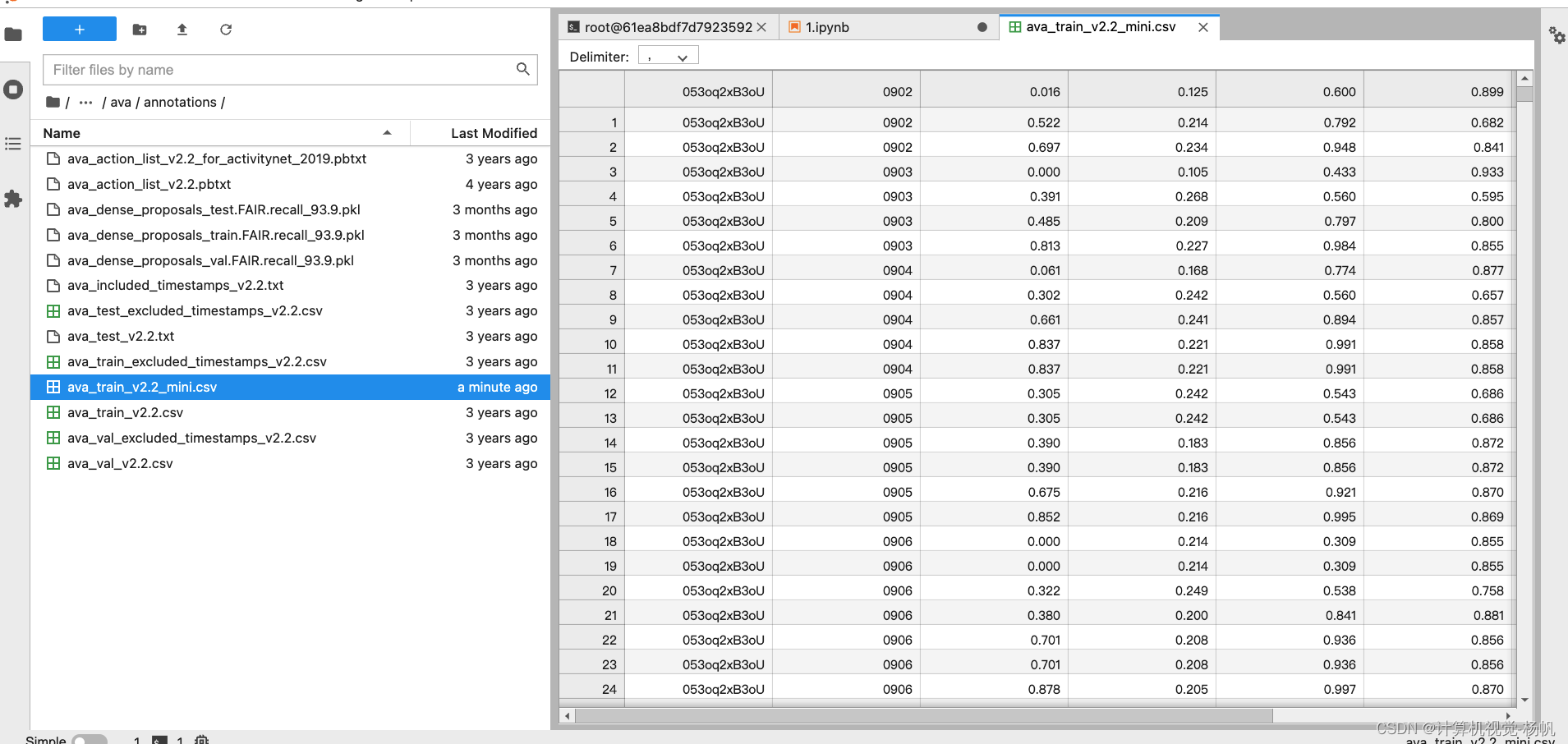

2.2 缩小版的ava数据集

我们只要中ava数据集中的两个:053oq2xB3oU 、Ytga8ciKWJc

在/home/mmaction2/data/ava/下

生成缩小版的ava数据集代码如下:

import csv

# videos存放从 ava_train_v2.2.csv 截取对应视频标注的 视频名字

videos = ["053oq2xB3oU", "Ytga8ciKWJc"]

# minCsv 用以存放缩小版的ava数据集的标注内容

minCsv = []

with open('./data/ava/annotations/ava_train_v2.2.csv', 'r') as db01:

reader = csv.reader(db01)

for row in reader:

for video in videos:

if video in row:

minCsv.append(row)

with open('./data/ava/annotations/ava_train_v2.2_mini.csv',"w") as csvfile:

writer = csv.writer(csvfile)

writer.writerows(minCsv)

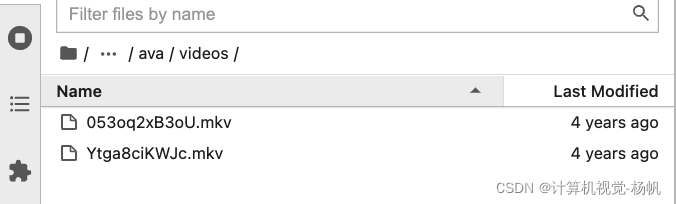

2.3 视频下载

我们只需要2个视频

在/home/mmaction2/data/ava/下

下载命令如下:

cd ./data/ava/videos

wget https://s3.amazonaws.com/ava-dataset/trainval/053oq2xB3oU.mkv

wget https://s3.amazonaws.com/ava-dataset/trainval/Ytga8ciKWJc.mkv

cd ../../../

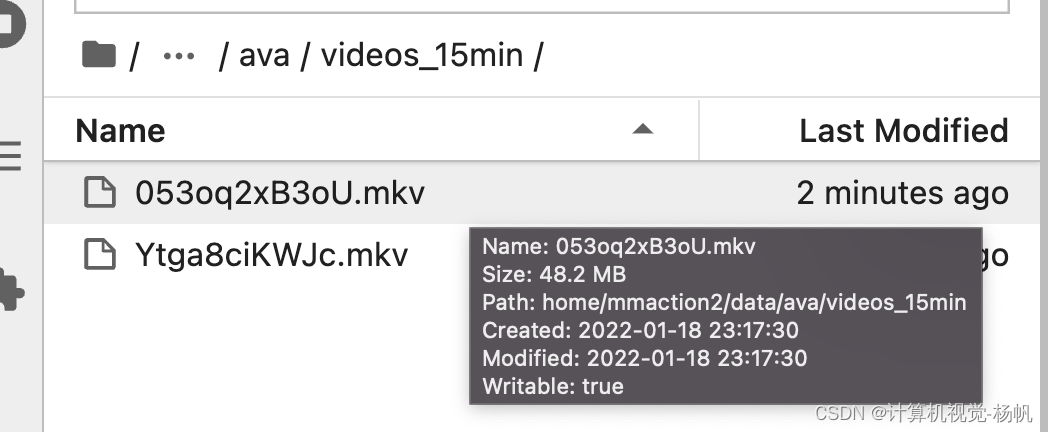

2.4 裁剪15分钟视频

首先安装ffmpeg(安装时间稍长):

conda install x264 ffmpeg -c conda-forge

参考:https://www.jianshu.com/p/3b0291b1ae3b

终端输入:

cd ./tools/data/ava/

bash cut_videos.sh

cd ../../../

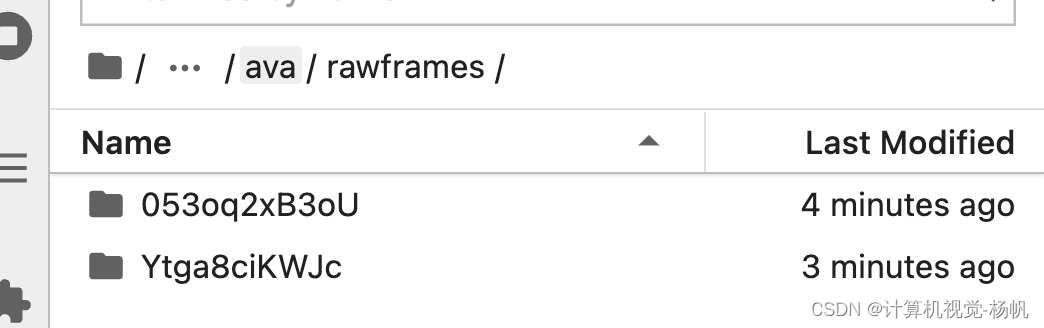

2.5 视频抽帧

终端输入:

cd ./tools/data/ava/

bash extract_rgb_frames_ffmpeg.sh

cd ../../../

走到这里,缩小版ava数据集就已经做好了

最后目录层级如下

mmaction2

├── mmaction

├── tools

├── configs

├── data

│ ├── ava

│ │ ├── annotations

│ │ | ├── ava_action_list_v2.2_for_activitynet_2019.pbtxt

│ │ | ├── ava_action_list_v2.2.pbtxt

│ │ | ├── ava_dense_proposals_test.FAIR.recall_93.9.pkl

│ │ | ├── ava_dense_proposals_train.FAIR.recall_93.9.pkl

│ │ | ├── ava_dense_proposals_val.FAIR.recall_93.9.pkl

│ │ | ├── ava_included_timestamps_v2.2.txt

│ │ | ├── ava_test_excluded_timestamps_v2.2.csv

│ │ | ├── ava_train_excluded_timestamps_v2.2.csv

│ │ | ├── ava_train_v2.2_mini.csv

│ │ | ├── ava_train_v2.2.csv

│ │ | ├── ava_val_excluded_timestamps_v2.2.csv

│ │ | ├── ava_val_v2.2.csv

│ │ ├── videos

│ │ │ ├── 053oq2xB3oU.mkv

│ │ │ ├── Ytga8ciKWJc.mkv

│ │ ├── videos_15min

│ │ │ ├── 053oq2xB3oU.mkv

│ │ │ ├── Ytga8ciKWJc.mkv

│ │ ├── rawframes

│ │ │ ├── 053oq2xB3oU

| │ │ │ ├── img_00001.jpg

| │ │ │ ├── img_00002.jpg

| │ │ │ ├── ...

│ │ │ ├── Ytga8ciKWJc

| │ │ │ ├── img_00001.jpg

| │ │ │ ├── img_00002.jpg

| │ │ │ ├── ...

3 训练

3.1 slowonly

3.1.1 配置文件

在 /mmaction2/configs/detection/ava/下创建 my_slowonly_kinetics_pretrained_r50_4x16x1_20e_ava_rgb.py

内容如下:

# model setting

model = dict(

type='FastRCNN',

backbone=dict(

type='ResNet3dSlowOnly',

depth=50,

pretrained=None,

pretrained2d=False,

lateral=False,

num_stages=4,

conv1_kernel=(1, 7, 7),

conv1_stride_t=1,

pool1_stride_t=1,

spatial_strides=(1, 2, 2, 1)),

roi_head=dict(

type='AVARoIHead',

bbox_roi_extractor=dict(

type='SingleRoIExtractor3D',

roi_layer_type='RoIAlign',

output_size=8,

with_temporal_pool=True),

bbox_head=dict(

type='BBoxHeadAVA',

in_channels=2048,

num_classes=81,

multilabel=True,

dropout_ratio=0.5)),

train_cfg=dict(

rcnn=dict(

assigner=dict(

type='MaxIoUAssignerAVA',

pos_iou_thr=0.9,

neg_iou_thr=0.9,

min_pos_iou=0.9),

sampler=dict(

type='RandomSampler',

num=32,

pos_fraction=1,

neg_pos_ub=-1,

add_gt_as_proposals=True),

pos_weight=1.0,

debug=False)),

test_cfg=dict(rcnn=dict(action_thr=0.002)))

dataset_type = 'AVADataset'

data_root = 'data/ava/rawframes'

anno_root = 'data/ava/annotations'

#ann_file_train = f'{anno_root}/ava_train_v2.1.csv'

ann_file_train = f'{anno_root}/ava_train_v2.2_mini.csv'

#ann_file_val = f'{anno_root}/ava_val_v2.1.csv'

ann_file_val = f'{anno_root}/ava_train_v2.2_mini.csv'

#exclude_file_train = f'{anno_root}/ava_train_excluded_timestamps_v2.1.csv'

#exclude_file_val = f'{anno_root}/ava_val_excluded_timestamps_v2.1.csv'

exclude_file_train = f'{anno_root}/ava_train_excluded_timestamps_v2.2.csv'

exclude_file_val = f'{anno_root}/ava_val_excluded_timestamps_v2.2.csv'

#label_file = f'{anno_root}/ava_action_list_v2.1_for_activitynet_2018.pbtxt'

label_file = f'{anno_root}/ava_action_list_v2.2_for_activitynet_2019.pbtxt'

proposal_file_train = (f'{anno_root}/ava_dense_proposals_train.FAIR.'

'recall_93.9.pkl')

proposal_file_val = f'{anno_root}/ava_dense_proposals_val.FAIR.recall_93.9.pkl'

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_bgr=False)

train_pipeline = [

dict(type='SampleAVAFrames', clip_len=4, frame_interval=16),

dict(type='RawFrameDecode'),

dict(type='RandomRescale', scale_range=(256, 320)),

dict(type='RandomCrop', size=256),

dict(type='Flip', flip_ratio=0.5),

dict(type='Normalize', **img_norm_cfg),

dict(type='FormatShape', input_format='NCTHW', collapse=True),

# Rename is needed to use mmdet detectors

dict(type='Rename', mapping=dict(imgs='img')),

dict(type='ToTensor', keys=['img', 'proposals', 'gt_bboxes', 'gt_labels']),

dict(

type='ToDataContainer',

fields=[

dict(key=['proposals', 'gt_bboxes', 'gt_labels'], stack=False)

]),

dict(

type='Collect',

keys=['img', 'proposals', 'gt_bboxes', 'gt_labels'],

meta_keys=['scores', 'entity_ids'])

]

# The testing is w/o. any cropping / flipping

val_pipeline = [

dict(type='SampleAVAFrames', clip_len=4, frame_interval=16),

dict(type='RawFrameDecode'),

dict(type='Resize', scale=(-1, 256)),

dict(type='Normalize', **img_norm_cfg),

dict(type='FormatShape', input_format='NCTHW', collapse=True),

# Rename is needed to use mmdet detectors

dict(type='Rename', mapping=dict(imgs='img')),

dict(type='ToTensor', keys=['img', 'proposals']),

dict(type='ToDataContainer', fields=[dict(key='proposals', stack=False)]),

dict(

type='Collect',

keys=['img', 'proposals'],

meta_keys=['scores', 'img_shape'],

nested=True)

]

data = dict(

videos_per_gpu=16,

workers_per_gpu=2,

val_dataloader=dict(videos_per_gpu=1),

test_dataloader=dict(videos_per_gpu=1),

train=dict(

type=dataset_type,

ann_file=ann_file_train,

exclude_file=exclude_file_train,

pipeline=train_pipeline,

label_file=label_file,

proposal_file=proposal_file_train,

person_det_score_thr=0.9,

data_prefix=data_root),

val=dict(

type=dataset_type,

ann_file=ann_file_val,

exclude_file=exclude_file_val,

pipeline=val_pipeline,

label_file=label_file,

proposal_file=proposal_file_val,

person_det_score_thr=0.9,

data_prefix=data_root))

data['test'] = data['val']

optimizer = dict(type='SGD', lr=0.2, momentum=0.9, weight_decay=0.00001)

# this lr is used for 8 gpus

optimizer_config = dict(grad_clip=dict(max_norm=40, norm_type=2))

# learning policy

lr_config = dict(

policy='step',

step=[10, 15],

warmup='linear',

warmup_by_epoch=True,

warmup_iters=5,

warmup_ratio=0.1)

total_epochs = 20

checkpoint_config = dict(interval=1)

workflow = [('train', 1)]

evaluation = dict(interval=1, save_best='mAP@0.5IOU')

log_config = dict(

interval=20, hooks=[

dict(type='TextLoggerHook'),

])

dist_params = dict(backend='nccl')

log_level = 'INFO'

work_dir = ('./work_dirs/ava/'

'slowonly_kinetics_pretrained_r50_4x16x1_20e_ava_rgb')

load_from = ('https://download.openmmlab.com/mmaction/recognition/slowonly/'

'slowonly_r50_4x16x1_256e_kinetics400_rgb/'

'slowonly_r50_4x16x1_256e_kinetics400_rgb_20200704-a69556c6.pth')

resume_from = None

find_unused_parameters = False

3.1.2 训练命令

python tools/train.py configs/detection/ava/my_slowonly_kinetics_pretrained_r50_4x16x1_20e_ava_rgb.py --validate

3.2 slowfast

3.2.1 配置文件

# model setting

model = dict(

type='FastRCNN',

backbone=dict(

type='ResNet3dSlowFast',

pretrained=None,

resample_rate=8,

speed_ratio=8,

channel_ratio=8,

slow_pathway=dict(

type='resnet3d',

depth=50,

pretrained=None,

lateral=True,

conv1_kernel=(1, 7, 7),

dilations=(1, 1, 1, 1),

conv1_stride_t=1,

pool1_stride_t=1,

inflate=(0, 0, 1, 1),

spatial_strides=(1, 2, 2, 1)),

fast_pathway=dict(

type='resnet3d',

depth=50,

pretrained=None,

lateral=False,

base_channels=8,

conv1_kernel=(5, 7, 7),

conv1_stride_t=1,

pool1_stride_t=1,

spatial_strides=(1, 2, 2, 1))),

roi_head=dict(

type='AVARoIHead',

bbox_roi_extractor=dict(

type='SingleRoIExtractor3D',

roi_layer_type='RoIAlign',

output_size=8,

with_temporal_pool=True),

bbox_head=dict(

type='BBoxHeadAVA',

in_channels=2304,

num_classes=81,

multilabel=True,

dropout_ratio=0.5)),

train_cfg=dict(

rcnn=dict(

assigner=dict(

type='MaxIoUAssignerAVA',

pos_iou_thr=0.9,

neg_iou_thr=0.9,

min_pos_iou=0.9),

sampler=dict(

type='RandomSampler',

num=32,

pos_fraction=1,

neg_pos_ub=-1,

add_gt_as_proposals=True),

pos_weight=1.0,

debug=False)),

test_cfg=dict(rcnn=dict(action_thr=0.002)))

dataset_type = 'AVADataset'

data_root = 'data/ava/rawframes'

anno_root = 'data/ava/annotations'

#ann_file_train = f'{anno_root}/ava_train_v2.1.csv'

ann_file_train = f'{anno_root}/ava_train_v2.2_mini.csv'

#ann_file_val = f'{anno_root}/ava_val_v2.1.csv'

ann_file_val = f'{anno_root}/ava_train_v2.2_mini.csv'

#exclude_file_train = f'{anno_root}/ava_train_excluded_timestamps_v2.1.csv'

#exclude_file_val = f'{anno_root}/ava_val_excluded_timestamps_v2.1.csv'

exclude_file_train = f'{anno_root}/ava_train_excluded_timestamps_v2.2.csv'

exclude_file_val = f'{anno_root}/ava_val_excluded_timestamps_v2.2.csv'

#label_file = f'{anno_root}/ava_action_list_v2.1_for_activitynet_2018.pbtxt'

label_file = f'{anno_root}/ava_action_list_v2.2_for_activitynet_2019.pbtxt'

proposal_file_train = (f'{anno_root}/ava_dense_proposals_train.FAIR.'

'recall_93.9.pkl')

proposal_file_val = f'{anno_root}/ava_dense_proposals_val.FAIR.recall_93.9.pkl'

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_bgr=False)

train_pipeline = [

dict(type='SampleAVAFrames', clip_len=32, frame_interval=2),

dict(type='RawFrameDecode'),

dict(type='RandomRescale', scale_range=(256, 320)),

dict(type='RandomCrop', size=256),

dict(type='Flip', flip_ratio=0.5),

dict(type='Normalize', **img_norm_cfg),

dict(type='FormatShape', input_format='NCTHW', collapse=True),

# Rename is needed to use mmdet detectors

dict(type='Rename', mapping=dict(imgs='img')),

dict(type='ToTensor', keys=['img', 'proposals', 'gt_bboxes', 'gt_labels']),

dict(

type='ToDataContainer',

fields=[

dict(key=['proposals', 'gt_bboxes', 'gt_labels'], stack=False)

]),

dict(

type='Collect',

keys=['img', 'proposals', 'gt_bboxes', 'gt_labels'],

meta_keys=['scores', 'entity_ids'])

]

# The testing is w/o. any cropping / flipping

val_pipeline = [

dict(type='SampleAVAFrames', clip_len=32, frame_interval=2),

dict(type='RawFrameDecode'),

dict(type='Resize', scale=(-1, 256)),

dict(type='Normalize', **img_norm_cfg),

dict(type='FormatShape', input_format='NCTHW', collapse=True),

# Rename is needed to use mmdet detectors

dict(type='Rename', mapping=dict(imgs='img')),

dict(type='ToTensor', keys=['img', 'proposals']),

dict(type='ToDataContainer', fields=[dict(key='proposals', stack=False)]),

dict(

type='Collect',

keys=['img', 'proposals'],

meta_keys=['scores', 'img_shape'],

nested=True)

]

data = dict(

#videos_per_gpu=9,

#workers_per_gpu=2,

videos_per_gpu=5,

workers_per_gpu=2,

val_dataloader=dict(videos_per_gpu=1),

test_dataloader=dict(videos_per_gpu=1),

train=dict(

type=dataset_type,

ann_file=ann_file_train,

exclude_file=exclude_file_train,

pipeline=train_pipeline,

label_file=label_file,

proposal_file=proposal_file_train,

person_det_score_thr=0.9,

data_prefix=data_root),

val=dict(

type=dataset_type,

ann_file=ann_file_val,

exclude_file=exclude_file_val,

pipeline=val_pipeline,

label_file=label_file,

proposal_file=proposal_file_val,

person_det_score_thr=0.9,

data_prefix=data_root))

data['test'] = data['val']

optimizer = dict(type='SGD', lr=0.1125, momentum=0.9, weight_decay=0.00001)

# this lr is used for 8 gpus

optimizer_config = dict(grad_clip=dict(max_norm=40, norm_type=2))

# learning policy

lr_config = dict(

policy='step',

step=[10, 15],

warmup='linear',

warmup_by_epoch=True,

warmup_iters=5,

warmup_ratio=0.1)

total_epochs = 20

checkpoint_config = dict(interval=1)

workflow = [('train', 1)]

evaluation = dict(interval=1, save_best='mAP@0.5IOU')

log_config = dict(

interval=20, hooks=[

dict(type='TextLoggerHook'),

])

dist_params = dict(backend='nccl')

log_level = 'INFO'

work_dir = ('./work_dirs/ava/'

'slowfast_kinetics_pretrained_r50_4x16x1_20e_ava_rgb')

load_from = ('https://download.openmmlab.com/mmaction/recognition/slowfast/'

'slowfast_r50_4x16x1_256e_kinetics400_rgb/'

'slowfast_r50_4x16x1_256e_kinetics400_rgb_20200704-bcde7ed7.pth')

resume_from = None

find_unused_parameters = False

3.2.2 训练命令

python tools/train.py configs/detection/ava/my_slowfast_kinetics_pretrained_r50_4x16x1_20e_ava_rgb.py --validate

魔乐社区(Modelers.cn) 是一个中立、公益的人工智能社区,提供人工智能工具、模型、数据的托管、展示与应用协同服务,为人工智能开发及爱好者搭建开放的学习交流平台。社区通过理事会方式运作,由全产业链共同建设、共同运营、共同享有,推动国产AI生态繁荣发展。

更多推荐

已为社区贡献24条内容

已为社区贡献24条内容

所有评论(0)