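Deploying ELK Log Collection (9.0.0) on Rocky Linux 9.6

This article describes how to deploy the ELK (Elasticsearch, Logstash, Kibana) log analysis stack to manage logs across multiple environments. It covers: 1) an overview of the ELK architecture and what each of the three core components does; 2) system preparation, including Java setup and system tuning; 3) step-by-step deployment, covering Elasticsearch cluster configuration, the Logstash processing pipeline, and the Kibana visualization platform; 4) common troubleshooting methods and log rotation configuration.

Summary

Day-to-day work spans too many environments (traditional hosts, Docker, Kubernetes), and finding a log by logging in to each server by hand takes too long. ELK log collection replaces that manual search with one central place to query logs.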

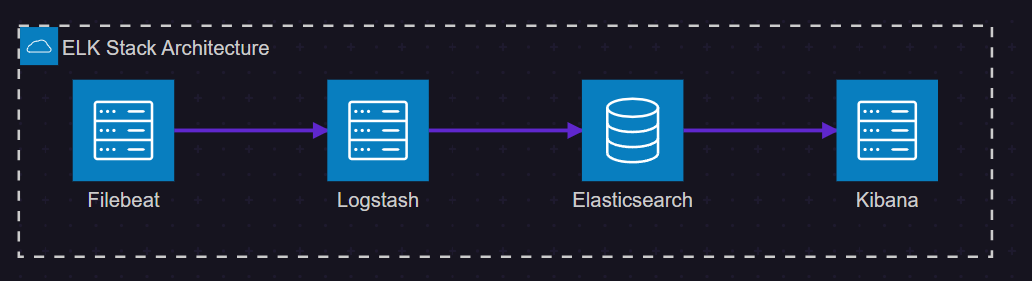

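1. ELK Architecture Overview

1.1 Core Components

ELK is a log analysis solution built from three open-source projects:

Elasticsearch: a distributed search and analytics engine that stores and indexes log data

Logstash: a data processing pipeline that collects, transforms, and ships log data

Kibana: a visualization platform that provides search and charting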

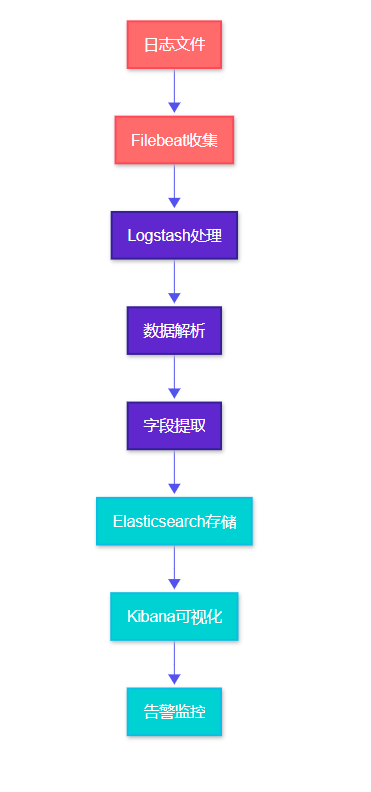

1.2 Data Flow

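2. Environment Preparation and Basic Configuration

2.1 System Requirements

Before deploying, make sure the system meets the requirements for running ELK: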

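| Component | Minimum memory | Recommended memory | Disk space | Runtime |
| --- | --- | --- | --- | --- |
| Elasticsearch | 2GB | 8GB | 50GB+ | JDK (bundled with the 9.0.0 archive) |
| Logstash | 1GB | 4GB | 10GB | JDK (bundled with the 9.0.0 archive) |
| Kibana | 1GB | 2GB | 5GB | Node.js (bundled with the 9.0.0 archive) |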

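2.2 Java Environment Setup

# Optional: Elasticsearch and Logstash 9.0.0 run on their bundled JDK by default,
# so a system-wide JDK is only needed for other Java tools.

# Unpack (the archive extracts to /usr/local/jdk-11.0.2)
tar zxvf openjdk-11.0.2_linux-x64_bin.tar.gz -C /usr/local/
mv /usr/local/jdk-11.0.2 /usr/local/java11

# Edit the environment variables
nano /etc/profile

# Add these lines to /etc/profile to set JAVA_HOME and extend PATH
export JAVA_HOME=/usr/local/java11
export PATH=$JAVA_HOME/bin:$PATH

# Apply the change
source /etc/profile

# Verify the JDK
java -version
javac -version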

2.3 System Tuning

# Tune kernel parameters for Elasticsearch
echo 'vm.max_map_count=262144' | sudo tee -a /etc/sysctl.conf
echo 'fs.file-max=65536' | sudo tee -a /etc/sysctl.conf

# Set per-user resource limits
echo 'elasticsearch soft nofile 65536' | sudo tee -a /etc/security/limits.conf
echo 'elasticsearch hard nofile 65536' | sudo tee -a /etc/security/limits.conf
echo 'elasticsearch soft nproc 4096' | sudo tee -a /etc/security/limits.conf
echo 'elasticsearch hard nproc 4096' | sudo tee -a /etc/security/limits.conf

# Apply the kernel settings
sudo sysctl -p

These settings matter for running Elasticsearch reliably. vm.max_map_count in particular sets the maximum number of memory-mapped areas a process may have; Elasticsearch memory-maps its index files and expects this value to be at least 262144.
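Before starting Elasticsearch it can help to confirm that the kernel values actually took effect. The following is a minimal sketch rather than part of the original setup: the helper names meets_minimum and check_sysctl are made up for illustration, and the thresholds are simply the values written to /etc/sysctl.conf above.

```shell
#!/bin/sh
# Succeeds when the current value is at least the required value.
meets_minimum() {
    current="$1"
    required="$2"
    [ "$current" -ge "$required" ]
}

# Print OK or LOW for one kernel parameter (hypothetical helper).
check_sysctl() {
    name="$1"
    required="$2"
    current="$(sysctl -n "$name" 2>/dev/null)" || return 2
    if meets_minimum "$current" "$required"; then
        echo "OK   $name=$current (need >= $required)"
    else
        echo "LOW  $name=$current (need >= $required)"
    fi
}

# Only query the kernel when sysctl is available on this machine.
if command -v sysctl >/dev/null 2>&1; then
    check_sysctl vm.max_map_count 262144 || true
    check_sysctl fs.file-max 65536 || true
fi
```

A LOW line means the value in /etc/sysctl.conf has not been applied yet, in which case rerunning sudo sysctl -p is the first thing to try.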

3. Elasticsearch Cluster Deployment

3.1 Installation and Basic Setup

# Download and install Elasticsearch
wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-9.0.0-linux-x86_64.tar.gz
tar -xzf elasticsearch-9.0.0-linux-x86_64.tar.gz
sudo mv elasticsearch-9.0.0 /opt/elasticsearch

# Create a dedicated user
sudo useradd -r -s /bin/false elasticsearch
sudo chown -R elasticsearch:elasticsearch /opt/elasticsearch

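3.2 Elasticsearch Configuration File

# Edit the commonly changed settings
nano /opt/elasticsearch/config/elasticsearch.yml

cluster.name: personal-monitoring
node.name: node-1
path.data: /opt/elasticsearch/data
path.logs: /opt/elasticsearch/logs

# Network
network.host: 0.0.0.0
http.port: 9200
transport.port: 9300

# Cluster: cluster.initial_master_nodes must not be set together with
# discovery.type: single-node, so it is left commented out
discovery.type: single-node
#cluster.initial_master_nodes: ["node-1"]

# Security
xpack.security.enabled: false
xpack.security.enrollment.enabled: false
xpack.security.http.ssl.enabled: false
xpack.security.transport.ssl.enabled: false

# Performance
indices.memory.index_buffer_size: 10%
indices.memory.min_index_buffer_size: 48mb

This configuration targets a single-node deployment and turns off the X-Pack security features to simplify the initial setup. With security off, Elasticsearch serves plain http without authentication, whereas the password reset in section 3.4, the https:// URLs, and the Kibana service account token used later in this article all require security and HTTP TLS to be enabled. Enable security in production.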

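3.3 Startup Script

#!/bin/bash
# /opt/elasticsearch/bin/startelasticsearch.sh

# Start Elasticsearch as a daemon with a 2GB JVM heap.
# sudo resets the environment by default, so the variable is passed through env.
sudo -u elasticsearch env ES_JAVA_OPTS="-Xms2g -Xmx2g" /opt/elasticsearch/bin/elasticsearch -d

# Wait for the service to start
sleep 30

# Check cluster health. -k skips certificate verification and -u prompts for the
# elastic password; with security disabled as in section 3.2, use http:// and drop both.
curl -k -u elastic "https://192.168.0.70:9200/_cluster/health?pretty"

Size the JVM heap at no more than 50% of system memory, and keep it under 32GB so the JVM can keep using compressed object pointers. The -d flag runs Elasticsearch as a daemon.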
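As a worked example of the sizing rule above, the sketch below derives a heap size from the host's total memory. The function name suggest_heap_mb is hypothetical, and the 31GB cap is an assumption chosen to stay safely under the 32GB limit; adjust it for your own hosts.

```shell
#!/bin/sh
# Suggest a heap size in MB for a host with the given total memory in MB:
# half of the memory, capped at 31GB (assumed cap, see the text above).
suggest_heap_mb() {
    total_mb="$1"
    cap_mb=$((31 * 1024))
    half_mb=$((total_mb / 2))
    if [ "$half_mb" -gt "$cap_mb" ]; then
        echo "$cap_mb"
    else
        echo "$half_mb"
    fi
}

# Example: read MemTotal (in kB) from /proc/meminfo when it is available.
if [ -r /proc/meminfo ]; then
    total_kb="$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)"
    if [ -n "$total_kb" ]; then
        heap_mb="$(suggest_heap_mb $((total_kb / 1024)))"
        echo "ES_JAVA_OPTS=\"-Xms${heap_mb}m -Xmx${heap_mb}m\""
    fi
fi
```

On a host with 4GB of memory this yields 2048, which matches the -Xms2g -Xmx2g used in the startup script.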

3.4 Change the Password

cd /opt/elasticsearch/bin/

./elasticsearch-reset-password -u elastic -i

4. Logstash Data Processing Pipeline

4.1 Installing Logstash

#!/bin/bash

# Download and install Logstash
wget https://artifacts.elastic.co/downloads/logstash/logstash-9.0.0-linux-x86_64.tar.gz
tar -xzf logstash-9.0.0-linux-x86_64.tar.gz
sudo mv logstash-9.0.0 /opt/logstash

# Create a dedicated user
sudo useradd -r -s /bin/false logstash
sudo chown -R logstash:logstash /opt/logstash

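4.2 Log Processing Configuration

# /opt/logstash/config/logstash-sample.conf
# Sample Logstash configuration for creating a simple
# Beats -> Logstash -> Elasticsearch pipeline.

input {
  #beats {
  #  port => 5044
  #}

  # Newline-delimited JSON over TCP
  tcp {
    port => 5044
    codec => "json_lines"
  }

  # Read log files directly. The output builds the index name from appname,
  # so each file input sets that field ("elasticsearch" is an example value).
  file {
    path => "/opt/elasticsearch/logs/*.log"
    start_position => "beginning"
    type => "es-log"
    add_field => { "appname" => "elasticsearch" }
  }

  file {
    path => "/opt/elasticsearch/logs/*.log.*"
    start_position => "beginning"
    type => "es-log"
    add_field => { "appname" => "elasticsearch" }
  }

  file {
    path => "/opt/elasticsearch/logs/*.error.log"
    start_position => "beginning"
    type => "es-error"
    add_field => { "appname" => "elasticsearch" }
  }
}

filter {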

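  # Process Elasticsearch logs
  if [type] == "es-log" or [type] == "es-error" {
    # Parse the log line with grok; lines that do not match this layout
    # are tagged _grokparsefailure
    grok {
      match => { "message" => "%{TIMESTAMP_ISO8601:timestamp} %{LOGLEVEL:loglevel} %{DATA:thread} --- \[%{DATA:logger}\] \: %{GREEDYDATA:message}" }
      # Replace the original message instead of appending a second value to it
      overwrite => [ "message" ]
    }

    # Parse the timestamp
    date {
      match => [ "timestamp", "ISO8601" ]
      target => "@timestamp"
    }
  }

  # Process Nginx access logs (disabled as a whole block; NGINXACCESS is not a
  # built-in grok pattern and must be defined as a custom pattern before enabling)
  #if [type] == "nginx-access" {
  #  grok {
  #    match => {
  #      "message" => "%{NGINXACCESS}"
  #    }
  #  }
  #
  #  # Parse the timestamp
  #  date {
  #    match => [ "timestamp", "dd/MMM/yyyy:HH:mm:ss Z" ]
  #  }
  #
  #  # Convert field types
  #  mutate {
  #    convert => {
  #      "response" => "integer"
  #      "bytes" => "integer"
  #      "responsetime" => "float"
  #    }
  #  }
  #
  #  # Add geolocation data
  #  geoip {
  #    source => "clientip"
  #    target => "geoip"
  #  }
  #}

  # Process application logs
  if [type] == "application" {
    # Parse JSON-formatted logs
    json {
      source => "message"
    }

    # Normalize the log level to upper case
    if [level] {
      mutate {
        uppercase => [ "level" ]
      }
    }
  }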

}

output {
  # Every event goes to an index named after its appname field and the event date.
  # The es-error, es-log, error, and info types and the default case all use the
  # same settings, so one output covers them. If a type later needs its own index,
  # wrap a separate elasticsearch block in an if [type] == "..." conditional.
  # This assumes every event carries an appname field.
  elasticsearch {
    hosts => ["https://192.168.0.70:9200"]
    index => "%{[appname]}-%{+YYYY.MM.dd}"
    user => "elastic"
    password => "@#SDzs0325./"
    ssl_verification_mode => "none"
  }

  # Debug output
  stdout {
    codec => rubydebug
  }
}

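This configuration file defines the complete processing pipeline: the input stage receives data from several sources, the filter stage parses and transforms it, and the output stage sends the result to Elasticsearch.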
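To make the data flow concrete, the sketch below builds one JSON line of the kind the tcp input on port 5044 accepts and shows the index name the output would derive from it. The helpers make_log_line and index_name and the sample values are hypothetical. Two facts are worth keeping in mind: Logstash formats %{+YYYY.MM.dd} from the event's @timestamp in UTC, and Elasticsearch index names must be lowercase, so appname should be lowercase too.

```shell
#!/bin/sh
# Build one JSON log line with the appname, type and message fields.
# Assumption: the values contain no double quotes, backslashes or newlines,
# so this sketch does no JSON escaping.
make_log_line() {
    printf '{"appname":"%s","type":"%s","message":"%s"}\n' "$1" "$2" "$3"
}

# Compute the index name that "%{[appname]}-%{+YYYY.MM.dd}" expands to
# for a given appname and a UTC date written as YYYY-MM-DD.
index_name() {
    printf '%s-%s\n' "$1" "$(printf '%s' "$2" | sed 's/-/./g')"
}

make_log_line "orders" "info" "service started"
index_name "orders" "2025-05-01"
```

Piping the output of make_log_line into a TCP client such as nc pointed at 192.168.0.70:5044 would deliver the event to the pipeline, assuming such a client is installed.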

4.3 Start Logstash

sudo -u logstash /opt/logstash/bin/logstash -f /opt/logstash/config/logstash-sample.conf &

4.4 Running Under systemd

sudo tee /etc/systemd/system/logstash.service << 'EOF'
[Unit]
Description=Logstash
After=network.target

[Service]
Type=simple
User=logstash
Group=logstash
ExecStart=/opt/logstash/bin/logstash -f /opt/logstash/config/logstash-sample.conf
Restart=always
RestartSec=10
WorkingDirectory=/opt/logstash
Environment=LS_HOME=/opt/logstash
Environment=LS_SETTINGS_DIR=/opt/logstash/config
# Java options, if needed. Logstash runs on its bundled JDK unless
# LS_JAVA_HOME is set; uncomment only to override it.
#Environment=LS_JAVA_HOME=/path/to/jdk
Environment="LS_JAVA_OPTS=-Xms256m -Xmx256m"
# Resource limits
LimitNOFILE=65536
LimitNPROC=4096

[Install]
WantedBy=multi-user.target
EOF

Then enable and start the service:

sudo systemctl daemon-reload

sudo systemctl enable logstash

sudo systemctl start logstash

sudo systemctl status logstash

5. Setting Up the Kibana Visualization Platform

5.1 Installing Kibana

#!/bin/bash

# Download and install Kibana
wget https://artifacts.elastic.co/downloads/kibana/kibana-9.0.0-linux-x86_64.tar.gz
tar -xzf kibana-9.0.0-linux-x86_64.tar.gz
sudo mv kibana-9.0.0 /opt/kibana

# Create a dedicated user
sudo useradd -r -s /bin/false kibana
sudo chown -R kibana:kibana /opt/kibana

5.2 Kibana Configuration File

nano /opt/kibana/config/kibana.yml

server.port: 5601
server.host: "0.0.0.0"
server.name: "kibana"

# Elasticsearch connection
elasticsearch.hosts: ["https://192.168.0.70:9200"]
elasticsearch.requestTimeout: 30000
elasticsearch.shardTimeout: 30000

# Logging (the file appender only takes effect once the root logger references it)
logging.appenders.file.type: file
logging.appenders.file.fileName: /opt/kibana/logs/kibana.log
logging.appenders.file.layout.type: json
logging.root.appenders: [default, file]

# Performance
server.maxPayload: 1048576
elasticsearch.pingTimeout: 1500

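5.3 Create a Token for Kibana to Connect to Elasticsearch and Update kibana.yml

# Save the certificate presented by Elasticsearch
echo | openssl s_client -connect 192.168.0.70:9200 -showcerts 2>/dev/null | sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p' > es_server_ca.pem

# Create the service account token
curl -X POST -u elastic:'@#SDzs0325./' --cacert ./es_server_ca.pem "https://192.168.0.70:9200/_security/service/elastic/kibana/credential/token/my-kibana-token"

# Add the token value from the response to kibana.yml
nano /opt/kibana/config/kibana.yml

# If Kibana rejects the self-signed certificate, elasticsearch.ssl.certificateAuthorities
# can point at a copy of es_server_ca.pem that the kibana user can read.
elasticsearch.serviceAccountToken: "AAEAAWVsYXN0aWMva2liYW5hL215LWtpYmFuYS10b2tlbjpDd2k3VFh4NFN3QzRZVnBwWFJLaHZB"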
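The token value has to be copied out of the JSON response by hand. As a convenience, the sketch below pulls it out with sed. extract_token_value is a hypothetical helper and the sample response is made up; it assumes the response carries the token in a "value" field on a single line, so check the actual output of the curl call before relying on it.

```shell
#!/bin/sh
# Print the token value from a create-token response read on stdin.
# Assumption: the response contains a "value": "..." pair on one line.
extract_token_value() {
    sed -n 's/.*"value"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Made-up response used only to demonstrate the helper.
sample='{"created":true,"token":{"name":"my-kibana-token","value":"EXAMPLE_TOKEN_VALUE"}}'
printf '%s\n' "$sample" | extract_token_value
```

In practice the curl command that creates the token would be piped into extract_token_value, and the printed string pasted into elasticsearch.serviceAccountToken.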

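5.4 Startup Script

nano /opt/kibana/bin/kibanastart.sh

#!/bin/bash

# Start Kibana in the background with a 2048MB Node.js old-space limit.
# sudo resets the environment by default, so the variable is passed through env.
sudo -u kibana env NODE_OPTIONS="--max-old-space-size=2048" /opt/kibana/bin/kibana &

# Wait for the service to start
echo "Waiting for Kibana to start..."
sleep 60

# Check that the service responds
curl -I http://192.168.0.70:5601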
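A fixed sleep either waits longer than needed or not long enough. One alternative, sketched below, is to poll until the service answers. wait_for is a hypothetical helper, not part of Kibana, and the commented usage line assumes curl is installed.

```shell
#!/bin/sh
# Run a command until it succeeds: wait_for ATTEMPTS DELAY_SECONDS COMMAND...
# Returns 0 as soon as the command succeeds, 1 if every attempt fails.
wait_for() {
    attempts="$1"
    delay="$2"
    shift 2
    i=1
    while [ "$i" -le "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        # Pause between attempts, but not after the last one.
        if [ "$i" -lt "$attempts" ]; then
            sleep "$delay"
        fi
        i=$((i + 1))
    done
    return 1
}

# Usage sketch (not executed here): poll Kibana every 5 seconds, up to 24 times.
# wait_for 24 5 curl -sf -o /dev/null http://192.168.0.70:5601 && echo "Kibana is up"
```

The -f option makes curl fail on HTTP error responses, so the loop keeps waiting while Kibana still answers with an error status during startup.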

5.5 Create a systemd Service

sudo nano /etc/systemd/system/kibana.service

[Unit]
Description=Kibana
After=network.target

[Service]
Type=simple
User=kibana
Environment=NODE_OPTIONS="--max-old-space-size=2048"
WorkingDirectory=/opt/kibana
ExecStart=/opt/kibana/bin/kibana
Restart=always
RestartSec=10

[Install]
WantedBy=multi-user.target

Then enable and start the service:

sudo systemctl daemon-reload

sudo systemctl enable kibana

sudo systemctl start kibana

sudo systemctl status kibana

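6. Troubleshooting and Maintenance

6.1 Diagnosing Common Problems

| Problem | Symptom | Likely cause | Fix |
| --- | --- | --- | --- |
| Out of memory | Service restarts frequently | JVM heap set too small | Adjust ES_JAVA_OPTS |
| Disk space | Index creation fails | Disk is full | Delete old indices or add capacity |
| Network connectivity | Components cannot reach each other | Firewall blocking traffic | Check the port configuration |
| Configuration error | Service fails to start | Syntax error in a configuration file | Validate the YAML syntax |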
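For the disk space row, it helps to know how close a node is to Elasticsearch's default disk watermarks: at 85% (low) no new shards are allocated to the node, at 90% (high) Elasticsearch tries to move shards away, and at 95% (flood stage) affected indices are made read-only. The sketch below classifies a usage percentage against those defaults. disk_status is a hypothetical helper, and treating "at or above" the threshold as reached is a simplification.

```shell
#!/bin/sh
# Classify a disk usage percentage against Elasticsearch's default
# disk watermarks: low 85%, high 90%, flood stage 95%.
disk_status() {
    used="$1"
    if [ "$used" -ge 95 ]; then
        echo "flood-stage"
    elif [ "$used" -ge 90 ]; then
        echo "high"
    elif [ "$used" -ge 85 ]; then
        echo "low"
    else
        echo "ok"
    fi
}

# Example: check the filesystem that holds path.data, if it exists here.
# df -P prints the use percentage in column 5 of the second line.
data_path="/opt/elasticsearch/data"
if [ -d "$data_path" ]; then
    used="$(df -P "$data_path" | awk 'NR==2 {gsub("%", "", $5); print $5}')"
    echo "$data_path: ${used}% used, status: $(disk_status "$used")"
fi
```

Anything other than ok is a signal to delete old indices or add capacity before index creation starts to fail.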

6.2 Log Rotation Configuration

# /etc/logrotate.d/elklogs

/opt/elasticsearch/logs/*.log {
    daily
    missingok
    rotate 30
    compress
    delaycompress
    notifempty
    # Elasticsearch keeps its log files open and does not reopen them on a signal,
    # so truncate in place instead of renaming the file and signalling the process
    copytruncate
}

/opt/logstash/logs/*.log {
    daily
    missingok
    rotate 14
    compress
    delaycompress
    notifempty
    # Same reasoning as above: Logstash keeps writing to the open file
    copytruncate
}
