Mini-R1: Reproducing the Deepseek R1 "Aha Moment", an RL Tutorial


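Background

The release of Deepseek R1 shocked the industry. Why? DeepSeek-R1 is an open model that rivals OpenAI's o1 in complex reasoning tasks. It was trained with Group Relative Policy Optimization (GRPO) and a multi-stage approach focused on reinforcement learning (RL). The team released not only the model but also a research paper describing how they built it.

In the paper, they describe an "aha moment" that occurred while training the model with pure RL. During this phase, DeepSeek-R1-Zero (the first test of DeepSeek-R1) learned to allocate more thinking time to a problem by reevaluating its initial approach, without any human feedback or data describing how to do it. They describe this as an "aha moment" because:

This behavior is not only a testament to the model's growing reasoning abilities but also a captivating example of how reinforcement learning can lead to unexpected and sophisticated outcomes.

In this blog post, we want to recreate the small "aha moment" of DeepSeek-R1 using Group Relative Policy Optimization (GRPO) and the Countdown Game. We will train an open model with reinforcement learning, trying to teach it self-verification and search abilities all on its own to solve the Countdown Game. The Countdown Game is a numbers puzzle in which players use a set of randomly drawn numbers and basic arithmetic operations (+, -, ×, ÷) to reach a target number or get as close to it as possible.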

Target Number: 952

Available Numbers: 25, 50, 75, 100, 3, 6

((100 + 6) × 3 × 75 - 50) / 25 = 952
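To make the rules of the game concrete, here is a minimal brute-force sketch of a Countdown solver. It assumes the variant used later in this post, where every number must be used exactly once. The `solve` and `_search` helpers are hypothetical names for illustration and are not part of the tutorial code.

```python
from fractions import Fraction
from itertools import combinations


def solve(numbers, target):
    """Return an expression using every number exactly once that equals target, or None."""
    items = [(Fraction(n), str(n)) for n in numbers]
    return _search(items, Fraction(target))


def _search(items, target):
    # Base case: a single value is left, so all numbers have been combined.
    if len(items) == 1:
        value, expr = items[0]
        return expr if value == target else None
    # Pick any two values, combine them with each operation, and recurse.
    for i, j in combinations(range(len(items)), 2):
        (a, ea), (b, eb) = items[i], items[j]
        rest = [items[k] for k in range(len(items)) if k not in (i, j)]
        candidates = [
            (a + b, f"({ea} + {eb})"),
            (a - b, f"({ea} - {eb})"),
            (b - a, f"({eb} - {ea})"),
            (a * b, f"({ea} * {eb})"),
        ]
        if b != 0:
            candidates.append((a / b, f"({ea} / {eb})"))
        if a != 0:
            candidates.append((b / a, f"({eb} / {ea})"))
        for value, expr in candidates:
            found = _search(rest + [(value, expr)], target)
            if found is not None:
                return found
    return None


print(solve([14, 70, 80], 85))
```

Exact fractions avoid floating-point rounding when a division appears in the middle of an expression. The search is exponential, which is fine for the 3 to 4 numbers used in this post but slow for larger sets.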

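This blog post includes interactive code that you can run in a Jupyter Notebook to train a model using GRPO and Q-Lora. This is a great way to learn how to use TRL and GRPO, but it is very slow and requires a lot of compute. Additionally, I added a script and instructions for running the training on a node with multiple GPUs or on a SLURM cluster.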

- Setup the development environment

- Generate training samples with reasoning prefix from the Countdown Game

- Train the model using GRPO (Educational part)

- Distributed Training example for GRPO using Deepspeed and vLLM

- Results and Training Observations

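Note: This blog post is inspired by Jiayi Pan, who initially explored the idea and validated it with a small model.

But before we start, let's take a look at Group Relative Policy Optimization (GRPO) and understand how it works.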

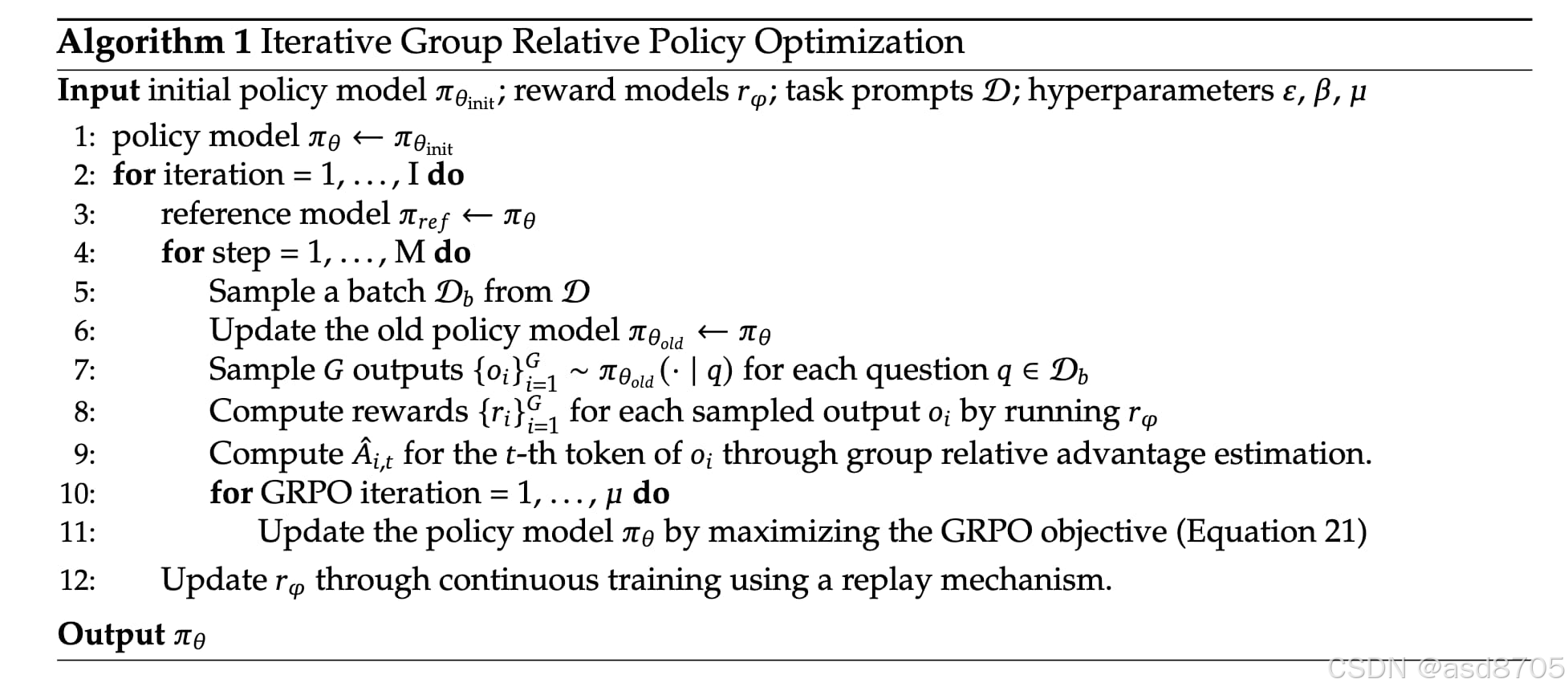

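Group Relative Policy Optimization (GRPO)

Group Relative Policy Optimization (GRPO) is a reinforcement learning algorithm for improving the reasoning capabilities of LLMs. It was introduced in the DeepSeekMath paper in the context of mathematical reasoning. GRPO modifies traditional Proximal Policy Optimization (PPO) by eliminating the need for a value function model. Instead, it estimates baselines from group scores, which reduces memory usage and computational overhead. GRPO, now also used by the Qwen team, can be used with rule-based or binary rewards as well as general reward models to improve models on helpfulness.

Sampling: Generate multiple outputs for each prompt using the current policy.

Reward scoring: Each generation is scored with a reward function, which can be rule-based or outcome-based.

Advantage calculation: The average reward of the generated outputs is used as a baseline. The advantage of each solution within the group is then computed relative to this baseline. The reward is normalized within the group.

Policy optimization: The policy tries to maximize the GRPO objective, which includes the calculated advantages and a KL divergence term. This differs from PPO, which implements the KL term within the reward.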
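The advantage calculation step can be sketched in a few lines. This is a minimal illustration, assuming the advantage is the reward minus the group mean, divided by the group standard deviation. The function name `group_relative_advantages` and the `eps` value are assumptions for this sketch; real implementations differ in details such as sample versus population standard deviation.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-4):
    """Normalize the rewards of one group (all completions for the same prompt)."""
    baseline = mean(rewards)   # the group mean replaces a learned value function
    spread = pstdev(rewards)   # population standard deviation of the group
    # eps (assumed value) avoids division by zero when all rewards are equal
    return [(r - baseline) / (spread + eps) for r in rewards]


# Four completions for one prompt: two solved the task, two did not.
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
```

Completions that score above the group average get a positive advantage and are reinforced. If every completion in a group receives the same reward, all advantages are zero and the group provides no learning signal.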

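1. Setup the development environment

Our first step is to install the Hugging Face libraries and PyTorch, including vllm, trl, transformers and datasets. If you haven't heard of trl yet, don't worry. It is a new library built on top of transformers and datasets that makes it easier to fine-tune, apply RLHF to, and align open LLMs.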

# Install Pytorch & other libraries, make sure to match your GPU driver version
%pip install "torch==2.5.1" tensorboard "setuptools<71.0.0" --index-url https://download.pytorch.org/whl/cu121

# Install flash-attn
%pip install flash-attn

# Install Hugging Face libraries
%pip install --upgrade \
  "transformers==4.48.1" \
  "datasets==3.1.0" \
  "accelerate==1.3.0" \
  "hf-transfer==0.1.9" \
  "deepspeed==0.15.4" \
  "trl==0.14.0"

# install vLLM
%pip install "vllm==0.7.0"

## IMPORTANT: If you want to run the notebook and the interactive cells you also need to install the following libraries:
# But first read the blog post and then decide, as they might conflict with the libraries for distributed training.
# %pip install "peft==0.14.0" "bitsandbytes==0.45.0"

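Note: You may need to restart the kernel to use the updated packages.

We will use the Hugging Face Hub as a remote model versioning service. This means we will automatically push our model, logs and information to the Hub during training. You must register on Hugging Face for this. Once you have an account, we will use the login utility from the huggingface_hub package to log in to our account and store our token (access key) on disk.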

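from huggingface_hub import login

login(token="", add_to_git_credential=True) # ADD YOUR TOKEN HERE

2. Generate training samples with reasoning prefix from the Countdown Game

We are going to use the Jiayi-Pan/Countdown-Tasks-3to4 dataset, which contains samples with 3 to 4 numbers and solutions.

As the model, we are going to use Qwen/Qwen2.5-3B-Instruct, a 3B-parameter instruction-tuned model. This makes it easier to showcase the "aha moment", as it already follows the prompt format. But you can use the base version of Qwen or other models as well. Jiayi-Pan found that a model needs a certain quality to be able to learn the reasoning process, starting at more than 1.5B parameters.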

from transformers import AutoTokenizer
from datasets import load_dataset

# Load dataset from Hugging Face Hub
dataset_id = "Jiayi-Pan/Countdown-Tasks-3to4"
dataset = load_dataset(dataset_id, split="train")
# select a random subset of 50k samples
dataset = dataset.shuffle(seed=42).select(range(50000))

# Load tokenizer from Hugging Face Hub to format the dataset to our "r1" prompt
tokenizer = AutoTokenizer.from_pretrained("Qwen/Qwen2.5-3B-Instruct")

# generate r1 prompt with a prefix for the model to already start with the thinking process
def generate_r1_prompt(numbers, target):
    r1_prefix = [{
        "role": "system",
        "content": "You are a helpful assistant. You first thinks about the reasoning process in the mind and then provides the user with the answer."
      },
      {
        "role": "user",
        "content": f"Using the numbers {numbers}, create an equation that equals {target}. You can use basic arithmetic operations (+, -, *, /) and each number can only be used once. Show your work in <think> </think> tags. And return the final equation and answer in <answer> </answer> tags, for example <answer> (1 + 2) / 3 = 1 </answer>."
      },
      {
        "role": "assistant",
        "content": "Let me solve this step by step.\n<think>"
      }]
    # continue_final_message=True keeps the assistant turn open so the model continues after "<think>"
    return {"prompt": tokenizer.apply_chat_template(r1_prefix, tokenize=False, continue_final_message=True), "target": target}

# convert our dataset to the r1 prompt
dataset = dataset.map(lambda x: generate_r1_prompt(x["nums"], x["target"]))

# split the dataset into train and test
train_test_split = dataset.train_test_split(test_size=0.1)

train_dataset = train_test_split["train"]
test_dataset = train_test_split["test"]

3. Train the model using GRPO (Educational part)

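Note: Section 3 covers the basics of how to use TRL and GRPO. If you want to run the interactive cells, you need to install bitsandbytes and peft, as they are required for the Trainer class. This section is mostly for educational purposes.

TRL supports Group Relative Policy Optimization (GRPO) through a dedicated GRPOTrainer for aligning LLMs from preference data, as described in DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. The GRPOTrainer is a subclass of the Trainer from the transformers library and supports all the same features, including logging, checkpointing, distributed training, and parameter-efficient fine-tuning (PEFT).

The GRPOTrainer supports generic Outcome Reward Models (ORM) and custom reward functions, which can be used to implement rule-based reward models. In the Deepseek R1 paper, they implemented rule-based reward models to verify the correctness of the generated solutions. In our example, we take a similar approach and create 2 reward functions:

Format reward: Checks whether the generated output follows the correct format <think> [thinking] </think><answer> [answer] </answer>

Accuracy reward: Extracts the equation from the <answer> tag, evaluates it against the target, and checks that every number is used exactly once.

Note: In our example, a correct <answer> contains only the equation, for example <answer> 55 + 36 - 7 - 19 </answer>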
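Before the full reward functions, the two checks behind the accuracy reward can be sketched in isolation. This sketch evaluates the equation with Python's `ast` module instead of `eval`, which is a substitute technique and not what the tutorial code that follows uses. The helper names `safe_eval` and `uses_numbers_once` are hypothetical.

```python
import ast
import operator
import re
from collections import Counter

# Only the four basic arithmetic operations are allowed.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def safe_eval(expr):
    """Evaluate an arithmetic expression; raise ValueError for anything else."""
    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if (isinstance(node, ast.Constant)
                and isinstance(node.value, (int, float))
                and not isinstance(node.value, bool)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -_eval(node.operand)
        raise ValueError("unsupported expression")
    return _eval(ast.parse(expr.strip(), mode="eval"))


def uses_numbers_once(expr, numbers):
    """Check that the equation uses exactly the available numbers, each once."""
    used = [int(n) for n in re.findall(r"\d+", expr)]
    return Counter(used) == Counter(numbers)


equation = " 55 + 36 - 7 - 19 "
print(uses_numbers_once(equation, [19, 36, 55, 7]), safe_eval(equation))
```

Walking the syntax tree rejects function calls, names, and attribute access by construction, so no separate character allow-list is needed.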

import re

def format_reward_func(completions, target, **kwargs):
    """
    Format: <think>...</think><answer>...</answer>
    Args:
        completions (list[str]): Generated outputs
        target (list[str]): Expected answers

    Returns:
        list[float]: Reward scores
    """
    rewards = []

    for completion, gt in zip(completions, target):
        try:
            # add synthetic <think> as it's already part of the prompt and prefilled for the assistant to more easily match the regex
            completion = "<think>" + completion
            # Check if the format is correct
            regex = r"^<think>([^<]*(?:<(?!/?think>)[^<]*)*)<\/think>\n<answer>([\s\S]*?)<\/answer>$"

            match = re.search(regex, completion, re.DOTALL)
            # if the format is not correct, reward is 0
            if match is None or len(match.groups()) != 2:
                rewards.append(0.0)
            else:
                rewards.append(1.0)
        except Exception:
            rewards.append(0.0)
    return rewards

def equation_reward_func(completions, target, nums, **kwargs):
    """
    Evaluates completions based on the mathematical correctness of the answer

    Args:
        completions (list[str]): Generated outputs
        target (list[str]): Expected answers
        nums (list[str]): Available numbers

    Returns:
        list[float]: Reward scores
    """
    rewards = []
    for completion, gt, numbers in zip(completions, target, nums):
        try:
            # add synthetic <think> as it's already part of the prompt and prefilled for the assistant to more easily match the regex
            completion = "<think>" + completion
            # Check if the format is correct
            match = re.search(r"<answer>(.*?)<\/answer>", completion)
            if match is None:
                rewards.append(0.0)
                continue
            # Extract the "answer" part from the completion
            equation = match.group(1).strip()
            # Extract all numbers from the equation
            used_numbers = [int(n) for n in re.findall(r'\d+', equation)]

            # Check if all numbers are used exactly once
            if sorted(used_numbers) != sorted(numbers):
                rewards.append(0.0)
                continue
            # Define a regex pattern that only allows numbers, operators, parentheses, and whitespace
            allowed_pattern = r'^[\d+\-*/().\s]+$'
            if not re.match(allowed_pattern, equation):
                rewards.append(0.0)
                continue

            # Evaluate the equation with restricted globals and locals
            result = eval(equation, {"__builtins__": None}, {})
            # Check if the equation is correct and matches the ground truth
            if abs(float(result) - float(gt)) < 1e-5:
                rewards.append(1.0)
            else:
                rewards.append(0.0)
        except Exception:
            # If evaluation fails, reward is 0
            rewards.append(0.0)
    return rewards

Let's try our reward functions with a sample.

Note: None of these examples starts with <think>, because we add it synthetically to the prompt.

correct_sample_1 = """We need to find an equation using the numbers 19, 36, 55, and 7
exactly once, with basic arithmetic operations, that equals 65. One possible
combination is 55 + 36 - 19 + 7... </think>
<answer> 55 + 36 - 7 - 19 </answer>"""

correct_sample_2 = """ ... </think>
<answer> 55 + 36 - 7 - 19 </answer>"""

wrong_format = """User: Using the numbers [19, 36, 55, 7], create an equation that equals 65."""

wrong_format_2 = """To find the equation that equals 79 using the numbers 95, 78, 6, 88, I'll start by adding 88 and 95:
95 + 88 = 183
Now, let's subtract 104 from 183 to get 79:
183 - 104 = 79
<think> 183 - 104 = 79 </think><think> 183 - 104 = 79 </think><answer> 183 - 104 = 79 </answer>"""

wrong_result = """ ... </think>
<answer> 55 + 36 - 7 - 18 </answer>"""

# The format reward only checks structure, so wrong_result still scores 1.0 here
test_rewards = format_reward_func(completions=[correct_sample_1, correct_sample_2, wrong_format, wrong_format_2, wrong_result], target=["65", "65", "65", "65", "65"], nums=[[19, 36, 55, 7]] * 5)
assert test_rewards == [1.0, 1.0, 0.0, 0.0, 1.0], "Reward function is not working"

test_rewards = equation_reward_func(completions=[correct_sample_1, correct_sample_2, wrong_format, wrong_format_2, wrong_result], target=["65", "65", "65", "65", "65"], nums=[[19, 36, 55, 7]] * 5)
assert test_rewards == [1.0, 1.0, 0.0, 0.0, 0.0], "Reward function is not working"

Looks good. Now let's define the remaining training parameters, create a trainer, and start training.

from trl import GRPOConfig, GRPOTrainer, get_peft_config, ModelConfig

# our model we are going to use as policy
model_config = ModelConfig(
    model_name_or_path="Qwen/Qwen2.5-3B-Instruct",
    torch_dtype="bfloat16",
    attn_implementation="flash_attention_2",
    use_peft=True,
    load_in_4bit=True,
)

# Hyperparameters
training_args = GRPOConfig(
    output_dir="qwen-r1-aha-moment",
    learning_rate=5e-7,
    lr_scheduler_type="cosine",
    logging_steps=10,
    max_steps=100,
    per_device_train_batch_size=1,
    gradient_accumulation_steps=1,
    gradient_checkpointing=True,
    gradient_checkpointing_kwargs={"use_reentrant": False},
    bf16=True,
    # GRPO specific parameters
    max_prompt_length=256,
    max_completion_length=1024, # max length of the generated output for our solution
    num_generations=2,
    beta=0.001,
)

trainer = GRPOTrainer(
    model=model_config.model_name_or_path,
    reward_funcs=[format_reward_func, equation_reward_func],
    args=training_args,
    train_dataset=train_dataset,
    eval_dataset=test_dataset,
    peft_config=get_peft_config(model_config),
)

We can start training by calling the train method on the trainer instance.

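Note: Reinforcement learning training is very slow and compute-intensive. On 1x L4 with Q-LoRA, a batch size of 1 and only 2 generations per sample, a single step takes more than 20 minutes.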

# Train and push the model to the Hub
trainer.train()
# Save model
trainer.save_model(training_args.output_dir)

4. Distributed Training example for GRPO using Deepspeed and vLLM

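More than 20 minutes per step with only 2 generations per sample is not feasible. We need to scale up our training. Hugging Face TRL added support for distributed training with Deepspeed and for using vLLM for faster generation. I prepared a run_r1_grpo.py script and a recipes/grpo-qwen-2.5-3b-deepseek-r1-countdown.yaml config file to run the training.

This configuration was tested and validated on a node with 4x H100 80GB GPUs, where a single step takes around 45-60s, as we can leverage vLLM for generation and Deepspeed for distributed training. For this to work, we need to set num_processes to the number of GPUs minus 1, because the last GPU is used by vLLM for generation. If you use more GPUs, you need to change vllm_device in the config file to the index of the last GPU; for example, with 8 GPUs you need to set vllm_device=7 and num_processes to 7.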
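The GPU split described above can be written down as a small helper. This is only a sketch: `grpo_gpu_layout` is a hypothetical function, and it returns the vLLM device as a plain GPU index as in the text; check the exact value format your config file expects.

```python
def grpo_gpu_layout(num_gpus):
    """Split the GPUs of one node into training processes and one vLLM generation GPU."""
    if num_gpus < 2:
        raise ValueError("need at least 2 GPUs: one for vLLM and one for training")
    last_gpu = num_gpus - 1
    return {
        "num_processes": num_gpus - 1,  # GPUs 0..last_gpu-1 run the training processes
        "vllm_device": last_gpu,        # the last GPU is reserved for vLLM generation
    }


for gpus in (4, 8):
    print(gpus, grpo_gpu_layout(gpus))
```

With 4 GPUs this gives 3 training processes with vLLM on GPU 3, matching the `--num_processes 3` flag in the launch command.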

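Command to run the training:

accelerate launch --num_processes 3 --config_file configs/accelerate_configs/deepspeed_zero3.yaml scripts/run_r1_grpo.py --config receipes/grpo-qwen-2.5-3b-deepseek-r1-countdown.yaml

With the optimized distributed training, a single step with 8 generations per sample on 4x H100 80GB takes around 45-60s. The full training for 450 steps takes around 6 hours.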
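As a quick sanity check on these numbers, the step time can be converted into total wall-clock time. The helper name `training_hours` is hypothetical, and the calculation ignores checkpointing, evaluation, and startup overhead.

```python
def training_hours(steps, seconds_per_step):
    """Total wall-clock hours for a run with a constant step time."""
    return steps * seconds_per_step / 3600


low = training_hours(450, 45)   # 5.625 hours at 45 s per step
high = training_hours(450, 60)  # 7.5 hours at 60 s per step
print(f"{low:.2f} to {high:.2f} hours")
```

The reported total of around 6 hours corresponds to an average of about 48 seconds per step, which sits inside the 45-60s range.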

5. Results and Training Observations

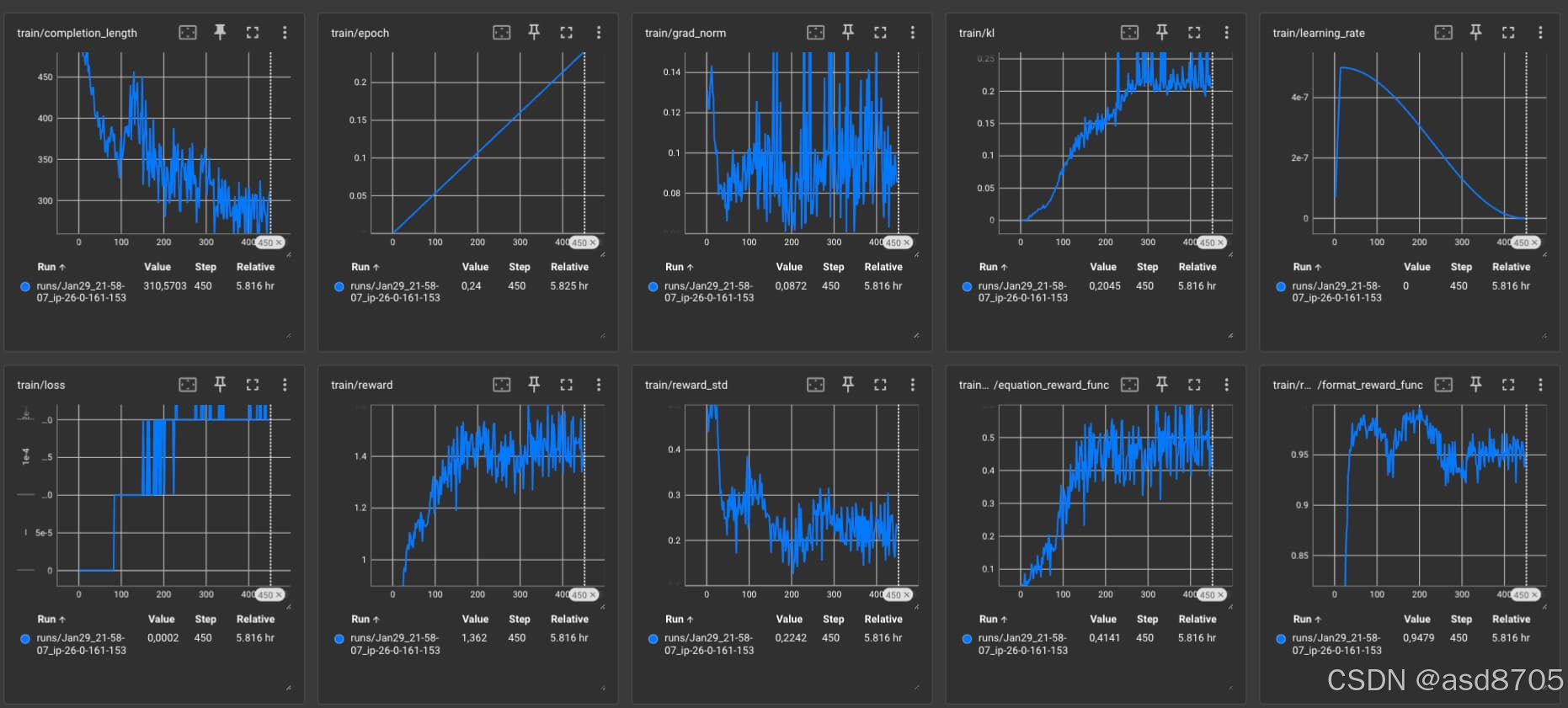

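The script saves random completions to the completion_samples folder, which you can use to inspect the model's progress. It includes completion_samples.txt and success_completion_samples.txt. completion_samples.txt contains all completions, while success_completion_samples.txt contains only those that solve the equation correctly. Below you can find interesting training observations on how performance changes over time, as well as the Tensorboard logs and successful reasoning samples.

The model, with checkpoints saved every 25 steps, can be found at philschmid/qwen-2.5-3b-r1-countdown.

Hyperparameters

I started the experiment with the hyperparameters from the DeepSeekMath paper, a learning rate of 1e-6 and a beta (KL coefficient) of 0.04, which led to unstable training runs after around 150 steps. I ran some small ablations and decreased the learning rate to 5e-7 and the beta to 0.001, based on a test from OpenRLHF. I did not test how increasing num_generations from 8 to 64 would affect the training. 64 is the generation value used in the DeepSeekMath paper. All other parameters can be found in the grpo-qwen-2.5-3b-deepseek-r1-countdown.yaml config file.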
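To show what beta controls, here is a simplified per-token sketch of how the KL term enters the objective. It assumes the non-negative KL estimator of the form exp(d) - d - 1 with d = log pi_ref - log pi_theta, and it leaves out the importance ratio and clipping for brevity. The function names and the example log-probabilities are made up for illustration.

```python
import math


def kl_penalty(logp_policy, logp_ref):
    """Per-token KL estimate exp(d) - d - 1, where d = logp_ref - logp_policy."""
    d = logp_ref - logp_policy
    return math.exp(d) - d - 1.0


def penalized_term(advantage, logp_policy, logp_ref, beta):
    """Simplified per-token objective: advantage minus the beta-weighted KL penalty."""
    return advantage - beta * kl_penalty(logp_policy, logp_ref)


# Same drift from the reference model, two different KL coefficients.
for beta in (0.04, 0.001):
    print(beta, penalized_term(1.0, logp_policy=-0.5, logp_ref=-1.5, beta=beta))
```

A smaller beta lets the policy move further from the reference model for the same advantage. The penalty is zero only when both log-probabilities are equal.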

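Training Observations:

At ~50 steps, the model has learned the correct format <think>...</think>\n<answer>...</answer>.

At 100 steps, the success rate for solving the equation is around 25%. The model starts to "reason" in words; see the examples below.

At 200 steps, performance seems to converge much more slowly, and the success rate is ~40%. The model starts to learn a new "format" in which it solves the equation in a programmatic way, trying different combinations and reviewing the results; see the successful reasoning samples between step 200 and 450 below.

At 450 steps, the success rate for solving the equation is 50%. Performance still improves slowly, and the model keeps the new format from step 200.

I have four possible hypotheses for why the model shifts from "word reasoning" to "programmatic execution":

- Qwen 2.5 3B is not strong enough or too small; DeepSeek mentions that you need a very strong base model.

- The reward functions are not defined well enough, so the model reward-hacks its way to solving the equation. We could try to force it to use words, e.g. with a number-to-word frequency condition. (We don't know much about DeepSeek's reward functions.)

- Training only on the Countdown Game task might naturally push the model toward the most effective way of solving the equation, as no other formats are required.

- We didn't train long enough; in the R1 paper, they show visuals of training for over 8000 steps.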
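The "number-to-word frequency condition" from the second hypothesis could look roughly like the following. This was not tested in the experiment; `word_fraction`, `wordiness_reward`, and the 0.5 threshold are hypothetical choices, and the function signature only mirrors the style of the reward functions above.

```python
import re


def word_fraction(text):
    """Share of word tokens among all word and number tokens in the text."""
    tokens = re.findall(r"[A-Za-z]+|\d+(?:\.\d+)?", text)
    if not tokens:
        return 0.0
    words = sum(1 for token in tokens if token[0].isalpha())
    return words / len(tokens)


def wordiness_reward(completions, min_word_fraction=0.5, **kwargs):
    """Reward 1.0 when the completion uses enough words relative to numbers."""
    return [1.0 if word_fraction(c) >= min_word_fraction else 0.0 for c in completions]


samples = ["Let me add 5 and 40", "5 + 40 - 21 = 24"]
print(wordiness_reward(samples))
```

A reward like this can itself be gamed, for example by padding the output with filler words, so it would need to be checked against the sampled completions.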

Tensorboard results

Successful Reasoning Samples up to step 200

<think> First, I need to think about the numbers we have: 14, 70, and 80. To get 85, I think 80 might be a good starting point because it's right in between 85. If I subtract or add 80, it won't lead to 85 easily. Next, 70 seems to be a good intermediate number, especially to use in conjunction with 14.

Now, I can think about different combinations using addition, subtraction and multiplication/division. If I take 80 - 70 = 10, which is not needed. So, I need to find a way to use 14. If I subtract 14 from 80, I get 66, which still isn't 85. If I subtract 70 from 80, I get 10 again, this isn't constructive yet.

Multiplication could create numbers larger than the target 85. Let's consider division: If I divide 70 by 14, I get 5. Then if I add this result to 80, I get 85. This combination seems promising: 80 - (70 / 14) = 80 - 5 = 75, this still isn't quite right. Let me correct my approach: Let's use division and then addition. If I take 80 / (70 / 14) → 80 / 5 = 16, and then 80 - 16 = 64, still incorrect. Let me simplify: 80 / (70 / 14) → 80 / 5 = 16, and then 80 - 16 gives 64 again, let me correct: 80 - (70 / 14) → 80 - 5 = 75, but I need 85. Thus I will use the combinations in a slightly different order to insert numbers: I get 80 + (70 / 14) = 80 + 5 = 85</think>

<answer> 80 + (70 / 14) </answer>

<think> First, I need to consider how I can use the numbers 1, 21, and 90 to reach 68. I can try different combinations of operations. Let's try to use subtraction and addition. If I subtract 90 from 21, I get -69, which is too far from 68. Now, if I add 1 to 90, I get 91, which is also not right. Another way is to see what happens if I subtract 21 from 90, which gives me 69. This is closer but still not 68. Let me use multiplication instead of subtraction. 90 * 1 = 90. Now, if I try to reduce this slightly by subtracting 21, I am not getting close to 68.

Now, let's try another multiplication. I'll think of 1 * (larger value) + (smaller value). Let's try 1 * 90 + 21. This evaluates to 90 + 21 = 111, which is too much. Instead, I will try: 90 / 1 + 21 - 7. But this gives a division by 7, which seems complex. Let's re-evaluate: I'll use 1 to divide 21: 21 / 1 = 21. Now, subtract 21 from 90, and add 1, we have 90 - 21 = 69, using one...

Hey, wait on re-evaluating I will subtract 21 from 90, and include 1 to complete the solution: 90 - (21 + 1) which would be 68.</think>

<answer> 90 - (21 + 1) </answer>

<think> First, I need to consider the numbers we have: 59, 80, and 66. I need to use these numbers to get 45. Let's try to use subtraction first since it can quickly reduce large numbers.

Let's see if we can get close by subtracting or adding these numbers. If I subtract 66 from 80, I get 14. Then I need to make this 14 equal 45 when combined with 59. That gives me 14 + 59 = 73, which is too high.

Now let's try adding numbers. If I add 59 and 66, I get 125. Then I would need to subtract 80 from 125 to get 45, but 80 is too large to fit well with this.

Let me try a different approach. What if I subtract 66 from 80 first and then add 59? That would be 14 + 59 = 73, which is still not 45.

Hmm, I need to think of another combination. What if I start with 59 and then add 80 and subtract 66? That would be (59 + 80) - 66, let me calculate it. That equals 139 - 66, which is 73.

That's still not 45, so let me try rearranging it slightly: maybe 80 - 66 + 59 would be closer to 45. Calculating again: 80 - 66 is 14, then adding 59 gives 73.

This way doesn't work either. Let's flip this: maybe (59 + 66) - 80. Let me check: that's 125 - 80, which equals 45.</think>

<answer>(59 + 66) - 80</answer>

<think> First, I need to find a combination of the numbers 61, 4, 94, and 21 that can be manipulated using basic arithmetic operations to equal 50. One way to approach this is to look for numbers that can be used to reach a target number close to 50 and then adjust with the remaining numbers.

Let's try combining 94 and 21 first:

94 + 21 = 115

Now, we need to get from 115 to 50. We can try subtracting 65:

115 - 65 = 50

Now we need to find a way to get 65 using the remaining numbers 61 and 4. We can try:

61 - 4 = 57

Now we need to get from 57 to 65. Adding 8 would give us that:

57 + 8 = 65

So, we need to find a way to get 8 using 61 and 4. We can try:

61 - 94 = -36

This is negative, so let's try dividing:

61 / 94 = 0.648987...

This is not helping, so let's try multiplication and division in a different way:

(61 - 4) * (94 / 21) = 57 * 4.476... = 254.4

This is not working either. Let's try a different approach:

(94 - 61) = 33

Now we have:

33 + 21 = 54

We need to adjust from 54 to 50, so we can:

54 - 4 = 50

So, the equation becomes:

(94 - 61) + (21 - 4) = 50

</think>

<answer>(94 - 61) + (21 - 4)</answer>

<think> First, I'll consider the possibility of directly subtracting two numbers to get close to 31. If I subtract 57 from 63, I get 7. Now, if I subtract this 7 from 25, I get 25 - 7 = 18. Far from 31, so this doesn't work.

Next, let's try adding and subtracting. Dividing 63 by 25 will give a decimal, so that's unlikely to work. Now, let's see if adding and subtracting in another order could work. For example, let's try 63 - 25, and then we see this gets us 38. Now, we need a way to get to 31 from here. Subtracting 7 from 38 would work, but we can't figure out how to create that 7 from the given numbers.

Hmm, let me try to incorporate all numbers by adding and subtracting. If I try 63 + 25, I get 88. This is quite far from 31, but if 88 - 57, I get 31 exactly!

This seems to be the equation: 63 + 25 - 57 equals 31.

</think>

<answer> 63 + 25 - 57 </answer>

Successful Reasoning Samples between step 200 and 450

<think> We need to use the numbers 39, 16, and 55 with basic arithmetic operations to get 78. Let's try different combinations:

- 55 + 39 - 16 = 84 - 16 = 68 (too low)

- 55 + 16 - 39 = 71 - 39 = 32 (too low)

- 55 - 16 + 39 = 39 + 39 = 78 (this works)

So, the equation is 55 - 16 + 39 = 78. </think>

<answer> 55 - 16 + 39 </answer>

<think> We need to use the numbers 21, 5, and 40 with basic arithmetic operations to get 24. Let's try different combinations:

- 21 - 5 - 40 = -15

- 21 - 5 + 40 = 56

- 21 + 5 - 40 = -14

- 21 * 5 / 40 = 2.1 (not an integer, let's try another)

- 40 * 5 / 21 = 9.52 (not 24)

- 40 / 5 - 21 = 9 - 21 = -12

- 5 * 40 / 21 = 100 / 21 ~= 4.76 (not 24)

- 5 + 40 - 21 = 24 ! This works.

</think>

<answer> 5 + 40 - 21 </answer>

<think> We need to use the numbers 75, 99, 62, and 34 with basic arithmetic operations to get 72. Let's try different combinations:

- 99 - 75 + 62 - 34 = 92 - 34 = 58 (too high)

- 99 - 75 - 62 + 34 = 24 - 62 + 34 = -38 + 34 = -4 (too low)

- 99 + 75 - 62 - 34 = 174 - 96 = 78 (too high)

- 99 + 75 - 62 + 34 = 174 + 34 - 62 = 208 - 62 = 146 (too high)

- 99 - 75 + 62 + 34 = 24 + 96 = 120 (too high)

- 75 + 99 - 62 - 34 = 174 - 96 = 78 (too high)

- 75 + 99 - 62 + 34 = 174 + 34 - 62 = 208 - 62 = 146 (too high)

- 75 + 62 - 99 + 34 = 137 - 99 + 34 = 38 + 34 = 72

So, 75 + 62 - 99 + 34 equals 72.

</think>

<answer> 75 + 62 - 99 + 34 </answer>

Conclusion

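The release of DeepSeek R1 and its research paper might be a turning point for open science and open-source development. Just a week after the DeepSeek release, we have been able to reproduce a simple version of R1's learned "reasoning" using GRPO and the Countdown Game. While our implementation focuses on a specific task rather than general reasoning and converges to a very specific "reasoning" format, it shows that the method works.

In our mini R1 experiment, we used GRPO with two rule-based rewards, yet it already required significant compute: 4 H100 GPUs running for 6 hours to complete just 450 training steps on a 3B-parameter model. This gives us an idea of the compute needed to scale reinforcement learning. DeepSeek ran a 671B model for over 8000 steps, and they probably did many ablations.

Looking ahead to 2025, it's clear that we are on the cusp of even more significant progress. RL will become more accessible and user-friendly, and more researchers and developers will explore its potential, but it will also require more compute than before and more than supervised fine-tuning.

I am excited for 2025. If you have any questions or ideas, feel free to reach out to me.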
