ijkplayer Source Code Analysis: Synchronizing Video to Audio

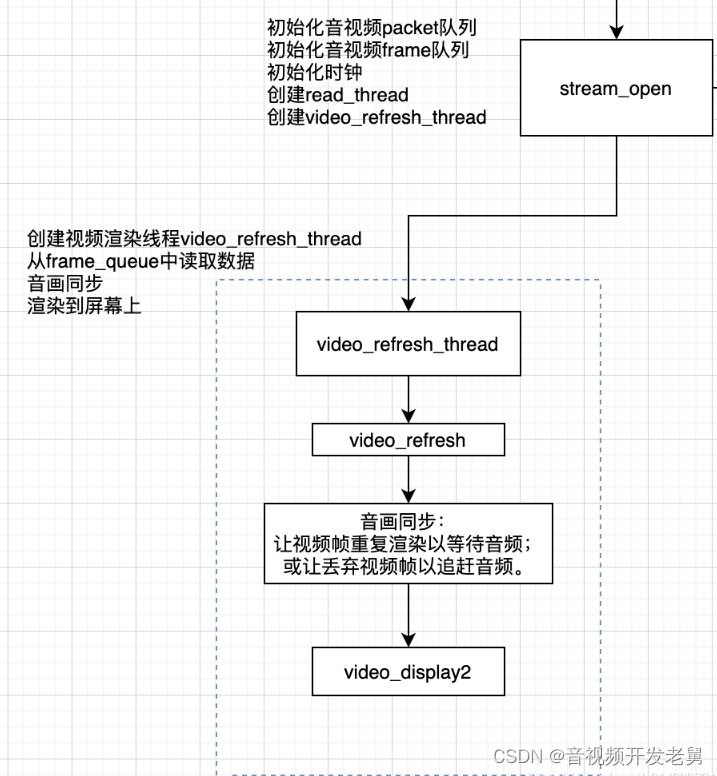


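This series consists of:

Video rendering flow

Audio playback flow

read thread flow

Audio decoding flow

Video decoding flow

Synchronizing video to audio

The start flow and the buffering strategy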

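This article is the sixth in the series. It analyzes audio/video synchronization in ijkplayer, which takes place in video_refresh_thread, as shown in the flowchart.

Basic concepts of audio/video synchronization

Audio and video are decoded and output on independent threads. Without synchronization, each stream plays at its own pace and sound and picture drift apart, so their output has to be synchronized.

There are three synchronization strategies:

Sync video to audio, i.e. audio is the master clock

Sync audio to video, i.e. video is the master clock

Sync both video and audio to an external clock, i.e. an external clock (system time) is the master clock

This article analyzes syncing video to audio, which is the approach most commonly used in the industry. People are more sensitive to glitches in sound than in picture, so smooth audio playback takes priority and video follows audio: video frames are dropped, or kept on screen longer, to catch up with or wait for the audio.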


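Quantities used in audio/video synchronization

Playback is calibrated with the pts carried by audio and video frames: a video frame is kept on screen longer to wait for the audio, or video frames are dropped to catch up with it.

Time unit

ijkplayer works in seconds throughout. Every timestamp is converted to seconds, which is why pts-related fields are of type double.

AVRational

The time base in FFmpeg: the unit in which timestamps are counted, expressed as a rational number.

For example, with (1, 25) each tick is 1/25 s, i.e. 0.04 s.

So pts = 25 means the frame's timestamp is 1 s.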

typedef struct AVRational {
    int num; ///< Numerator
    int den; ///< Denominator
} AVRational;

typedef struct AVStream {
    // ... other fields omitted
    AVRational time_base;
} AVStream;

pts

Each Frame in frame_queue gets its pts in the audio/video decode threads, after a Packet has been decoded and before the frame is enqueued: frame->pts = avframe->pts * av_q2d(tb)

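// Frame (ijkplayer)
typedef struct Frame {
    AVFrame *frame;
    double pts; // presentation timestamp, in seconds
    // ... other fields omitted
} Frame;

// AVFrame (FFmpeg)
typedef struct AVFrame {
    int64_t pts; // presentation timestamp, in time_base units
    // ... other fields omitted
} AVFrame;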
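The conversion from time-base ticks to seconds can be tried out in isolation. The following is a minimal sketch, not ijkplayer code: Rational, rational_to_double and pts_to_seconds are hypothetical stand-ins for AVRational, av_q2d and the frame->pts assignment above, so that the snippet compiles without FFmpeg.

```c
#include <stdint.h>

/* Hypothetical stand-in for FFmpeg's AVRational (a num/den time base). */
typedef struct Rational {
    int num; /* numerator */
    int den; /* denominator */
} Rational;

/* Same arithmetic as av_q2d(): turn the rational into a double. */
double rational_to_double(Rational r) {
    return (double)r.num / (double)r.den;
}

/* Convert a timestamp counted in time-base ticks into seconds. */
double pts_to_seconds(int64_t pts_ticks, Rational time_base) {
    return (double)pts_ticks * rational_to_double(time_base);
}
```

With a time base of (1, 25), pts_to_seconds(25, tb) gives one second, which matches the example in the text.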

frame_timer

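frame_timer is the time at which the frame currently on screen was due to be shown. It is compared with the system clock to drive playback forward.

It is initialized when the ffp_start_l flow calls toggle_pause(0).

VideoState is created in stream_open during the prepare stage. It is a single catch-all struct that bundles everything needed at runtime.

struct VideoState {
    // ... other fields omitted
    double frame_timer;
};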
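In the full video_refresh listing at the end of this article, frame_timer is advanced by delay once the frame on screen has used up its time, and is snapped back to the system time if it has fallen too far behind. A minimal sketch of just that step follows. advance_frame_timer is a hypothetical helper, and the threshold value 0.1 is an assumption standing in for AV_SYNC_THRESHOLD_MAX, so check the ijkplayer headers for the real definition.

```c
/* Assumed value; stands in for ijkplayer's AV_SYNC_THRESHOLD_MAX. */
#define SKETCH_SYNC_THRESHOLD_MAX 0.1

/*
 * Hypothetical helper: returns the new frame_timer after the frame on
 * screen has been shown for `delay` seconds. `now` is the system time
 * in seconds, on the same timeline as frame_timer.
 */
double advance_frame_timer(double frame_timer, double delay, double now) {
    frame_timer += delay; /* push the playback position forward */
    /* if playback has drifted too far behind the wall clock, resync to it */
    if (delay > 0 && now - frame_timer > SKETCH_SYNC_THRESHOLD_MAX)
        frame_timer = now;
    return frame_timer;
}
```

Resetting to the system time keeps a long stall (for example after buffering) from being treated as a backlog of late frames.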

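master clock

When video syncs to audio, the master clock is the audio clock. The version below is simplified to the case where the clock is not extrapolated (speed is 0), in which the function returns the stored pts. The actual function calls get_clock(&is->audclk), as shown in the full listing at the end.

// simplified
static double get_master_clock(VideoState *is) {
    return is->audclk.pts;
}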
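To see how the clock extrapolates between updates, here is a small sketch of the pts_drift arithmetic used by set_clock_at and get_clock in the full listing. SketchClock and the two functions are hypothetical names. Unlike the original, the current time is passed in as a parameter instead of being read from av_gettime_relative(), and the serial check is left out.

```c
/* Hypothetical, trimmed-down version of the Clock struct. */
typedef struct SketchClock {
    double pts;          /* pts at the last update, in seconds */
    double pts_drift;    /* pts minus the system time at the last update */
    double last_updated; /* system time of the last update, in seconds */
    double speed;        /* playback speed factor */
    int paused;          /* non-zero while paused */
} SketchClock;

/* Record a new pts together with the time at which it was observed. */
void sketch_set_clock_at(SketchClock *c, double pts, double now) {
    c->pts = pts;
    c->last_updated = now;
    c->pts_drift = pts - now;
}

/* Read the clock at system time `now`, extrapolating from the last update. */
double sketch_get_clock_at(const SketchClock *c, double now) {
    if (c->paused)
        return c->pts;
    return c->pts_drift + now - (now - c->last_updated) * (1.0 - c->speed);
}
```

With speed 1.0 the clock advances together with the system time. With speed 0 the extrapolation term cancels out and the stored pts is returned, which is the case the text above refers to.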

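Code analysis of audio/video synchronization

Synchronization happens in three places:

During rendering, if the current time is earlier than the deadline of the frame on screen (frame_timer plus its display duration), remaining_time is adjusted so that the render thread sleeps a little and the frame stays on screen longer.

During rendering, if several frames are waiting in the queue and the current system time is already past the deadline of the frame about to be shown (its start time plus its display duration), that frame is dropped and the render logic starts over.

During decoding, if a decoded frame lags the master clock by less than AV_NOSYNC_THRESHOLD, the frame-dropping logic is entered.

AV_NOSYNC_THRESHOLD is 100 s: synchronization is attempted only while the audio/video difference is within 100 s, and no correction is made beyond that.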

Core synchronization code during rendering

The first two cases above, keeping the current frame on screen longer and dropping a late frame when more frames are queued, are both handled in video_refresh:

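static void video_refresh(FFPlayer *opaque, double *remaining_time) {
    // Excerpt: the retry/display labels and several checks are omitted,
    // see the full listing at the end.
    FFPlayer *ffp = opaque;
    VideoState *is = ffp->is;
    double time;
    double last_duration, duration, delay;
    Frame *vp, *lastvp;

    /*
     * lastvp: the previous frame, i.e. the one currently on screen
     * vp:     the frame to be shown this time
     * nextvp: the frame to be shown after vp
     */
    lastvp = frame_queue_peek_last(&is->pictq);
    vp = frame_queue_peek(&is->pictq);

    // nominal display duration of the frame on screen, from the pts difference
    last_duration = vp_duration(is, lastvp, vp);

    // how long the frame on screen should really stay, after sync correction
    delay = compute_target_delay(ffp, last_duration, is);

    // system time, in seconds
    time = av_gettime_relative() / 1000000.0;

    // 1. Not yet at the deadline of the frame on screen: shorten remaining_time
    //    so the render thread sleeps a little and the frame stays on screen
    if (time < is->frame_timer + delay) {
        *remaining_time = FFMIN(is->frame_timer + delay - time, *remaining_time);
        goto display; // go render
    }

    // (the full code advances frame_timer by delay at this point)

    // 2. Several frames are queued and the system time is already past vp's
    //    deadline: drop vp and start over
    if (frame_queue_nb_remaining(&is->pictq) > 1) { // more than one frame queued
        Frame *nextvp = frame_queue_peek_next(&is->pictq);
        duration = vp_duration(is, vp, nextvp);
        if (!is->step &&
            (ffp->framedrop > 0 ||
             (ffp->framedrop && get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER))
            && time > is->frame_timer + duration // system time > deadline of vp
        ) {
            frame_queue_next(&is->pictq); // drop: advance the read index by one
            goto retry;                   // start over
        }
    }
}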
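The two render-time decisions can be condensed into a pure function that is easy to reason about. This is a simplified sketch rather than the ijkplayer implementation: decide_refresh and RefreshAction are hypothetical names, the frame_timer resync and the is->step check are left out, and the framedrop condition is reduced to a single flag.

```c
/* Hypothetical result type for one pass of the refresh logic. */
typedef enum RefreshAction {
    REFRESH_KEEP_SHOWING, /* frame on screen still has time left: sleep */
    REFRESH_DROP,         /* next frame is already too late: skip it */
    REFRESH_SHOW          /* move on and display the next frame */
} RefreshAction;

/*
 * now:            system time in seconds
 * frame_timer:    time at which the frame on screen was due
 * delay:          corrected display duration of the frame on screen
 * next_duration:  display duration of the frame about to be shown
 * frames_queued:  number of frames waiting in the picture queue
 * framedrop_on:   non-zero if frame dropping is enabled
 */
RefreshAction decide_refresh(double now, double frame_timer, double delay,
                             double next_duration, int frames_queued,
                             int framedrop_on) {
    /* case 1: the frame on screen has not reached its deadline yet */
    if (now < frame_timer + delay)
        return REFRESH_KEEP_SHOWING;

    frame_timer += delay; /* the next frame nominally starts here */

    /* case 2: the next frame's own deadline has passed and another one is queued */
    if (frames_queued > 1 && framedrop_on && now > frame_timer + next_duration)
        return REFRESH_DROP;

    return REFRESH_SHOW;
}
```

For 25 fps content (0.04 s per frame) with frame_timer at 10.0, a call at 10.00 keeps the current frame, a call at 10.05 shows the next frame, and a call at 10.10 with three frames queued drops it.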

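Core synchronization code during decoding

During decoding, if video lags the master clock by less than AV_NOSYNC_THRESHOLD, the drop logic is entered, i.e. the frame is not enqueued.

A frame is dropped at decode time only when it meets all the conditions. Dropping is neither unconditional nor continuous.

The software decoder is implemented in ffpipenode_ffplay_vdec.c,

the hardware decoder in ffpipenode_android_mediacodec_vdec.c,

and both contain the following logic: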

// timestamp of the decoded video frame, in seconds
dpts = frame->pts * av_q2d(tb);

// framedrop both enables dropping and caps consecutive drops: with framedrop = 120,
// after 120 consecutive drops the 121st late frame is let through and displayed,
// then dropping resumes
if (ffp->framedrop > 0
    || (ffp->framedrop && ffp_get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER)) {
    ffp->stat.decode_frame_count++;
    if (frame->pts != AV_NOPTS_VALUE) {
        // difference between this frame's pts and the master (audio) clock
        double diff = dpts - ffp_get_master_clock(is);
        /*
         * #define AV_NOSYNC_THRESHOLD 100.0, so -100 s < diff < 100 s
         * frame_last_filter_delay is normally 0, so the third test reduces to diff < 0
         * i.e. video lags audio by less than 100 s
         */
        if (!isnan(diff)
            && fabs(diff) < AV_NOSYNC_THRESHOLD
            && diff - is->frame_last_filter_delay < 0
            && is->viddec.pkt_serial == is->vidclk.serial // same serial
            && is->videoq.nb_packets) {                   // packet queue is not empty
            is->frame_drops_early++;
            is->continuous_frame_drops_early++;
            if (is->continuous_frame_drops_early > ffp->framedrop) {
                // cap reached: let this frame through and reset the counter
                is->continuous_frame_drops_early = 0;
            } else {
                ffp->stat.drop_frame_count++;
                ffp->stat.drop_frame_rate = (float)(ffp->stat.drop_frame_count) / (float)(ffp->stat.decode_frame_count);
                if (frame->opaque) {
                    SDL_VoutAndroid_releaseBufferProxyP(opaque->weak_vout, (SDL_AMediaCodecBufferProxy **)&frame->opaque, false);
                }
                av_frame_unref(frame);
                got_picture = 0; // drop this frame
            }
        }
    }
}
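The interplay between the lateness test and the consecutive-drop cap is easier to follow in a stripped-down form. The sketch below is an illustration under assumptions, not the ijkplayer code: DropState and should_drop_early are hypothetical names, framedrop is treated as a plain positive cap, and the frame_last_filter_delay, serial and packet-queue checks are left out.

```c
#include <math.h>

/* Mirrors AV_NOSYNC_THRESHOLD (100 s, as stated in the article). */
#define SKETCH_NOSYNC_THRESHOLD 100.0

/* Hypothetical state: number of late frames dropped in a row. */
typedef struct DropState {
    int continuous_drops;
} DropState;

/*
 * Returns 1 if the decoded frame should be dropped before it is enqueued.
 * frame_pts and master_clock are in seconds. framedrop is the maximum
 * number of consecutive drops; values <= 0 disable dropping in this sketch.
 */
int should_drop_early(DropState *st, double frame_pts, double master_clock,
                      int framedrop) {
    double diff = frame_pts - master_clock; /* negative: video is behind */

    if (framedrop <= 0)
        return 0;
    if (isnan(diff))
        return 0;
    if (diff <= -SKETCH_NOSYNC_THRESHOLD || diff >= SKETCH_NOSYNC_THRESHOLD)
        return 0; /* too far apart: no sync attempt */
    if (diff >= 0)
        return 0; /* on time or early: keep */

    st->continuous_drops++;
    if (st->continuous_drops > framedrop) {
        st->continuous_drops = 0; /* cap reached: let this one through */
        return 0;
    }
    return 1;
}
```

With framedrop set to 2 and every frame late, the pattern is drop, drop, keep, drop, drop, keep, so the picture still updates occasionally even while video is far behind.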

Walking through compute_target_delay

/*
 * @param delay: nominal duration of the frame currently on screen
 * @return: how long that frame should actually stay on screen
 */
static double compute_target_delay(FFPlayer *ffp, double delay, VideoState *is) {
    double sync_threshold, diff = 0;

    if (get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER) {
        // difference between the video clock and the audio clock: video - audio
        diff = get_clock(&is->vidclk) - get_master_clock(is);

        /*
         * Clamp delay into [AV_SYNC_THRESHOLD_MIN, AV_SYNC_THRESHOLD_MAX]:
         * delay < AV_SYNC_THRESHOLD_MIN: sync_threshold = AV_SYNC_THRESHOLD_MIN
         * delay > AV_SYNC_THRESHOLD_MAX: sync_threshold = AV_SYNC_THRESHOLD_MAX
         * otherwise:                     sync_threshold = delay
         */
        sync_threshold = FFMAX(AV_SYNC_THRESHOLD_MIN, FFMIN(AV_SYNC_THRESHOLD_MAX, delay));

        if (!isnan(diff) && fabs(diff) < AV_NOSYNC_THRESHOLD) {
            if (diff <= -sync_threshold) {
                // video lags audio: shorten the current frame so the next one is shown sooner
                delay = FFMAX(0, delay + diff);
            } else if (diff >= sync_threshold) {
                if (delay > AV_SYNC_FRAMEDUP_THRESHOLD) {
                    // video is ahead and the frame duration is already long:
                    // extend it by diff instead of doubling it
                    delay = delay + diff;
                } else {
                    // video is ahead: keep the current frame on screen twice as long
                    delay = 2 * delay;
                }
            } else {
                // within tolerance: leave delay unchanged
            }
        }
    }

    if (ffp) {
        ffp->stat.avdelay = delay;
        ffp->stat.avdiff = diff;
    }

    return delay; // the (possibly corrected) delay
}
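A few concrete numbers make the three branches easier to check. The sketch below reimplements only the arithmetic of compute_target_delay as a pure function. sketch_target_delay is a hypothetical name, and the threshold values (0.04, 0.1, 0.15) are assumptions about ijkplayer's definitions that should be verified against its headers. Only the 100 s value is stated in this article.

```c
#include <math.h>

/* Assumed values standing in for ijkplayer's AV_SYNC_* constants. */
#define SKETCH_SYNC_THRESHOLD_MIN 0.04
#define SKETCH_SYNC_THRESHOLD_MAX 0.1
#define SKETCH_FRAMEDUP_THRESHOLD 0.15
#define SKETCH_NOSYNC_THRESHOLD   100.0

#define SKETCH_MAX(a, b) ((a) > (b) ? (a) : (b))
#define SKETCH_MIN(a, b) ((a) < (b) ? (a) : (b))

/*
 * delay: nominal duration of the frame on screen, in seconds
 * diff:  video clock minus master (audio) clock, in seconds
 * Returns the corrected on-screen duration.
 */
double sketch_target_delay(double delay, double diff) {
    double sync_threshold =
        SKETCH_MAX(SKETCH_SYNC_THRESHOLD_MIN,
                   SKETCH_MIN(SKETCH_SYNC_THRESHOLD_MAX, delay));

    if (!isnan(diff) && diff > -SKETCH_NOSYNC_THRESHOLD &&
        diff < SKETCH_NOSYNC_THRESHOLD) {
        if (diff <= -sync_threshold) {
            /* video behind: shorten, but never below zero */
            delay = SKETCH_MAX(0.0, delay + diff);
        } else if (diff >= sync_threshold) {
            if (delay > SKETCH_FRAMEDUP_THRESHOLD)
                delay = delay + diff; /* long frame: extend by diff */
            else
                delay = 2 * delay;    /* short frame: show twice as long */
        }
        /* otherwise within tolerance: unchanged */
    }
    return delay;
}
```

For a 0.04 s frame: a diff of -0.1 (video 100 ms behind) yields 0, so the next frame is shown immediately, a diff of +0.1 yields 0.08, and a diff of 0.01 leaves the delay at 0.04.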

Full code

The code is pasted below. Focus on the comments, since the core logic has already been covered above.

Full code for rendering:

#define REFRESH_RATE 0.01 // poll every 10 ms by default

static int video_refresh_thread(void *arg) {
    FFPlayer *ffp = arg;
    VideoState *is = ffp->is;
    double remaining_time = 0.0;
    while (!is->abort_request) {
        if (remaining_time > 0.0)
            av_usleep((int) (int64_t) (remaining_time * 1000000.0));
        remaining_time = REFRESH_RATE;
        if (is->show_mode != SHOW_MODE_NONE && (!is->paused || is->force_refresh))
            video_refresh(ffp, &remaining_time); // adjusts the sleep time remaining_time
    }
    return 0;
}

static void video_refresh(FFPlayer *opaque, double *remaining_time) {
    FFPlayer *ffp = opaque;
    VideoState *is = ffp->is;
    double time;
    Frame *sp, *sp2;

    if (!is->paused && get_master_sync_type(is) == AV_SYNC_EXTERNAL_CLOCK && is->realtime)
        check_external_clock_speed(is);

    if (!ffp->display_disable && is->show_mode != SHOW_MODE_VIDEO && is->audio_st) {
        time = av_gettime_relative() / 1000000.0;
        if (is->force_refresh || is->last_vis_time + ffp->rdftspeed < time) {
            video_display2(ffp);
            is->last_vis_time = time;
        }
        *remaining_time = FFMIN(*remaining_time, is->last_vis_time + ffp->rdftspeed - time);
    }

    if (is->video_st) {
retry:
        if (frame_queue_nb_remaining(&is->pictq) == 0) {
            // nothing to do, no picture to display in the queue
            if (!ffp->first_video_frame_rendered) {
                live_log(ffp->inject_opaque, "[MTLive] video refresh, no picture to display\n");
                av_log(ffp, AV_LOG_DEBUG, "[MTLive] video refresh, no picture to display\n");
            }
        } else {
            av_log(ffp, AV_LOG_DEBUG, "av sync: start===========================================================\n");
            if (!ffp->first_video_frame_rendered) {
                live_log(ffp->inject_opaque, "[MTLive] video refresh start\n");
                av_log(ffp, AV_LOG_DEBUG, "[MTLive] video refresh start\n");
            }
            double last_duration, duration, delay;
            Frame *vp, *lastvp;

            /* dequeue the picture */
            /*
             * lastvp: the previous frame, i.e. the one currently on screen
             * vp:     the frame to be shown this time
             * nextvp: the frame to be shown after vp
             */
            lastvp = frame_queue_peek_last(&is->pictq);
            vp = frame_queue_peek(&is->pictq);

            if (vp->serial != is->videoq.serial) { // different serial: skip it
                frame_queue_next(&is->pictq);
                goto retry;
            }

            if (lastvp->serial != vp->serial) {
                av_log(ffp, AV_LOG_DEBUG, "av sync: frame_timer assignment #1, %d, %d", lastvp->serial, vp->serial);
                is->frame_timer = av_gettime_relative() / 1000000.0;
            }

            if (is->paused && ffp->first_video_frame_rendered)
                goto display;

            /* compute nominal last_duration */
            last_duration = vp_duration(is, lastvp, vp); // nominal duration of the frame on screen, from pts

            // how long the frame on screen should really stay, after sync correction
            delay = compute_target_delay(ffp, last_duration, is);

            // system time, in seconds
            time = av_gettime_relative() / 1000000.0;
            av_log(ffp, AV_LOG_DEBUG, "av sync: last_duration: %f, delay: %f, time: %f, frame_timer: %f\n", last_duration, delay, time, is->frame_timer);

            // frame_timer is ahead of the system time: pull it back to the system time
            if (isnan(is->frame_timer) || time < is->frame_timer) {
                av_log(ffp, AV_LOG_DEBUG, "av sync: frame_timer assignment #2");
                is->frame_timer = time;
            }
            av_log(ffp, AV_LOG_DEBUG, "av sync: time: %f, frame_timer: %f\n", time, is->frame_timer);

            // not yet at the deadline of the frame on screen: shorten remaining_time
            // so the render thread sleeps a little and the frame stays on screen
            if (time < is->frame_timer + delay) {
                *remaining_time = FFMIN(is->frame_timer + delay - time, *remaining_time);
                av_log(ffp, AV_LOG_DEBUG, "av sync: keep showing, remaining_time: goto display: %f", *remaining_time);
                goto display; // go render
            }

            // advance the playback position
            is->frame_timer += delay;

            // playback position is more than AV_SYNC_THRESHOLD_MAX behind the system time: resync to it
            if (delay > 0 && time - is->frame_timer > AV_SYNC_THRESHOLD_MAX) {
                av_log(ffp, AV_LOG_DEBUG, "av sync: frame_timer assignment #3");
                is->frame_timer = time;
            }

            SDL_LockMutex(is->pictq.mutex);
            if (!isnan(vp->pts))
                update_video_pts(is, vp->pts, vp->pos, vp->serial);
            SDL_UnlockMutex(is->pictq.mutex);

            av_log(ffp, AV_LOG_DEBUG, "av sync: frame_queue_nb_remaining %d\n", frame_queue_nb_remaining(&is->pictq));

            // several frames are queued and the system time is already past vp's deadline:
            // drop vp and start over
            if (frame_queue_nb_remaining(&is->pictq) > 1) {
                Frame *nextvp = frame_queue_peek_next(&is->pictq);
                duration = vp_duration(is, vp, nextvp);
                if (!is->step &&
                    (ffp->framedrop > 0 ||
                     (ffp->framedrop && get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER))
                    && time > is->frame_timer + duration // system time > deadline of vp
                ) {
                    frame_queue_next(&is->pictq); // drop: advance the read index by one
                    av_log(ffp, AV_LOG_DEBUG, "av sync: frame dropped, goto retry\n");
                    av_log(ffp, AV_LOG_DEBUG, "av sync: ===========================================================end\n\n");
                    goto retry; // start over
                }
            }

            frame_queue_next(&is->pictq); // move on to the next frame
            is->force_refresh = 1;

            SDL_LockMutex(ffp->is->play_mutex);
            if (is->step) {
                is->step = 0;
                if (!is->paused)
                    stream_update_pause_l(ffp);
            }
            SDL_UnlockMutex(ffp->is->play_mutex);
        }
display:
        /* display picture */
        av_log(ffp, AV_LOG_DEBUG, "av sync: display: %d, %d\n", is->force_refresh, is->pictq.rindex_shown);
        if (!ffp->display_disable && is->force_refresh && is->show_mode == SHOW_MODE_VIDEO && is->pictq.rindex_shown) {
            av_log(ffp, AV_LOG_DEBUG, "av sync: video_display2\n");
            av_log(ffp, AV_LOG_DEBUG, "av sync: ===========================================================end\n\n");
            video_display2(ffp); // display
        }
    }
    is->force_refresh = 0;
}

/*
 * @param delay: nominal duration of the frame currently on screen
 * @return: how long that frame should actually stay on screen
 */
static double compute_target_delay(FFPlayer *ffp, double delay, VideoState *is) {
    double sync_threshold, diff = 0;

    /* update delay to follow master synchronisation source */
    if (get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER) {
        /* if video is slave, we try to correct big delays by
           duplicating or deleting a frame */
        diff = get_clock(&is->vidclk) - get_master_clock(is); // video clock - audio clock

        /* skip or repeat frame. We take into account the
           delay to compute the threshold. I still don't know
           if it is the best guess */
        /*
         * Clamp delay into [AV_SYNC_THRESHOLD_MIN, AV_SYNC_THRESHOLD_MAX]:
         * delay < AV_SYNC_THRESHOLD_MIN: sync_threshold = AV_SYNC_THRESHOLD_MIN
         * delay > AV_SYNC_THRESHOLD_MAX: sync_threshold = AV_SYNC_THRESHOLD_MAX
         * otherwise:                     sync_threshold = delay
         */
        sync_threshold = FFMAX(AV_SYNC_THRESHOLD_MIN, FFMIN(AV_SYNC_THRESHOLD_MAX, delay));
        av_log(ffp, AV_LOG_DEBUG, "av sync: compute_target_delay#diff: %f, delay: %f, sync_threshold: %f\n", diff, delay, sync_threshold);

        /* -- by bbcallen: replace is->max_frame_duration with AV_NOSYNC_THRESHOLD */
        // Original else-if form, kept for reference:
        // if (!isnan(diff) && fabs(diff) < AV_NOSYNC_THRESHOLD) {
        //     if (diff <= -sync_threshold) {
        //         delay = FFMAX(0, delay + diff);
        //     } else if (diff >= sync_threshold && delay > AV_SYNC_FRAMEDUP_THRESHOLD) {
        //         delay = delay + diff;
        //     } else if (diff >= sync_threshold) {
        //         delay = 2 * delay;
        //     }
        // }
        if (!isnan(diff) && fabs(diff) < AV_NOSYNC_THRESHOLD) {
            if (diff <= -sync_threshold) {
                // video lags audio: shorten the current frame so the next one is shown sooner
                delay = FFMAX(0, delay + diff);
            } else if (diff >= sync_threshold) {
                if (delay > AV_SYNC_FRAMEDUP_THRESHOLD) {
                    // video is ahead and the frame duration is already long:
                    // extend it by diff instead of doubling it
                    delay = delay + diff;
                } else {
                    // video is ahead: keep the current frame on screen twice as long
                    delay = 2 * delay;
                }
            } else {
                // within tolerance: leave delay unchanged
            }
        }
    }

    if (ffp) {
        ffp->stat.avdelay = delay;
        ffp->stat.avdiff = diff;
    }
#ifdef FFP_SHOW_AUDIO_DELAY
    av_log(NULL, AV_LOG_TRACE, "video: delay=%0.3f A-V=%f\n", delay, -diff);
#endif

    return delay;
}

// the pts difference between two consecutive frames
static double vp_duration(VideoState *is, Frame *vp, Frame *nextvp) {
    if (vp->serial == nextvp->serial) {
        double duration = nextvp->pts - vp->pts;
        if (isnan(duration) || duration <= 0 || duration > is->max_frame_duration)
            return vp->duration;
        else
            return duration;
    } else {
        return 0.0;
    }
}

static double get_master_clock(VideoState *is) {
    double val;

    switch (get_master_sync_type(is)) {
        case AV_SYNC_VIDEO_MASTER:
            val = get_clock(&is->vidclk);
            break;
        case AV_SYNC_AUDIO_MASTER:
            val = get_clock(&is->audclk);
            break;
        default:
            val = get_clock(&is->extclk);
            break;
    }
    return val;
}

// with speed == 0 this returns exactly pts
static double get_clock(Clock *c) {
    if (*c->queue_serial != c->serial)
        return NAN;
    if (c->paused) {
        return c->pts;
    } else {
        double time = av_gettime_relative() / 1000000.0;
        return c->pts_drift + time - (time - c->last_updated) * (1.0 - c->speed);
    }
}

static void set_clock_at(Clock *c, double pts, int serial, double time) {
    c->pts = pts;
    c->last_updated = time;
    c->pts_drift = c->pts - time;
    c->serial = serial;
}

Full code for software decoding:


static int get_video_frame(FFPlayer *ffp, AVFrame *frame) {
    VideoState *is = ffp->is;
    int got_picture;

    ffp_video_statistic_l(ffp);
    if ((got_picture = decoder_decode_frame(ffp, &is->viddec, frame, NULL)) < 0) {
        error_log(ffp->inject_opaque, __func__, -1, "decoder_decode_frame() failed");
        live_log(ffp->inject_opaque, "decoder_decode_frame() failed");
        return -1;
    }

    // frame-drop handling
    if (got_picture) {
        double dpts = NAN;

        if (frame->pts != AV_NOPTS_VALUE)
            dpts = av_q2d(is->video_st->time_base) * frame->pts;

        frame->sample_aspect_ratio = av_guess_sample_aspect_ratio(is->ic, is->video_st, frame);

        if (ffp->framedrop > 0 || (ffp->framedrop && get_master_sync_type(is) != AV_SYNC_VIDEO_MASTER)) {
            ffp->stat.decode_frame_count++;
            if (frame->pts != AV_NOPTS_VALUE) {
                double diff = dpts - get_master_clock(is); // this frame's pts minus the master (audio) clock
                av_log(NULL, AV_LOG_INFO, "av sync: get_video_frame, diff:%f, frame_last_filter_delay: %f, nb_packets: %d, continuous_frame_drops_early: %d\n", diff, is->frame_last_filter_delay, is->videoq.nb_packets, is->continuous_frame_drops_early);
                /*
                 * #define AV_NOSYNC_THRESHOLD 100.0, so -100 s < diff < 100 s
                 * frame_last_filter_delay is normally 0, so the third test reduces to diff < 0
                 * i.e. video lags audio by less than 100 s
                 */
                if (!isnan(diff) && fabs(diff) < AV_NOSYNC_THRESHOLD &&
                    diff - is->frame_last_filter_delay < 0 &&
                    is->viddec.pkt_serial == is->vidclk.serial &&
                    is->videoq.nb_packets) {
                    is->frame_drops_early++;
                    is->continuous_frame_drops_early++;
                    // after framedrop consecutive drops, let one frame through
                    if (is->continuous_frame_drops_early > ffp->framedrop) {
                        av_log(NULL, AV_LOG_INFO, "get_video_frame, continuous_frame_drops_early:%d\n", is->continuous_frame_drops_early);
                        is->continuous_frame_drops_early = 0;
                    } else {
                        ffp->stat.drop_frame_count++;
                        ffp->stat.drop_frame_rate = (float) (ffp->stat.drop_frame_count) / (float) (ffp->stat.decode_frame_count);
                        av_frame_unref(frame);
                        got_picture = 0;
                        av_log(NULL, AV_LOG_INFO, "get_video_frame, av_frame_unref");
                    }
                }
            }
        }
    }

    return got_picture;
}
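The fallback behaviour of vp_duration in the rendering listing above is easy to overlook, so here is a small standalone sketch of it. SketchFrame and sketch_vp_duration are hypothetical names, and max_frame_duration is passed in directly instead of being read from VideoState.

```c
#include <math.h>

/* Hypothetical, trimmed-down version of the Frame struct. */
typedef struct SketchFrame {
    double pts;      /* presentation timestamp, in seconds */
    double duration; /* nominal duration stored with the frame, in seconds */
    int serial;      /* playback serial, changes after a seek */
} SketchFrame;

/*
 * Display duration of vp: normally the pts gap to the next frame. Falls
 * back to vp's own duration if the gap is NaN, non-positive or larger
 * than max_frame_duration, and is 0 if the serials differ.
 */
double sketch_vp_duration(const SketchFrame *vp, const SketchFrame *nextvp,
                          double max_frame_duration) {
    double gap;

    if (vp->serial != nextvp->serial)
        return 0.0;

    gap = nextvp->pts - vp->pts;
    if (isnan(gap) || gap <= 0 || gap > max_frame_duration)
        return vp->duration;
    return gap;
}
```

The fallback keeps a single bad timestamp, such as a pts that jumps backwards, from producing a negative or very large display duration.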
