Deep-Learning YOLOv5 Smoke Detection System (deep learning code + UI implementation + training dataset)



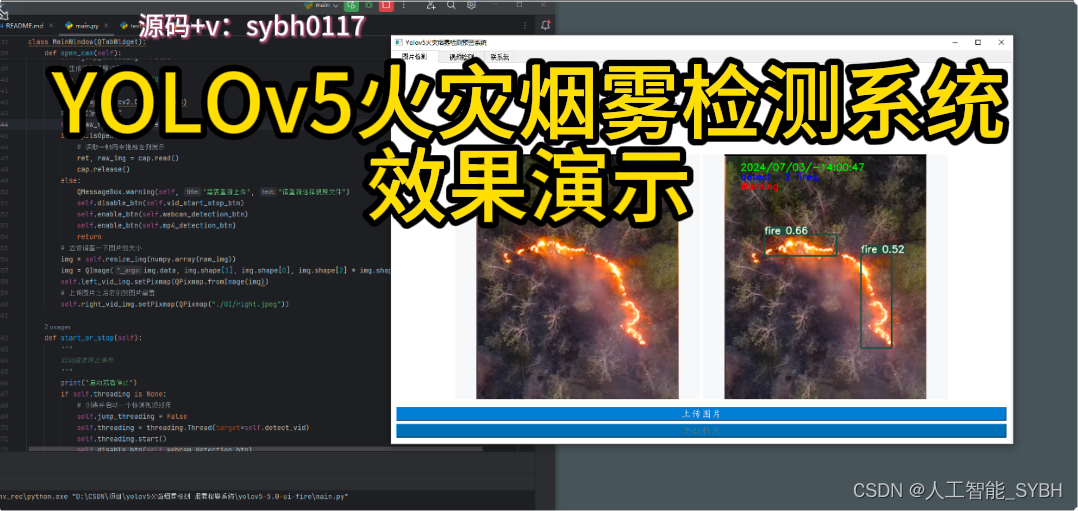

Results demo (for the complete source code, send me a private message and leave your contact details)

Demo and introduction of the YOLOv5-based fire and smoke detection system

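The complete package includes the dataset and the training code. The video shows how to set up the environment and how to change the text, images, logo, and other elements of the interface. The download link for the complete project files is in the description of the demo video: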

Demo and introduction of the YOLOv5-based fire and smoke detection system (Bilibili video)

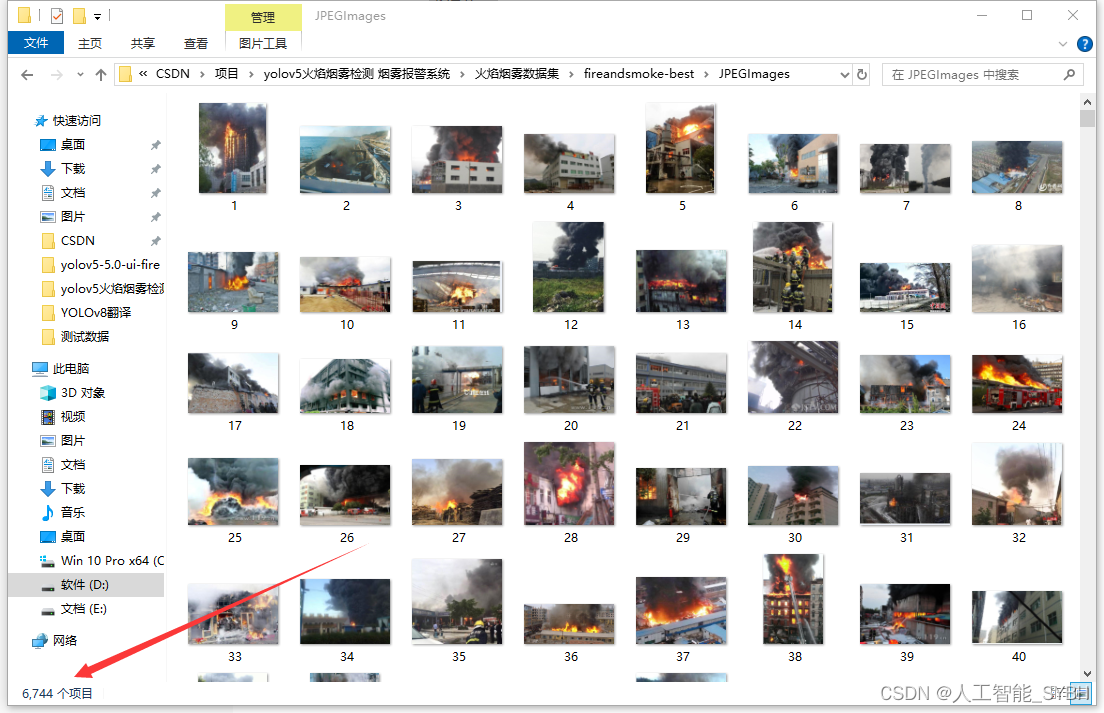

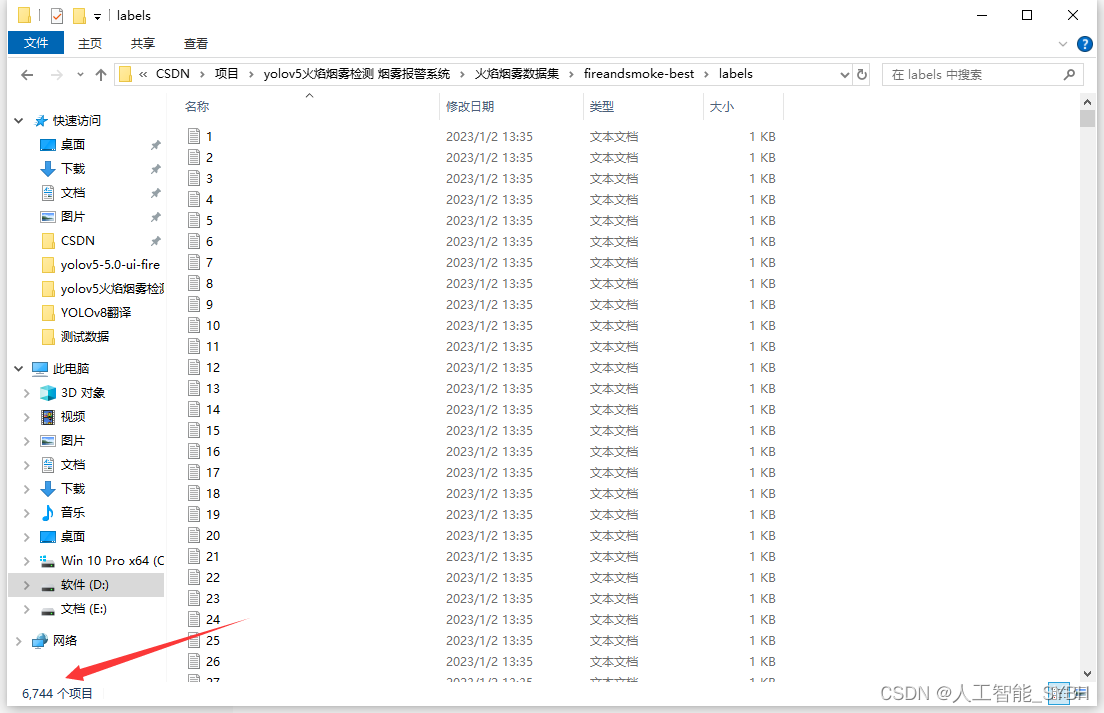

Dataset

The dataset contains 6,744 annotated images.
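The article does not say how these images are divided. However, the training script below reads separate `train` and `val` entries from the dataset YAML. Below is a minimal sketch of a reproducible split. It assumes a flat folder of images and a 90/10 ratio, and both of those are assumptions. `split_dataset` is a hypothetical helper, not part of the project.

```python
import random


def split_dataset(image_names, val_ratio=0.1, seed=0):
    """Shuffle image names reproducibly and split them into (train, val) lists."""
    names = sorted(image_names)          # sort first so the result does not depend on input order
    rng = random.Random(seed)            # local RNG: leaves the global random state untouched
    rng.shuffle(names)
    n_val = int(len(names) * val_ratio)  # round down so the validation set never exceeds the ratio
    return names[n_val:], names[:n_val]
```

With 6,744 names and `val_ratio=0.1`, this yields 6,070 training and 674 validation images. Each list can be written to a text file with one image path per line and referenced from the `train:` and `val:` keys of the data YAML. YOLOv5's loader accepts such list files, and it finds each label file by replacing the `images` directory in the image path with `labels`.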

Environment setup

The runs folder contains the result plots from training and evaluation.


Configure the environment with the Python version given here. Otherwise you may run into errors caused by incompatible dependencies.

Open a terminal (cmd) in the project directory.

(1) Create a new Python 3.10 environment with Anaconda:

conda create -n env_rec python=3.10

(2) Activate the environment you just created:

conda activate env_rec

(3) Install the required dependencies with pip from requirements.txt:

pip install -r requirements.txt

To use this environment in the IDE (the menu names here are PyCharm's), open Settings, find Project Python Interpreter, and click Add Interpreter.

Choose Conda, select the virtual environment you just created under Use existing environment, and click OK. If the Conda Executable field is empty, add the path of your anaconda3 installation.
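Once the interpreter is configured, run a quick check inside `env_rec`. It confirms the Python version and shows whether PyTorch can see a GPU; the GUI code below lets `select_device` pick CUDA first when one is available. This is a small sketch. `check_env` is a hypothetical helper, and it imports PyTorch only if PyTorch is installed.

```python
import importlib.util
import sys


def check_env(required=(3, 10)):
    """Report the interpreter version, whether it matches `required`,
    and (if PyTorch is installed) its version and CUDA availability."""
    report = {
        "python": sys.version.split()[0],
        "python_ok": sys.version_info[:2] == tuple(required),
        "torch": None,
        "cuda": False,
    }
    if importlib.util.find_spec("torch") is not None:  # import torch only when it is installed
        import torch
        report["torch"] = torch.__version__
        report["cuda"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for key, value in check_env().items():
        print(f"{key}: {value}")
```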

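Forest fires are among the most serious threats to ecosystems and to human life and property. Detecting a fire early and accurately is essential for effective firefighting and for limiting losses. With the rapid progress of artificial intelligence in recent years, the YOLOv5 algorithm has shown great promise for forest fire detection.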

I. The harm caused by forest fires and the limits of traditional detection methods

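Forest fires destroy large areas of trees and vegetation and reduce biodiversity. They also release large amounts of carbon dioxide and other greenhouse gases, which aggravates climate change. In addition, the smoke and ash they produce seriously degrade air quality and endanger the health of nearby residents.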

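Traditional forest fire detection relies mainly on manned lookout towers, satellite monitoring, and ground patrols, and each has clear limitations:

- Lookout towers cover a limited area and are easily affected by weather and terrain.
- Satellites cover large areas, but they observe a given location infrequently (low temporal resolution), and their data are complex to process.
- Ground patrols consume a great deal of manpower and resources and are inefficient.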

II. Overview of the YOLOv5 algorithm

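YOLOv5 is a deep-learning object detection algorithm that is fast, accurate, and easy to train. It detects objects end to end, predicting their classes and locations directly from the input image.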

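The core idea is to divide the input image into a grid of cells. Each cell is responsible for predicting the objects whose centers fall inside it. YOLOv5 predicts on feature maps at several scales (strides of 8, 16, and 32 pixels in the default models), which lets it detect objects of different sizes. It also uses techniques such as Mosaic data augmentation, automatic anchor fitting, and a CSP-based backbone with a PANet feature-fusion neck to improve detection performance. The default YOLOv5 model configurations do not include an attention module, although many modified versions add one.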
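Below is a minimal sketch of the grid rule just described. The cell responsible for an object is the one that contains its center, and that center lands in a different cell on each output scale. The sketch assumes a 640x640 input and the strides 8, 16, and 32 of the default YOLOv5 models. `responsible_cell` is a hypothetical helper. YOLOv5's real target assignment also matches each object to up to two neighbouring cells; this sketch leaves that out.

```python
def responsible_cell(cx, cy, stride):
    """Return (column, row) of the grid cell containing the box center (cx, cy),
    given in pixels of the network input, on a feature map with this stride."""
    return int(cx // stride), int(cy // stride)


# A 640x640 input gives 80x80, 40x40 and 20x20 grids at strides 8, 16 and 32.
for stride in (8, 16, 32):
    grid = 640 // stride
    col, row = responsible_cell(300.0, 150.0, stride)
    print(f"stride {stride:2d}: {grid}x{grid} grid, center in cell (col={col}, row={row})")
```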

III. Applying YOLOv5 to forest fire detection

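To apply YOLOv5 to forest fire detection, we first collect a large number of forest fire images and annotate them. Each annotation records the location, size, and class (for example, fire or smoke) of a target.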
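YOLOv5 reads one `.txt` label file per image, with one line per object. Each line holds the class index, then the box center x, center y, width, and height, all normalized to the 0-1 range by the image width and height. The sketch below converts a pixel-coordinate box into such a line. The class ids are hypothetical, since the article does not give the dataset's class order.

```python
def to_yolo_line(cls_id, box, img_w, img_h):
    """Convert a pixel box (x_min, y_min, x_max, y_max) into a YOLO label line
    'class x_center y_center width height' with coordinates normalized to 0-1."""
    x_min, y_min, x_max, y_max = box
    xc = (x_min + x_max) / 2 / img_w
    yc = (y_min + y_max) / 2 / img_h
    w = (x_max - x_min) / img_w
    h = (y_max - y_min) / img_h
    return f"{cls_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}"


# Hypothetical class ids: the article does not list the dataset's class order.
CLASS_IDS = {"fire": 0, "smoke": 1}
print(to_yolo_line(CLASS_IDS["smoke"], (100, 50, 300, 250), 640, 480))
```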

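We then train the YOLOv5 model on the annotated data. During training, the model learns to extract features from images and uses them to predict whether a fire is present and where.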
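The training script below sets the learning rate per epoch with `LambdaLR`. It uses either a cosine `one_cycle` multiplier or a linear one (`opt.linear_lr`). The sketch reproduces both multipliers in plain Python so the schedule can be inspected. `one_cycle` mirrors the helper of that name in YOLOv5's `utils/general.py`. The values `lr0=0.01`, `lrf=0.2`, and `epochs=100` are assumptions for illustration only.

```python
import math


def one_cycle(y1=0.0, y2=1.0, steps=100):
    """Cosine ramp from y1 (at x=0) to y2 (at x=steps)."""
    return lambda x: ((1 - math.cos(x * math.pi / steps)) / 2) * (y2 - y1) + y1


def linear(lrf, epochs):
    """Straight line from 1.0 at epoch 0 to lrf at the last epoch (epochs - 1)."""
    return lambda x: (1 - x / (epochs - 1)) * (1.0 - lrf) + lrf


lr0, lrf, epochs = 0.01, 0.2, 100  # assumed values, for illustration only
cos_lf, lin_lf = one_cycle(1, lrf, epochs), linear(lrf, epochs)
for epoch in (0, 50, 99):
    # the optimizer's learning rate at this epoch is lr0 * multiplier
    print(epoch, round(lr0 * cos_lf(epoch), 5), round(lr0 * lin_lf(epoch), 5))
```

Both curves start at `lr0` and fall toward `lr0 * lrf`; the cosine curve changes slowly at both ends and fastest in the middle. During the warmup iterations, the script overrides these values (see its `# Warmup` block).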

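The trained YOLOv5 model can be deployed with surveillance cameras, drones, and other devices to monitor forest areas in real time. When the model detects a fire, it immediately raises an alarm so that the responsible personnel can take action.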
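The GUI code below overlays a "Warning" label as soon as a single frame contains a `fire` or `smoke` box. A common refinement is to alarm only after several consecutive positive frames, which suppresses one-frame false positives. This project does not use that refinement; it is shown here only as an assumption. Below is a minimal sketch. `AlertDebouncer` and its parameters are hypothetical.

```python
from collections import deque

ALERT_CLASSES = {"fire", "smoke"}  # the class names the article's GUI code checks


class AlertDebouncer:
    """Signal an alert only after fire/smoke was detected in `k` consecutive frames
    (an assumed strategy; the article's GUI warns on any single frame)."""

    def __init__(self, k=3, conf_threshold=0.25):
        self.k = k
        self.conf_threshold = conf_threshold
        self.history = deque(maxlen=k)  # hit/miss flags of the most recent frames

    def update(self, detections):
        """`detections`: list of (class_name, confidence) pairs for one frame.
        Returns True when an alert should be raised for this frame."""
        hit = any(name in ALERT_CLASSES and conf >= self.conf_threshold
                  for name, conf in detections)
        self.history.append(hit)
        return len(self.history) == self.k and all(self.history)
```

In the `detect` loop, this would receive each frame's `(names[int(cls)], float(conf))` pairs. With `k=3`, an alert requires three consecutive positive frames, about 0.12 s at 25 FPS.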

IV. Advantages of YOLOv5 for forest fire detection

Compared with traditional methods, YOLOv5-based forest fire detection offers the following advantages:

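- Real-time performance: YOLOv5 processes images fast enough for real-time detection, which buys valuable time for firefighting.

- High accuracy: thanks to the strong feature extraction of deep learning, YOLOv5 can recognize forest fires reliably, reducing false alarms and missed detections.

- Robustness to complex environments: YOLOv5 can work under different weather (sunny, overcast, foggy) and lighting conditions, provided the training data cover them, which suits the complex forest environment.

- Multi-object detection: it can detect several fire regions at once, making monitoring more comprehensive.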
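Detecting several fire regions at once without reporting the same fire many times relies on non-maximum suppression (NMS). The GUI code calls YOLOv5's `non_max_suppression` with a confidence threshold of 0.25 and an IoU threshold of 0.45. The plain-Python sketch below shows the greedy single-class idea with the same thresholds. YOLOv5's implementation is vectorized, runs on tensors, and keeps classes separate unless class-agnostic NMS is requested. `iou` and `nms` are illustrative names.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, conf_threshold=0.25, iou_threshold=0.45):
    """Greedy single-class NMS: drop low-confidence boxes, then repeatedly keep the
    best remaining box and discard boxes overlapping it by more than iou_threshold.
    Returns indices of the kept boxes, highest score first."""
    order = sorted((i for i, s in enumerate(scores) if s >= conf_threshold),
                   key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep


boxes = [(10, 10, 110, 110), (20, 20, 120, 120), (300, 300, 400, 400), (0, 0, 5, 5)]
scores = [0.9, 0.8, 0.7, 0.1]
print(nms(boxes, scores))  # [0, 2]
```

The second box overlaps the first with an IoU of about 0.68, so it is dropped. The distant third box survives as a separate fire, and the fourth falls below the confidence threshold.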

V. Real-world applications and results

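In practice, YOLOv5-based forest fire detection systems have achieved notable results. For example, a YOLOv5-based monitoring system was deployed in a forest reserve in one region. It detected a fire at an early stage and raised the alarm in time, which let the fire department respond quickly and keep the damage to a minimum.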

The detection result images and data give a more direct view of how YOLOv5 performs in forest fire detection.

Source code (for the complete source code, send me a private message and leave your contact details)

```python
# -*- coding: UTF-8 -*-
"""
@Author: mz
@Date : 2022/3/6 20:43
@version V1.0
"""
import os
import random
import sys
import threading
import time

import cv2
import numpy
import torch
import torch.backends.cudnn as cudnn
from PyQt5.QtCore import *
from PyQt5.QtGui import *
from PyQt5.QtWidgets import *

from models.experimental import attempt_load
from utils.datasets import LoadImages, LoadStreams
from utils.general import check_img_size, non_max_suppression, scale_coords
from utils.plots import plot_one_box
from utils.torch_utils import select_device, time_synchronized

model_path = 'weights/best.pt'

# Shared style sheet for the blue push buttons
BUTTON_STYLE = ("QPushButton{color:white}"
                "QPushButton:hover{background-color: rgb(2,110,180);}"
                "QPushButton{background-color:rgb(48,124,208)}"
                "QPushButton{border:2px}"
                "QPushButton{border-radius:5px}"
                "QPushButton{padding:5px 5px}"
                "QPushButton{margin:5px 5px}")


# Main window class: image tab, video tab and an About tab
class MainWindow(QTabWidget):
    # Keep the basic configuration; only the third (About) tab needs customizing
    def __init__(self):
        # Initialize the window
        super().__init__()
        self.setWindowTitle('Yolov5火灾烟雾检测预警系统')
        self.resize(1200, 800)
        self.setWindowIcon(QIcon("./UI/xf.jpg"))
        # Image display settings
        self.output_size = 480
        self.img2predict = ""
        # An empty string lets select_device choose, preferring CUDA
        self.device = ''
        # Video source; '0' means the webcam
        self.vid_source = '0'
        # Thread that runs video detection
        self.threading = None
        # Flag telling the detection thread to leave its loop
        self.jump_threading: bool = False
        self.image_size = 640
        self.confidence = 0.25
        self.iou_threshold = 0.45
        # Load the model onto the selected device
        self.model = self.model_load(weights=model_path, device=self.device)
        self.initUI()
        self.reset_vid()

    @torch.no_grad()
    def model_load(self,
                   weights="",  # model.pt path(s)
                   device='',  # cuda device, i.e. 0 or 0,1,2,3 or cpu
                   ):
        """
        Initialize the model.
        """
        device = self.device = select_device(device)
        half = device.type != 'cpu'  # half precision only supported on CUDA
        # Load model
        model = attempt_load(weights, map_location=device)  # load FP32 model
        self.stride = int(model.stride.max())  # model stride
        self.image_size = check_img_size(self.image_size, s=self.stride)  # check img_size
        if half:
            model.half()  # to FP16
        # Warm up: run inference once (GPU only)
        if device.type != 'cpu':
            print("Run inference")
            model(torch.zeros(1, 3, self.image_size, self.image_size).to(device).type_as(
                next(model.parameters())))  # run once
        print("模型加载完成!")
        return model

    def reset_vid(self):
        """
        Reset the video tab.
        """
        self.webcam_detection_btn.setEnabled(True)
        self.mp4_detection_btn.setEnabled(True)
        self.left_vid_img.setPixmap(QPixmap("./UI/up.jpeg"))
        self.vid_source = '0'
        self.disable_btn(self.det_img_button)
        self.disable_btn(self.vid_start_stop_btn)
        self.jump_threading = False

    def initUI(self):
        """
        Build the UI.
        """
        font_title = QFont('楷体', 16)
        font_main = QFont('楷体', 14)
        font_general = QFont('楷体', 10)

        # Image detection tab: two buttons, upload image and start detection
        img_detection_widget = QWidget()
        img_detection_layout = QVBoxLayout()
        img_detection_title = QLabel("图片识别功能")
        img_detection_title.setFont(font_title)
        mid_img_widget = QWidget()
        mid_img_layout = QHBoxLayout()
        self.left_img = QLabel()
        self.right_img = QLabel()
        self.left_img.setPixmap(QPixmap("./UI/up.jpeg"))
        self.right_img.setPixmap(QPixmap("./UI/right.jpeg"))
        self.left_img.setAlignment(Qt.AlignCenter)
        self.right_img.setAlignment(Qt.AlignCenter)
        self.left_img.setMinimumSize(480, 480)
        self.left_img.setStyleSheet("QLabel{background-color: #f6f8fa;}")
        mid_img_layout.addWidget(self.left_img)
        self.right_img.setMinimumSize(480, 480)
        self.right_img.setStyleSheet("QLabel{background-color: #f6f8fa;}")
        mid_img_layout.addStretch(0)
        mid_img_layout.addWidget(self.right_img)
        mid_img_widget.setLayout(mid_img_layout)
        self.up_img_button = QPushButton("上传图片")
        self.det_img_button = QPushButton("开始检测")
        self.up_img_button.clicked.connect(self.upload_img)
        self.det_img_button.clicked.connect(self.detect_img)
        self.up_img_button.setFont(font_main)
        self.det_img_button.setFont(font_main)
        self.up_img_button.setStyleSheet(BUTTON_STYLE)
        self.det_img_button.setStyleSheet(BUTTON_STYLE)
        img_detection_layout.addWidget(img_detection_title, alignment=Qt.AlignCenter)
        img_detection_layout.addWidget(mid_img_widget, alignment=Qt.AlignCenter)
        img_detection_layout.addWidget(self.up_img_button)
        img_detection_layout.addWidget(self.det_img_button)
        img_detection_widget.setLayout(img_detection_layout)

        # Video detection tab, laid out simply from top to bottom
        vid_detection_widget = QWidget()
        vid_detection_layout = QVBoxLayout()
        vid_title = QLabel("视频检测功能")
        vid_title.setFont(font_title)
        self.left_vid_img = QLabel()
        self.right_vid_img = QLabel()
        self.left_vid_img.setPixmap(QPixmap("./UI/up.jpeg"))
        self.right_vid_img.setPixmap(QPixmap("./UI/right.jpeg"))
        self.left_vid_img.setAlignment(Qt.AlignCenter)
        self.left_vid_img.setMinimumSize(480, 480)
        self.left_vid_img.setStyleSheet("QLabel{background-color: #f6f8fa;}")
        self.right_vid_img.setAlignment(Qt.AlignCenter)
        self.right_vid_img.setMinimumSize(480, 480)
        self.right_vid_img.setStyleSheet("QLabel{background-color: #f6f8fa;}")
        mid_img_widget = QWidget()
        mid_img_layout = QHBoxLayout()
        mid_img_layout.addWidget(self.left_vid_img)
        mid_img_layout.addStretch(0)
        mid_img_layout.addWidget(self.right_vid_img)
        mid_img_widget.setLayout(mid_img_layout)
        self.webcam_detection_btn = QPushButton("摄像头实时监测")
        self.mp4_detection_btn = QPushButton("视频文件检测")
        self.vid_start_stop_btn = QPushButton("启动/停止检测")
        self.webcam_detection_btn.setFont(font_main)
        self.mp4_detection_btn.setFont(font_main)
        self.vid_start_stop_btn.setFont(font_main)
        self.webcam_detection_btn.setStyleSheet(BUTTON_STYLE)
        self.mp4_detection_btn.setStyleSheet(BUTTON_STYLE)
        self.vid_start_stop_btn.setStyleSheet(BUTTON_STYLE)
        self.webcam_detection_btn.clicked.connect(self.open_cam)
        self.mp4_detection_btn.clicked.connect(self.open_mp4)
        self.vid_start_stop_btn.clicked.connect(self.start_or_stop)

        # FPS display
        fps_container = QWidget()
        fps_container.setStyleSheet("QWidget{background-color: #f6f8fa;}")
        fps_container_layout = QHBoxLayout()
        fps_container.setLayout(fps_container_layout)
        # Left container
        fps_left_container = QWidget()
        fps_left_container.setStyleSheet("QWidget{background-color: #f6f8fa;}")
        fps_left_container_layout = QHBoxLayout()
        fps_left_container.setLayout(fps_left_container_layout)
        # Right container
        fps_right_container = QWidget()
        fps_right_container.setStyleSheet("QWidget{background-color: #f6f8fa;}")
        fps_right_container_layout = QHBoxLayout()
        fps_right_container.setLayout(fps_right_container_layout)
        # Add the left and right containers to fps_container_layout
        fps_container_layout.addWidget(fps_left_container)
        fps_container_layout.addStretch(0)
        fps_container_layout.addWidget(fps_right_container)
        # Left container: raw (source) frame rate
        raw_fps_label = QLabel("原始帧率:")
        raw_fps_label.setFont(font_general)
        raw_fps_label.setAlignment(Qt.AlignLeft)
        raw_fps_label.setStyleSheet("QLabel{margin-left:80px}")
        self.raw_fps_value = QLabel("0")
        self.raw_fps_value.setFont(font_general)
        self.raw_fps_value.setAlignment(Qt.AlignLeft)
        fps_left_container_layout.addWidget(raw_fps_label)
        fps_left_container_layout.addWidget(self.raw_fps_value)
        # Right container: detection frame rate
        detect_fps_label = QLabel("检测帧率:")
        detect_fps_label.setFont(font_general)
        detect_fps_label.setAlignment(Qt.AlignRight)
        self.detect_fps_value = QLabel("0")
        self.detect_fps_value.setFont(font_general)
        self.detect_fps_value.setAlignment(Qt.AlignRight)
        self.detect_fps_value.setStyleSheet("QLabel{margin-right:96px}")
        fps_right_container_layout.addWidget(detect_fps_label)
        fps_right_container_layout.addWidget(self.detect_fps_value)
        # Add the widgets to the layout
        vid_detection_layout.addWidget(vid_title, alignment=Qt.AlignCenter)
        vid_detection_layout.addWidget(fps_container)
        vid_detection_layout.addWidget(mid_img_widget, alignment=Qt.AlignCenter)
        vid_detection_layout.addWidget(self.webcam_detection_btn)
        vid_detection_layout.addWidget(self.mp4_detection_btn)
        vid_detection_layout.addWidget(self.vid_start_stop_btn)
        vid_detection_widget.setLayout(vid_detection_layout)

        # About tab
        about_widget = QWidget()
        about_layout = QVBoxLayout()
        about_title = QLabel('欢迎使用目标检测系统\n\n 可以进行知识交流\n\n wx:sybh0117')  # edit the welcome text here
        about_title.setFont(QFont('楷体', 18))
        about_title.setAlignment(Qt.AlignCenter)
        about_img = QLabel()
        about_img.setPixmap(QPixmap('./UI/qq.png'))
        about_img.setAlignment(Qt.AlignCenter)
        label_super = QLabel()  # change the author information here
        label_super.setText("<a href='https://blog.csdn.net/m0_68036862?type=blog'>或者你可以在这里找到我-->mz</a>")
        label_super.setFont(QFont('楷体', 16))
        label_super.setOpenExternalLinks(True)
        label_super.setAlignment(Qt.AlignRight)
        about_layout.addWidget(about_title)
        about_layout.addStretch()
        about_layout.addWidget(about_img)
        about_layout.addStretch()
        about_layout.addWidget(label_super)
        about_widget.setLayout(about_layout)

        self.addTab(img_detection_widget, '图片检测')
        self.addTab(vid_detection_widget, '视频检测')
        self.addTab(about_widget, '联系我')
        self.setTabIcon(0, QIcon('./UI/lufei.png'))
        self.setTabIcon(1, QIcon('./UI/lufei.png'))

    def disable_btn(self, pushButton: QPushButton):
        pushButton.setDisabled(True)
        pushButton.setStyleSheet("QPushButton{background-color: rgb(2,110,180);}")

    def enable_btn(self, pushButton: QPushButton):
        pushButton.setEnabled(True)
        pushButton.setStyleSheet(
            "QPushButton{background-color: rgb(48,124,208);}"
            "QPushButton{color:white}"
        )

    @torch.no_grad()  # inference only: do not build autograd graphs
    def detect(self, source: str, left_img: QLabel, right_img: QLabel):
        """
        @param source: file/dir/URL/glob, 0 for webcam
        @param left_img: left QLabel, shows the input frame
        @param right_img: right QLabel, shows the detection result
        """
        model = self.model
        img_size = [self.image_size, self.image_size]  # inference size (pixels)
        conf_threshold = self.confidence  # confidence threshold
        iou_threshold = self.iou_threshold  # NMS IOU threshold
        device = self.device  # cuda device, i.e. 0 or 0,1,2,3 or cpu
        classes = None  # filter by class: --class 0, or --class 0 2 3
        agnostic_nms = False  # class-agnostic NMS
        augment = False  # augmented inference
        half = device.type != 'cpu'  # half precision only supported on CUDA
        if source == "":
            self.disable_btn(self.det_img_button)
            QMessageBox.warning(self, "请上传", "请先上传视频或图片再进行检测")
        else:
            source = str(source)
            webcam = source.isnumeric()
            # Set Dataloader
            if webcam:
                cudnn.benchmark = True  # set True to speed up constant image size inference
                dataset = LoadStreams(source, img_size=img_size, stride=self.stride)
            else:
                dataset = LoadImages(source, img_size=img_size, stride=self.stride)
            # Get names and colors
            names = model.module.names if hasattr(model, 'module') else model.names
            colors = [[random.randint(0, 255) for _ in range(3)] for _ in names]
            # Number of frames processed
            count = 0
            # Start time for the FPS measurement
            fps_start_time = time.time()
            for path, img, im0s, vid_cap in dataset:
                # Leave the loop, and thereby end the thread, when asked to stop
                if self.jump_threading:
                    # Clear the flag
                    self.jump_threading = False
                    break
                count += 1
                img = torch.from_numpy(img).to(device)
                img = img.half() if half else img.float()  # uint8 to fp16/32
                img /= 255.0  # 0 - 255 to 0.0 - 1.0
                if img.ndimension() == 3:
                    img = img.unsqueeze(0)
                # Inference
                t1 = time_synchronized()
                pred = model(img, augment=augment)[0]
                # Apply NMS
                pred = non_max_suppression(pred, conf_threshold, iou_threshold, classes=classes, agnostic=agnostic_nms)
                t2 = time_synchronized()
                # Process detections
                for i, det in enumerate(pred):  # detections per image
                    if webcam:  # batch_size >= 1
                        s, im0 = 'detect : ', im0s[i].copy()
                    else:
                        s, im0 = 'detect : ', im0s.copy()
                    if len(det):
                        # Rescale boxes from img_size to im0 size
                        det[:, :4] = scale_coords(img.shape[2:], det[:, :4], im0.shape).round()
                        # Print results
                        for c in det[:, -1].unique():
                            n = (det[:, -1] == c).sum()  # detections per class
                            s += f"{n} {names[int(c)]}{'s' * (n > 1)}, "  # add to string
                        # Write results
                        for *xyxy, conf, cls in reversed(det):
                            label = f'{names[int(cls)]} {conf:.2f}'
                            plot_one_box(xyxy, im0, label=label, color=colors[int(cls)], line_thickness=3)
                            # Overlay a warning when fire or smoke is found
                            if names[int(cls)] == "fire" or names[int(cls)] == "smoke":
                                im0 = cv2.putText(im0, "Warning", (50, 110), cv2.FONT_HERSHEY_SIMPLEX,
                                                  1, (0, 0, 255), 2, cv2.LINE_AA)
                    if webcam or vid_cap is not None:
                        # Show the raw frame on the left; a separate name keeps the input tensor `img` intact
                        raw = im0s[i] if webcam else im0s
                        show_img = self.resize_img(raw)
                        show_img = QImage(show_img.data, show_img.shape[1], show_img.shape[0],
                                          show_img.shape[2] * show_img.shape[1], QImage.Format_RGB888)
                        left_img.setPixmap(QPixmap.fromImage(show_img))
                        # Update the detection FPS every 10 frames
                        if count % 10 == 0:
                            fps = int(10 / (time.time() - fps_start_time))
                            self.detect_fps_value.setText(str(fps))
                            fps_start_time = time.time()
                    # Timestamp overlay
                    timenumber = time.strftime('%Y/%m/%d/-%H:%M:%S', time.localtime(time.time()))
                    im0 = cv2.putText(im0, timenumber, (50, 50), cv2.FONT_HERSHEY_SIMPLEX,
                                      1, (0, 255, 0), 2, cv2.LINE_AA)
                    im0 = cv2.putText(im0, s, (50, 80), cv2.FONT_HERSHEY_SIMPLEX,
                                      1, (255, 0, 0), 2, cv2.LINE_AA)
                    # Resize the annotated frame for display on the right
                    show_img = self.resize_img(im0)
                    show_img = QImage(show_img.data, show_img.shape[1], show_img.shape[0],
                                      show_img.shape[2] * show_img.shape[1], QImage.Format_RGB888)
                    right_img.setPixmap(QPixmap.fromImage(show_img))
                    # Print time (inference + NMS)
                    print(f'{s}Done. ({t2 - t1:.3f}s)')
            # Release the camera or video file when done
            if webcam:
                for cap in dataset.caps:
                    cap.release()
            elif dataset.cap:
                dataset.cap.release()

    def resize_img(self, img):
        """
        Resize an image to fit the display area and convert it from BGR to RGB.
        @param img: image to resize (BGR, as read by OpenCV)
        """
        resize_scale = min(self.output_size / img.shape[0], self.output_size / img.shape[1])
        img = cv2.resize(img, (0, 0), fx=resize_scale, fy=resize_scale)
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        return img

    def upload_img(self):
        """
        Upload an image and preview it on the left.
        """
        fileName, fileType = QFileDialog.getOpenFileName(self, 'Choose file', '', '*.jpg *.png *.tif *.jpeg')
        if not fileName:
            print("No file selected.")
            return
        # Check that the file exists
        if not os.path.exists(fileName):
            print("File does not exist:", fileName)
            return
        # Read the image file
        img = cv2.imread(fileName)
        if img is None:
            print(fileName, fileType)
            print("Error: Could not read image. Check file format or integrity.")
            return
        self.img2predict = fileName
        # Uploading and detecting are mutually exclusive: enable detect, disable upload
        self.enable_btn(self.det_img_button)
        self.disable_btn(self.up_img_button)
        # Resize and convert to QImage
        img = self.resize_img(img)
        height, width, channel = img.shape
        bytesPerLine = channel * width
        qImg = QImage(img.data, width, height, bytesPerLine, QImage.Format_RGB888)
        # Show the original on the left and reset the result on the right
        self.left_img.setPixmap(QPixmap.fromImage(qImg))
        self.right_img.setPixmap(QPixmap("./UI/right.jpeg"))

    def detect_img(self):
        """
        Run detection on the uploaded image.
        """
        # Reset the stop flag so a stale value set elsewhere cannot abort this run
        self.jump_threading = False
        self.detect(self.img2predict, self.left_img, self.right_img)
        # Uploading and detecting are mutually exclusive
        self.enable_btn(self.up_img_button)
        self.disable_btn(self.det_img_button)

    def open_mp4(self):
        """
        Handler: choose a video file for detection.
        """
        print("开启视频文件检测")
        fileName, fileType = QFileDialog.getOpenFileName(self, 'Choose file', '', '*.mp4 *.avi')
        if fileName:
            self.disable_btn(self.webcam_detection_btn)
            self.disable_btn(self.mp4_detection_btn)
            self.enable_btn(self.vid_start_stop_btn)
            # Open the video
            print(fileName)
            cap = cv2.VideoCapture(fileName)
            # Show the video's native frame rate
            fps = cap.get(cv2.CAP_PROP_FPS)
            self.raw_fps_value.setText(str(fps))
            # Read one frame to preview on the left
            ret, raw_img = cap.read() if cap.isOpened() else (False, None)
            cap.release()
            if not ret:
                QMessageBox.warning(self, "需要重新上传", "请重新选择视频文件")
                self.disable_btn(self.vid_start_stop_btn)
                self.enable_btn(self.webcam_detection_btn)
                self.enable_btn(self.mp4_detection_btn)
                return
            # Resize the preview frame for display
            img = self.resize_img(numpy.array(raw_img))
            img = QImage(img.data, img.shape[1], img.shape[0], img.shape[2] * img.shape[1], QImage.Format_RGB888)
            self.left_vid_img.setPixmap(QPixmap.fromImage(img))
            # Reset the result image on the right
            self.right_vid_img.setPixmap(QPixmap("./UI/right.jpeg"))
            self.vid_source = fileName
            self.jump_threading = False

    def open_cam(self):
        """
        Handler: open the webcam.
        """
        print("打开摄像头")
        self.disable_btn(self.webcam_detection_btn)
        self.disable_btn(self.mp4_detection_btn)
        self.enable_btn(self.vid_start_stop_btn)
        self.vid_source = "0"
        self.jump_threading = False
        # Open the camera
        cap = cv2.VideoCapture(0)
        # Show the camera's native frame rate
        fps = cap.get(cv2.CAP_PROP_FPS)
        self.raw_fps_value.setText(str(fps))
        # Read one frame to preview on the left
        ret, raw_img = cap.read() if cap.isOpened() else (False, None)
        cap.release()
        if not ret:
            QMessageBox.warning(self, "需要重新上传", "请重新选择视频文件")
            self.disable_btn(self.vid_start_stop_btn)
            self.enable_btn(self.webcam_detection_btn)
            self.enable_btn(self.mp4_detection_btn)
            return
        # Resize the preview frame for display
        img = self.resize_img(numpy.array(raw_img))
        img = QImage(img.data, img.shape[1], img.shape[0], img.shape[2] * img.shape[1], QImage.Format_RGB888)
        self.left_vid_img.setPixmap(QPixmap.fromImage(img))
        # Reset the result image on the right
        self.right_vid_img.setPixmap(QPixmap("./UI/right.jpeg"))

    def start_or_stop(self):
        """
        Handler: start or stop video detection.
        """
        print("启动或者停止")
        if self.threading is None:
            # Create and start a video detection thread
            self.jump_threading = False
            self.threading = threading.Thread(target=self.detect_vid)
            self.threading.start()
            self.disable_btn(self.webcam_detection_btn)
            self.disable_btn(self.mp4_detection_btn)
        else:
            # Stop the current thread: clear the thread attribute and restore the buttons
            self.threading = None
            self.jump_threading = True
            self.enable_btn(self.webcam_detection_btn)
            self.enable_btn(self.mp4_detection_btn)

    def detect_vid(self):
        """
        Video detection. Video files and the webcam share the same routine;
        only the source passed in differs.
        """
        print("视频开始检测")
        self.detect(self.vid_source, self.left_vid_img, self.right_vid_img)
        print("视频检测结束")
        # If self.threading is still set, the thread ended on its own (e.g. the video
        # finished) rather than being stopped by the user, so reset the state here.
        if self.threading is not None:
            self.start_or_stop()

    def closeEvent(self, event):
        """
        Handler: window close. Ask for confirmation and stop a running detection thread.
        """
        reply = QMessageBox.question(
            self,
            'quit',
            "Are you sure?",
            QMessageBox.Yes | QMessageBox.No,
            QMessageBox.No
        )
        if reply == QMessageBox.Yes:
            self.jump_threading = True  # ask a running detection thread to stop
            event.accept()
        else:
            event.ignore()


if __name__ == "__main__":
    app = QApplication(sys.argv)
    mainWindow = MainWindow()
    mainWindow.show()
    sys.exit(app.exec_())
```
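In `detect`, the YOLOv5 loaders letterbox each frame before inference: they resize it while keeping the aspect ratio, then pad it. The call `scale_coords(img.shape[2:], det[:, :4], im0.shape)` then maps the predicted boxes back onto the original frame. Below is a plain-Python sketch of that inverse mapping for one box, using the same gain-and-padding arithmetic. `scale_box` is a hypothetical name, and the real function works on whole tensors of boxes.

```python
def scale_box(box, net_shape, orig_shape):
    """Map (x1, y1, x2, y2) from the letterboxed network input back to the
    original frame. Both shapes are (height, width)."""
    net_h, net_w = net_shape
    orig_h, orig_w = orig_shape
    gain = min(net_h / orig_h, net_w / orig_w)  # resize ratio used by the letterbox
    pad_x = (net_w - orig_w * gain) / 2         # padding added on the left (and right)
    pad_y = (net_h - orig_h * gain) / 2         # padding added on the top (and bottom)
    x1, y1, x2, y2 = box
    x1, x2 = (x1 - pad_x) / gain, (x2 - pad_x) / gain
    y1, y2 = (y1 - pad_y) / gain, (y2 - pad_y) / gain
    # Clip to the original frame
    return (min(max(x1, 0.0), orig_w), min(max(y1, 0.0), orig_h),
            min(max(x2, 0.0), orig_w), min(max(y2, 0.0), orig_h))


# A 1280x720 frame letterboxed to a 640x384 network input (scale 0.5, 12 px padding top and bottom)
print(scale_box((100, 62, 300, 262), (384, 640), (720, 1280)))  # (200.0, 100.0, 600.0, 500.0)
```

The display path is separate: `resize_img` only scales the already annotated frame to fit the 480-pixel labels.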

Training code (train.py)

```python
import argparse
import logging
import math
import os
import random
import time
from copy import deepcopy
from pathlib import Path
from threading import Thread

import numpy as np
import torch.distributed as dist
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
import torch.optim.lr_scheduler as lr_scheduler
import torch.utils.data
import yaml
from torch.cuda import amp
from torch.nn.parallel import DistributedDataParallel as DDP
from torch.utils.tensorboard import SummaryWriter
from tqdm import tqdm

import test  # import test.py to get mAP after each epoch
from models.experimental import attempt_load
from models.yolo import Model
from utils.autoanchor import check_anchors
from utils.datasets import create_dataloader
from utils.general import labels_to_class_weights, increment_path, labels_to_image_weights, init_seeds, \
    fitness, strip_optimizer, get_latest_run, check_dataset, check_file, check_git_status, check_img_size, \
    check_requirements, print_mutation, set_logging, one_cycle, colorstr
from utils.google_utils import attempt_download
from utils.loss import ComputeLoss
from utils.plots import plot_images, plot_labels, plot_results, plot_evolution
from utils.torch_utils import ModelEMA, select_device, intersect_dicts, torch_distributed_zero_first, is_parallel
from utils.wandb_logging.wandb_utils import WandbLogger, check_wandb_resume

logger = logging.getLogger(__name__)


def train(hyp, opt, device, tb_writer=None):
    logger.info(colorstr('hyperparameters: ') + ', '.join(f'{k}={v}' for k, v in hyp.items()))
    save_dir, epochs, batch_size, total_batch_size, weights, rank = \
        Path(opt.save_dir), opt.epochs, opt.batch_size, opt.total_batch_size, opt.weights, opt.global_rank

    # Directories
    wdir = save_dir / 'weights'
    wdir.mkdir(parents=True, exist_ok=True)  # make dir
    last = wdir / 'last.pt'
    best = wdir / 'best.pt'
    results_file = save_dir / 'results.txt'

    # Save run settings
    with open(save_dir / 'hyp.yaml', 'w') as f:
        yaml.dump(hyp, f, sort_keys=False)
    with open(save_dir / 'opt.yaml', 'w') as f:
        yaml.dump(vars(opt), f, sort_keys=False)

    # Configure
    plots = not opt.evolve  # create plots
    cuda = device.type != 'cpu'
    init_seeds(2 + rank)
    with open(opt.data) as f:
        data_dict = yaml.load(f, Loader=yaml.SafeLoader)  # data dict
    is_coco = opt.data.endswith('coco.yaml')

    # Logging- Doing this before checking the dataset. Might update data_dict
    loggers = {'wandb': None}  # loggers dict
    if rank in [-1, 0]:
        opt.hyp = hyp  # add hyperparameters
        run_id = torch.load(weights).get('wandb_id') if weights.endswith('.pt') and os.path.isfile(weights) else None
        wandb_logger = WandbLogger(opt, Path(opt.save_dir).stem, run_id, data_dict)
        loggers['wandb'] = wandb_logger.wandb
        data_dict = wandb_logger.data_dict
        if wandb_logger.wandb:
            weights, epochs, hyp = opt.weights, opt.epochs, opt.hyp  # WandbLogger might update weights, epochs if resuming

    nc = 1 if opt.single_cls else int(data_dict['nc'])  # number of classes
    names = ['item'] if opt.single_cls and len(data_dict['names']) != 1 else data_dict['names']  # class names
    assert len(names) == nc, '%g names found for nc=%g dataset in %s' % (len(names), nc, opt.data)  # check

    # Model
    pretrained = weights.endswith('.pt')
    if pretrained:
        with torch_distributed_zero_first(rank):
            attempt_download(weights)  # download if not found locally
        ckpt = torch.load(weights, map_location=device)  # load checkpoint
        model = Model(opt.cfg or ckpt['model'].yaml, ch=3, nc=nc, anchors=hyp.get('anchors')).to(device)  # create
        exclude = ['anchor'] if (opt.cfg or hyp.get('anchors')) and not opt.resume else []  # exclude keys
        state_dict = ckpt['model'].float().state_dict()  # to FP32
        state_dict = intersect_dicts(state_dict, model.state_dict(), exclude=exclude)  # intersect
        model.load_state_dict(state_dict, strict=False)  # load
        logger.info('Transferred %g/%g items from %s' % (len(state_dict), len(model.state_dict()), weights))  # report
    else:
        model = Model(opt.cfg, ch=3, nc=nc, anchors=hyp.get('anchors')).to(device)  # create
    with torch_distributed_zero_first(rank):
        check_dataset(data_dict)  # check
    train_path = data_dict['train']
    test_path = data_dict['val']

    # Freeze
    freeze = []  # parameter names to freeze (full or partial)
    for k, v in model.named_parameters():
        v.requires_grad = True  # train all layers
        if any(x in k for x in freeze):
            print('freezing %s' % k)
            v.requires_grad = False

    # Optimizer
    nbs = 64  # nominal batch size
    accumulate = max(round(nbs / total_batch_size), 1)  # accumulate loss before optimizing
    hyp['weight_decay'] *= total_batch_size * accumulate / nbs  # scale weight_decay
    logger.info(f"Scaled weight_decay = {hyp['weight_decay']}")

    pg0, pg1, pg2 = [], [], []  # optimizer parameter groups
    for k, v in model.named_modules():
        if hasattr(v, 'bias') and isinstance(v.bias, nn.Parameter):
            pg2.append(v.bias)  # biases
        if isinstance(v, nn.BatchNorm2d):
            pg0.append(v.weight)  # no decay
        elif hasattr(v, 'weight') and isinstance(v.weight, nn.Parameter):
            pg1.append(v.weight)  # apply decay

    if opt.adam:
        optimizer = optim.Adam(pg0, lr=hyp['lr0'], betas=(hyp['momentum'], 0.999))  # adjust beta1 to momentum
    else:
        optimizer = optim.SGD(pg0, lr=hyp['lr0'], momentum=hyp['momentum'], nesterov=True)

    optimizer.add_param_group({'params': pg1, 'weight_decay': hyp['weight_decay']})  # add pg1 with weight_decay
    optimizer.add_param_group({'params': pg2})  # add pg2 (biases)
    logger.info('Optimizer groups: %g .bias, %g conv.weight, %g other' % (len(pg2), len(pg1), len(pg0)))
    del pg0, pg1, pg2

    # Scheduler https://arxiv.org/pdf/1812.01187.pdf
    # https://pytorch.org/docs/stable/_modules/torch/optim/lr_scheduler.html#OneCycleLR
    if opt.linear_lr:
        lf = lambda x: (1 - x / (epochs - 1)) * (1.0 - hyp['lrf']) + hyp['lrf']  # linear
    else:
        lf = one_cycle(1, hyp['lrf'], epochs)  # cosine 1->hyp['lrf']
    scheduler = lr_scheduler.LambdaLR(optimizer, lr_lambda=lf)
    # plot_lr_scheduler(optimizer, scheduler, epochs)

    # EMA
    ema = ModelEMA(model) if rank in [-1, 0] else None

    # Resume
    start_epoch, best_fitness = 0, 0.0
    if pretrained:
        # Optimizer
        if ckpt['optimizer'] is not None:
            optimizer.load_state_dict(ckpt['optimizer'])
            best_fitness = ckpt['best_fitness']

        # EMA
        if ema and ckpt.get('ema'):
            ema.ema.load_state_dict(ckpt['ema'].float().state_dict())
            ema.updates = ckpt['updates']

        # Results
        if ckpt.get('training_results') is not None:
            results_file.write_text(ckpt['training_results'])  # write results.txt

        # Epochs
        start_epoch = ckpt['epoch'] + 1
        if opt.resume:
            assert start_epoch > 0, '%s training to %g epochs is finished, nothing to resume.' % (weights, epochs)
        if epochs < start_epoch:
            logger.info('%s has been trained for %g epochs. Fine-tuning for %g additional epochs.' %
                        (weights, ckpt['epoch'], epochs))
            epochs += ckpt['epoch']  # finetune additional epochs

        del ckpt, state_dict

    # Image sizes
    gs = max(int(model.stride.max()), 32)  # grid size (max stride)
    nl = model.model[-1].nl  # number of detection layers (used for scaling hyp['obj'])
    imgsz, imgsz_test = [check_img_size(x, gs) for x in opt.img_size]  # verify imgsz are gs-multiples

    # DP mode
    if cuda and rank == -1 and torch.cuda.device_count() > 1:
        model = torch.nn.DataParallel(model)

    # SyncBatchNorm
    if opt.sync_bn and cuda and rank != -1:
        model = torch.nn.SyncBatchNorm.convert_sync_batchnorm(model).to(device)
        logger.info('Using SyncBatchNorm()')

    # Trainloader
    dataloader, dataset = create_dataloader(train_path, imgsz, batch_size, gs, opt,
                                            hyp=hyp, augment=True, cache=opt.cache_images, rect=opt.rect, rank=rank,
                                            world_size=opt.world_size, workers=opt.workers,
                                            image_weights=opt.image_weights, quad=opt.quad, prefix=colorstr('train: '))
    mlc = np.concatenate(dataset.labels, 0)[:, 0].max()  # max label class
    nb = len(dataloader)  # number of batches
    assert mlc < nc, 'Label class %g exceeds nc=%g in %s. Possible class labels are 0-%g' % (mlc, nc, opt.data, nc - 1)

    # Process 0
    if rank in [-1, 0]:
        testloader = create_dataloader(test_path, imgsz_test, batch_size * 2, gs, opt,  # testloader
                                       hyp=hyp, cache=opt.cache_images and not opt.notest, rect=True, rank=-1,
                                       world_size=opt.world_size, workers=opt.workers,
                                       pad=0.5, prefix=colorstr('val: '))[0]

        if not opt.resume:
            labels = np.concatenate(dataset.labels, 0)
            c = torch.tensor(labels[:, 0])  # classes
            # cf = torch.bincount(c.long(), minlength=nc) + 1.  # frequency
            # model._initialize_biases(cf.to(device))
            if plots:
                plot_labels(labels, names, save_dir, loggers)
                if tb_writer:
                    tb_writer.add_histogram('classes', c, 0)

            # Anchors
            if not opt.noautoanchor:
                check_anchors(dataset, model=model, thr=hyp['anchor_t'], imgsz=imgsz)
            model.half().float()  # pre-reduce anchor precision

    # DDP mode
    if cuda and rank != -1:
        model = DDP(model, device_ids=[opt.local_rank], output_device=opt.local_rank,
                    # nn.MultiheadAttention incompatibility with DDP https://github.com/pytorch/pytorch/issues/26698
                    find_unused_parameters=any(isinstance(layer, nn.MultiheadAttention) for layer in model.modules()))

    # Model parameters
    hyp['box'] *= 3. / nl  # scale to layers
    hyp['cls'] *= nc / 80. * 3. / nl  # scale to classes and layers
    hyp['obj'] *= (imgsz / 640) ** 2 * 3. / nl  # scale to image size and layers
    hyp['label_smoothing'] = opt.label_smoothing
    model.nc = nc  # attach number of classes to model
    model.hyp = hyp  # attach hyperparameters to model
    model.gr = 1.0  # iou loss ratio (obj_loss = 1.0 or iou)
    model.class_weights = labels_to_class_weights(dataset.labels, nc).to(device) * nc  # attach class weights
    model.names = names

    # Start training
    t0 = time.time()
    nw = max(round(hyp['warmup_epochs'] * nb), 1000)  # number of warmup iterations, max(3 epochs, 1k iterations)
    # nw = min(nw, (epochs - start_epoch) / 2 * nb)  # limit warmup to < 1/2 of training
    maps = np.zeros(nc)  # mAP per class
    results = (0, 0, 0, 0, 0, 0, 0)  # P, R, mAP@.5, mAP@.5-.95, val_loss(box, obj, cls)
    scheduler.last_epoch = start_epoch - 1  # do not move
    scaler = amp.GradScaler(enabled=cuda)
    compute_loss = ComputeLoss(model)  # init loss class
    logger.info(f'Image sizes {imgsz} train, {imgsz_test} test\n'
                f'Using {dataloader.num_workers} dataloader workers\n'
                f'Logging results to {save_dir}\n'
                f'Starting training for {epochs} epochs...')
    for epoch in range(start_epoch, epochs):  # epoch ------------------------------------------------------------------
        model.train()

        # Update image weights (optional)
        if opt.image_weights:
            # Generate indices
            if rank in [-1, 0]:
                cw = model.class_weights.cpu().numpy() * (1 - maps) ** 2 / nc  # class weights
                iw = labels_to_image_weights(dataset.labels, nc=nc, class_weights=cw)  # image weights
                dataset.indices = random.choices(range(dataset.n), weights=iw, k=dataset.n)  # rand weighted idx
            # Broadcast if DDP
            if rank != -1:
                indices = (torch.tensor(dataset.indices) if rank == 0 else torch.zeros(dataset.n)).int()
                dist.broadcast(indices, 0)
                if rank != 0:
                    dataset.indices = indices.cpu().numpy()

        # Update mosaic border
        # b = int(random.uniform(0.25 * imgsz, 0.75 * imgsz + gs) // gs * gs)
        # dataset.mosaic_border = [b - imgsz, -b]  # height, width borders

        mloss = torch.zeros(4, device=device)  # mean losses
        if rank != -1:
            dataloader.sampler.set_epoch(epoch)
        pbar = enumerate(dataloader)
        logger.info(('\n' + '%10s' * 8) % ('Epoch', 'gpu_mem', 'box', 'obj', 'cls', 'total', 'labels', 'img_size'))
        if rank in [-1, 0]:
            pbar = tqdm(pbar, total=nb)  # progress bar
        optimizer.zero_grad()
        for i, (imgs, targets, paths, _) in pbar:  # batch -------------------------------------------------------------
            ni = i + nb * epoch  # number integrated batches (since train start)
            imgs = imgs.to(device, non_blocking=True).float() / 255.0  # uint8 to float32, 0-255 to 0.0-1.0

            # Warmup
            if ni <= nw:
                xi = [0, nw]  # x interp
                # model.gr = np.interp(ni, xi, [0.0, 1.0])  # iou loss ratio (obj_loss = 1.0 or iou)
                accumulate = max(1, np.interp(ni, xi, [1, nbs / total_batch_size]).round())
                for j, x in enumerate(optimizer.param_groups):
                    # bias lr falls from 0.1 to lr0, all other lrs rise from 0.0 to lr0
                    x['lr'] = np.interp(ni, xi, [hyp['warmup_bias_lr'] if j == 2 else 0.0, x['initial_lr'] * lf(epoch)])
                    if 'momentum' in x:
                        x['momentum'] = np.interp(ni, xi, [hyp['warmup_momentum'], hyp['momentum']])

            # Multi-scale
            if opt.multi_scale:
                # int bounds: random.randrange rejects float arguments from Python 3.12 (deprecated since 3.10)
                sz = random.randrange(int(imgsz * 0.5), int(imgsz * 1.5) + gs) // gs * gs  # size
                sf = sz / max(imgs.shape[2:])  # scale factor
                if sf != 1:
                    ns = [math.ceil(x * sf / gs) * gs for x in imgs.shape[2:]]  # new shape (stretched to gs-multiple)
```

imgs = F.interpolate(imgs, size=ns, mode='bilinear', align_corners=False)

# Forward

with amp.autocast(enabled=cuda):

pred = model(imgs) # forward

loss, loss_items = compute_loss(pred, targets.to(device)) # loss scaled by batch_size

if rank != -1:

loss *= opt.world_size # gradient averaged between devices in DDP mode

if opt.quad:

loss *= 4.

# Backward

scaler.scale(loss).backward()

# Optimize

if ni % accumulate == 0:

scaler.step(optimizer) # optimizer.step

scaler.update()

optimizer.zero_grad()

if ema:

ema.update(model)

# Print

if rank in [-1, 0]:

mloss = (mloss * i + loss_items) / (i + 1) # update mean losses

mem = '%.3gG' % (torch.cuda.memory_reserved() / 1E9 if torch.cuda.is_available() else 0) # (GB)

s = ('%10s' * 2 + '%10.4g' * 6) % (

'%g/%g' % (epoch, epochs - 1), mem, *mloss, targets.shape[0], imgs.shape[-1])

pbar.set_description(s)

# Plot

if plots and ni < 3:

f = save_dir / f'train_batch{ni}.jpg' # filename

Thread(target=plot_images, args=(imgs, targets, paths, f), daemon=True).start()

# if tb_writer:

# tb_writer.add_image(f, result, dataformats='HWC', global_step=epoch)

# tb_writer.add_graph(torch.jit.trace(model, imgs, strict=False), []) # add model graph

elif plots and ni == 10 and wandb_logger.wandb:

wandb_logger.log({"Mosaics": [wandb_logger.wandb.Image(str(x), caption=x.name) for x in

save_dir.glob('train*.jpg') if x.exists()]})

# end batch ------------------------------------------------------------------------------------------------

# end epoch ----------------------------------------------------------------------------------------------------

# Scheduler

lr = [x['lr'] for x in optimizer.param_groups] # for tensorboard

scheduler.step()

# DDP process 0 or single-GPU

if rank in [-1, 0]:

# mAP

ema.update_attr(model, include=['yaml', 'nc', 'hyp', 'gr', 'names', 'stride', 'class_weights'])

final_epoch = epoch + 1 == epochs

if not opt.notest or final_epoch: # Calculate mAP

wandb_logger.current_epoch = epoch + 1

results, maps, times = test.test(data_dict,

batch_size=batch_size * 2,

imgsz=imgsz_test,

model=ema.ema,

single_cls=opt.single_cls,

dataloader=testloader,

save_dir=save_dir,

verbose=nc < 50 and final_epoch,

plots=plots and final_epoch,

wandb_logger=wandb_logger,

compute_loss=compute_loss,

is_coco=is_coco)

# Write

with open(results_file, 'a') as f:

f.write(s + '%10.4g' * 7 % results + '\n') # append metrics, val_loss

if len(opt.name) and opt.bucket:

os.system('gsutil cp %s gs://%s/results/results%s.txt' % (results_file, opt.bucket, opt.name))

# Log

tags = ['train/box_loss', 'train/obj_loss', 'train/cls_loss', # train loss

'metrics/precision', 'metrics/recall', 'metrics/mAP_0.5', 'metrics/mAP_0.5:0.95',

'val/box_loss', 'val/obj_loss', 'val/cls_loss', # val loss

'x/lr0', 'x/lr1', 'x/lr2'] # params

for x, tag in zip(list(mloss[:-1]) + list(results) + lr, tags):

if tb_writer:

tb_writer.add_scalar(tag, x, epoch) # tensorboard

if wandb_logger.wandb:

wandb_logger.log({tag: x}) # W&B

# Update best mAP

fi = fitness(np.array(results).reshape(1, -1)) # weighted combination of [P, R, mAP@.5, mAP@.5-.95]

if fi > best_fitness:

best_fitness = fi

wandb_logger.end_epoch(best_result=best_fitness == fi)

# Save model

if (not opt.nosave) or (final_epoch and not opt.evolve): # if save

ckpt = {'epoch': epoch,

'best_fitness': best_fitness,

'training_results': results_file.read_text(),

'model': deepcopy(model.module if is_parallel(model) else model).half(),

'ema': deepcopy(ema.ema).half(),

'updates': ema.updates,

'optimizer': optimizer.state_dict(),

'wandb_id': wandb_logger.wandb_run.id if wandb_logger.wandb else None}

# Save last, best and delete

torch.save(ckpt, last)

if best_fitness == fi:

torch.save(ckpt, best)

if wandb_logger.wandb:

if ((epoch + 1) % opt.save_period == 0 and not final_epoch) and opt.save_period != -1:

wandb_logger.log_model(

last.parent, opt, epoch, fi, best_model=best_fitness == fi)

del ckpt

# end epoch ----------------------------------------------------------------------------------------------------

# end training

if rank in [-1, 0]:

# Plots

if plots:

plot_results(save_dir=save_dir) # save as results.png

if wandb_logger.wandb:

files = ['results.png', 'confusion_matrix.png', *[f'{x}_curve.png' for x in ('F1', 'PR', 'P', 'R')]]

wandb_logger.log({"Results": [wandb_logger.wandb.Image(str(save_dir / f), caption=f) for f in files

if (save_dir / f).exists()]})

# Test best.pt

logger.info('%g epochs completed in %.3f hours.\n' % (epoch - start_epoch + 1, (time.time() - t0) / 3600))

if opt.data.endswith('coco.yaml') and nc == 80: # if COCO

for m in (last, best) if best.exists() else (last): # speed, mAP tests

results, _, _ = test.test(opt.data,

batch_size=batch_size * 2,

imgsz=imgsz_test,

conf_thres=0.001,

iou_thres=0.7,

model=attempt_load(m, device).half(),

single_cls=opt.single_cls,

dataloader=testloader,

save_dir=save_dir,

save_json=True,

plots=False,

is_coco=is_coco)

# Strip optimizers

final = best if best.exists() else last # final model

for f in last, best:

if f.exists():

strip_optimizer(f) # strip optimizers

if opt.bucket:

os.system(f'gsutil cp {final} gs://{opt.bucket}/weights') # upload

if wandb_logger.wandb and not opt.evolve: # Log the stripped model

wandb_logger.wandb.log_artifact(str(final), type='model',

name='run_' + wandb_logger.wandb_run.id + '_model',

aliases=['last', 'best', 'stripped'])

wandb_logger.finish_run()

else:

dist.destroy_process_group()

torch.cuda.empty_cache()

return results

if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--weights', type=str, default='weights/yolov5s.pt', help='initial weights path')
    parser.add_argument('--cfg', type=str, default='models/yolov5s.yaml', help='model.yaml path')
    parser.add_argument('--data', type=str, default='data/voc.yaml', help='data.yaml path')
    parser.add_argument('--hyp', type=str, default='data/hyp.scratch.yaml', help='hyperparameters path')
    parser.add_argument('--epochs', type=int, default=300)
    parser.add_argument('--batch-size', type=int, default=16, help='total batch size for all GPUs')
    parser.add_argument('--img-size', nargs='+', type=int, default=[640, 640], help='[train, test] image sizes')
    parser.add_argument('--rect', action='store_true', help='rectangular training')
    parser.add_argument('--resume', nargs='?', const=True, default=False, help='resume most recent training')
    parser.add_argument('--nosave', action='store_true', help='only save final checkpoint')
    parser.add_argument('--notest', action='store_true', help='only test final epoch')
    parser.add_argument('--noautoanchor', action='store_true', help='disable autoanchor check')
    parser.add_argument('--evolve', action='store_true', help='evolve hyperparameters')
    parser.add_argument('--bucket', type=str, default='', help='gsutil bucket')
    parser.add_argument('--cache-images', action='store_true', help='cache images for faster training')
    parser.add_argument('--image-weights', action='store_true', help='use weighted image selection for training')
    parser.add_argument('--device', default='', help='cuda device, i.e. 0 or 0,1,2,3 or cpu')
    parser.add_argument('--multi-scale', action='store_true', help='vary img-size +/- 50%%')
    parser.add_argument('--single-cls', action='store_true', help='train multi-class data as single-class')
    parser.add_argument('--adam', action='store_true', help='use torch.optim.Adam() optimizer')
    parser.add_argument('--sync-bn', action='store_true', help='use SyncBatchNorm, only available in DDP mode')
    parser.add_argument('--local_rank', type=int, default=-1, help='DDP parameter, do not modify')
    parser.add_argument('--workers', type=int, default=0, help='maximum number of dataloader workers')
    parser.add_argument('--project', default='runs/train', help='save to project/name')
    parser.add_argument('--entity', default=None, help='W&B entity')
    parser.add_argument('--name', default='exp', help='save to project/name')
    parser.add_argument('--exist-ok', action='store_true', help='existing project/name ok, do not increment')
    parser.add_argument('--quad', action='store_true', help='quad dataloader')
    parser.add_argument('--linear-lr', action='store_true', help='linear LR')
    parser.add_argument('--label-smoothing', type=float, default=0.0, help='Label smoothing epsilon')
    parser.add_argument('--upload_dataset', action='store_true', help='Upload dataset as W&B artifact table')
    parser.add_argument('--bbox_interval', type=int, default=-1, help='Set bounding-box image logging interval for W&B')
    parser.add_argument('--save_period', type=int, default=-1, help='Log model after every "save_period" epoch')
    parser.add_argument('--artifact_alias', type=str, default="latest", help='version of dataset artifact to be used')
    opt = parser.parse_args()

    # Set DDP variables
    opt.world_size = int(os.environ['WORLD_SIZE']) if 'WORLD_SIZE' in os.environ else 1
    opt.global_rank = int(os.environ['RANK']) if 'RANK' in os.environ else -1
    set_logging(opt.global_rank)
    if opt.global_rank in [-1, 0]:
        check_git_status()
        check_requirements()

    # Resume
    wandb_run = check_wandb_resume(opt)
    if opt.resume and not wandb_run:  # resume an interrupted run
        ckpt = opt.resume if isinstance(opt.resume, str) else get_latest_run()  # specified or most recent path
        assert os.path.isfile(ckpt), 'ERROR: --resume checkpoint does not exist'
        apriori = opt.global_rank, opt.local_rank
        with open(Path(ckpt).parent.parent / 'opt.yaml') as f:
            opt = argparse.Namespace(**yaml.load(f, Loader=yaml.SafeLoader))  # replace
        opt.cfg, opt.weights, opt.resume, opt.batch_size, opt.global_rank, opt.local_rank = '', ckpt, True, opt.total_batch_size, *apriori  # reinstate
        logger.info('Resuming training from %s' % ckpt)
    else:
        # opt.hyp = opt.hyp or ('hyp.finetune.yaml' if opt.weights else 'hyp.scratch.yaml')
        opt.data, opt.cfg, opt.hyp = check_file(opt.data), check_file(opt.cfg), check_file(opt.hyp)  # check files
        assert len(opt.cfg) or len(opt.weights), 'either --cfg or --weights must be specified'
        opt.img_size.extend([opt.img_size[-1]] * (2 - len(opt.img_size)))  # extend to 2 sizes (train, test)
        opt.name = 'evolve' if opt.evolve else opt.name
        opt.save_dir = increment_path(Path(opt.project) / opt.name, exist_ok=opt.exist_ok | opt.evolve)  # increment run

    # DDP mode
    opt.total_batch_size = opt.batch_size
    device = select_device(opt.device, batch_size=opt.batch_size)
    if opt.local_rank != -1:
        assert torch.cuda.device_count() > opt.local_rank
        torch.cuda.set_device(opt.local_rank)
        device = torch.device('cuda', opt.local_rank)
        dist.init_process_group(backend='nccl', init_method='env://')  # distributed backend
        assert opt.batch_size % opt.world_size == 0, '--batch-size must be multiple of CUDA device count'
        opt.batch_size = opt.total_batch_size // opt.world_size

    # Hyperparameters
    with open(opt.hyp) as f:
        hyp = yaml.load(f, Loader=yaml.SafeLoader)  # load hyps

    # Train
    logger.info(opt)
    if not opt.evolve:
        tb_writer = None  # init loggers
        if opt.global_rank in [-1, 0]:
            prefix = colorstr('tensorboard: ')
            logger.info(f"{prefix}Start with 'tensorboard --logdir {opt.project}', view at http://localhost:6006/")
            tb_writer = SummaryWriter(opt.save_dir)  # Tensorboard
        train(hyp, opt, device, tb_writer)

    # Evolve hyperparameters (optional)
    else:
        # Hyperparameter evolution metadata (mutation scale 0-1, lower_limit, upper_limit)
        meta = {'lr0': (1, 1e-5, 1e-1),  # initial learning rate (SGD=1E-2, Adam=1E-3)
                'lrf': (1, 0.01, 1.0),  # final OneCycleLR learning rate (lr0 * lrf)
                'momentum': (0.3, 0.6, 0.98),  # SGD momentum/Adam beta1
                'weight_decay': (1, 0.0, 0.001),  # optimizer weight decay
                'warmup_epochs': (1, 0.0, 5.0),  # warmup epochs (fractions ok)
                'warmup_momentum': (1, 0.0, 0.95),  # warmup initial momentum
                'warmup_bias_lr': (1, 0.0, 0.2),  # warmup initial bias lr
                'box': (1, 0.02, 0.2),  # box loss gain
                'cls': (1, 0.2, 4.0),  # cls loss gain
                'cls_pw': (1, 0.5, 2.0),  # cls BCELoss positive_weight
                'obj': (1, 0.2, 4.0),  # obj loss gain (scale with pixels)
                'obj_pw': (1, 0.5, 2.0),  # obj BCELoss positive_weight
                'iou_t': (0, 0.1, 0.7),  # IoU training threshold
                'anchor_t': (1, 2.0, 8.0),  # anchor-multiple threshold
                'anchors': (2, 2.0, 10.0),  # anchors per output grid (0 to ignore)
                'fl_gamma': (0, 0.0, 2.0),  # focal loss gamma (efficientDet default gamma=1.5)
                'hsv_h': (1, 0.0, 0.1),  # image HSV-Hue augmentation (fraction)
                'hsv_s': (1, 0.0, 0.9),  # image HSV-Saturation augmentation (fraction)
                'hsv_v': (1, 0.0, 0.9),  # image HSV-Value augmentation (fraction)
                'degrees': (1, 0.0, 45.0),  # image rotation (+/- deg)
                'translate': (1, 0.0, 0.9),  # image translation (+/- fraction)
                'scale': (1, 0.0, 0.9),  # image scale (+/- gain)
                'shear': (1, 0.0, 10.0),  # image shear (+/- deg)
                'perspective': (0, 0.0, 0.001),  # image perspective (+/- fraction), range 0-0.001
                'flipud': (1, 0.0, 1.0),  # image flip up-down (probability)
                'fliplr': (0, 0.0, 1.0),  # image flip left-right (probability)
                'mosaic': (1, 0.0, 1.0),  # image mosaic (probability)
                'mixup': (1, 0.0, 1.0)}  # image mixup (probability)

        assert opt.local_rank == -1, 'DDP mode not implemented for --evolve'
        opt.notest, opt.nosave = True, True  # only test/save final epoch
        # ei = [isinstance(x, (int, float)) for x in hyp.values()]  # evolvable indices
        yaml_file = Path(opt.save_dir) / 'hyp_evolved.yaml'  # save best result here
        if opt.bucket:
            os.system('gsutil cp gs://%s/evolve.txt .' % opt.bucket)  # download evolve.txt if exists

        for _ in range(300):  # generations to evolve
            if Path('evolve.txt').exists():  # if evolve.txt exists: select best hyps and mutate
                # Select parent(s)
                parent = 'single'  # parent selection method: 'single' or 'weighted'
                x = np.loadtxt('evolve.txt', ndmin=2)
                n = min(5, len(x))  # number of previous results to consider
                x = x[np.argsort(-fitness(x))][:n]  # top n mutations
                w = fitness(x) - fitness(x).min() + 1E-6  # weights (+1E-6 keeps the sum > 0 for random.choices)
                if parent == 'single' or len(x) == 1:
                    # x = x[random.randint(0, n - 1)]  # random selection
                    x = x[random.choices(range(n), weights=w)[0]]  # weighted selection
                elif parent == 'weighted':
                    x = (x * w.reshape(n, 1)).sum(0) / w.sum()  # weighted combination

                # Mutate
                mp, s = 0.8, 0.2  # mutation probability, sigma
                npr = np.random
                npr.seed(int(time.time()))
                g = np.array([x[0] for x in meta.values()])  # gains 0-1
                ng = len(meta)
                v = np.ones(ng)
                while all(v == 1):  # mutate until a change occurs (prevent duplicates)
                    v = (g * (npr.random(ng) < mp) * npr.randn(ng) * npr.random() * s + 1).clip(0.3, 3.0)
                for i, k in enumerate(hyp.keys()):  # plt.hist(v.ravel(), 300)
                    hyp[k] = float(x[i + 7] * v[i])  # mutate

            # Constrain to limits
            for k, v in meta.items():
                hyp[k] = max(hyp[k], v[1])  # lower limit
                hyp[k] = min(hyp[k], v[2])  # upper limit
                hyp[k] = round(hyp[k], 5)  # significant digits

            # Train mutation
            results = train(hyp.copy(), opt, device)

            # Write mutation results
            print_mutation(hyp.copy(), results, yaml_file, opt.bucket)

        # Plot results
        plot_evolution(yaml_file)
        print(f'Hyperparameter evolution complete. Best results saved as: {yaml_file}\n'
              f'Command to train a new model with these hyperparameters: $ python train.py --hyp {yaml_file}')
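The warmup block in the batch loop of `train()` linearly interpolates three quantities with `np.interp` over the first `nw` iterations: the gradient-accumulation count, the learning rate of each parameter group (the bias group, index 2, falls while the others rise), and the momentum. The sketch below isolates that arithmetic so it can be checked without a model. It is a minimal illustration under assumptions: `warmup_values` is a hypothetical helper, `target_lr` stands in for `x['initial_lr'] * lf(epoch)`, and the defaults (`warmup_bias_lr=0.1`, `warmup_momentum=0.8`, `momentum=0.937`, `nbs=64`, batch size 16) are assumed typical YOLOv5 values, not read from this project's files.

```python
import numpy as np


def warmup_values(ni, nw, target_lr, warmup_bias_lr=0.1, warmup_momentum=0.8,
                  momentum=0.937, nbs=64, total_batch_size=16):
    """Hypothetical helper: warmup state at integrated batch index ni (0 <= ni <= nw).

    Returns (accumulate, [lr for param groups 0, 1, 2], momentum), following the
    same np.interp calls as the warmup block in train.py.
    """
    xi = [0, nw]  # interpolation x-range: start and end of warmup
    # Accumulate more batches as warmup proceeds, up to nbs / total_batch_size
    accumulate = max(1, np.interp(ni, xi, [1, nbs / total_batch_size]).round())
    # Group 2 (biases) starts at warmup_bias_lr; groups 0 and 1 start at 0.0
    lrs = [float(np.interp(ni, xi, [warmup_bias_lr if j == 2 else 0.0, target_lr]))
           for j in range(3)]
    mom = float(np.interp(ni, xi, [warmup_momentum, momentum]))
    return int(accumulate), lrs, mom


for ni in (0, 500, 1000):
    print(ni, warmup_values(ni, nw=1000, target_lr=0.01))
```

Note that NumPy's `round()` rounds halves to even, so halfway through warmup with these numbers the interpolated 2.5 becomes an `accumulate` of 2, not 3.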
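With `--multi-scale`, each batch is resized to a random size between roughly 0.5x and 1.5x `imgsz`: the target size is snapped down to a multiple of the grid stride `gs`, and both image dimensions are then scaled and rounded up to a multiple of `gs` so the feature maps divide evenly. The following is a minimal sketch of only that shape computation, assuming `gs=32` (in `train.py` the stride comes from the model); `multi_scale_shape` is a hypothetical name. It passes integer bounds to `random.randrange`, since newer Python versions reject float arguments there.

```python
import math
import random


def multi_scale_shape(img_hw, imgsz=640, gs=32, rng=random):
    """Hypothetical helper: new (h, w) for one multi-scale step.

    img_hw: current (height, width); gs: assumed grid stride.
    """
    # Random target size in [0.5 * imgsz, 1.5 * imgsz + gs), snapped down to a gs multiple
    sz = rng.randrange(int(imgsz * 0.5), int(imgsz * 1.5) + gs) // gs * gs
    sf = sz / max(img_hw)  # scale factor relative to the longer side
    if sf == 1:
        return list(img_hw)  # no resize needed
    # Scale each side and round up to a multiple of gs
    return [math.ceil(x * sf / gs) * gs for x in img_hw]


print(multi_scale_shape((640, 640), rng=random.Random(0)))
```

In the training loop the resulting shape is passed to `F.interpolate(..., mode='bilinear')`; the sketch stops before that step so it runs without PyTorch.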
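In `--evolve` mode, each generation multiplies the parent's hyperparameters by random factors scaled by the per-key gain in `meta` (first tuple entry), clips those factors to [0.3, 3.0], then clamps every value to its `meta` lower and upper limits and rounds to 5 decimals. A key with gain 0 (for example `iou_t`) is never mutated. The sketch below reproduces that step on a plain dict under stated assumptions: `mutate` is a hypothetical helper, it uses NumPy's `default_rng` instead of re-seeding the global `np.random` from the clock, it reads the parent from a dict rather than from row `x[i + 7]` of `evolve.txt`, and it adds a guard against looping forever when no key has a non-zero gain.

```python
import numpy as np


def mutate(parent, meta, mp=0.8, s=0.2, seed=None):
    """Hypothetical helper: one mutation step in the style of train.py --evolve.

    parent: {name: value}; meta: {name: (gain, lower_limit, upper_limit)}.
    mp: per-key mutation probability; s: sigma of the multiplicative noise.
    """
    rng = np.random.default_rng(seed)
    keys = list(meta)
    g = np.array([meta[k][0] for k in keys])  # gains 0-1 (0 = frozen)
    ng = len(keys)
    v = np.ones(ng)
    if np.any(g > 0):  # guard: without any mutable key the loop below would never end
        while np.all(v == 1):  # re-draw until at least one factor changes
            v = (g * (rng.random(ng) < mp) * rng.standard_normal(ng) * rng.random() * s + 1).clip(0.3, 3.0)
    child = {}
    for i, k in enumerate(keys):
        _, lower, upper = meta[k]
        value = float(parent[k] * v[i])  # mutate
        child[k] = round(min(max(value, lower), upper), 5)  # constrain to limits, 5 decimals
    return child


meta = {'lr0': (1, 1e-5, 1e-1), 'momentum': (0.3, 0.6, 0.98), 'iou_t': (0, 0.1, 0.7)}
print(mutate({'lr0': 0.01, 'momentum': 0.937, 'iou_t': 0.2}, meta, seed=0))
```

Because each factor is clipped to [0.3, 3.0] before the limits are applied, a single generation can change a value by at most a factor of 3 in either direction.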
