大数据-基于拉勾网职位数据的可视化实验-小白教程(六、Hive处理数据)

基于拉勾网职位数据的数据可视化,获取数据,清洗数据,对数据处理,通过ssm,echarts可视化数据。

首先将数据下载下来:

hdfs dfs -put /export/servers/part-r-00000 /JobData/output先检查mysql是否启动用

mysql -uroot -p123456进入hive的目录

cd /export/servers/apache-hive-1.2.1-bin/bin/启动hive

./hive一、将数据加载到数据仓库中

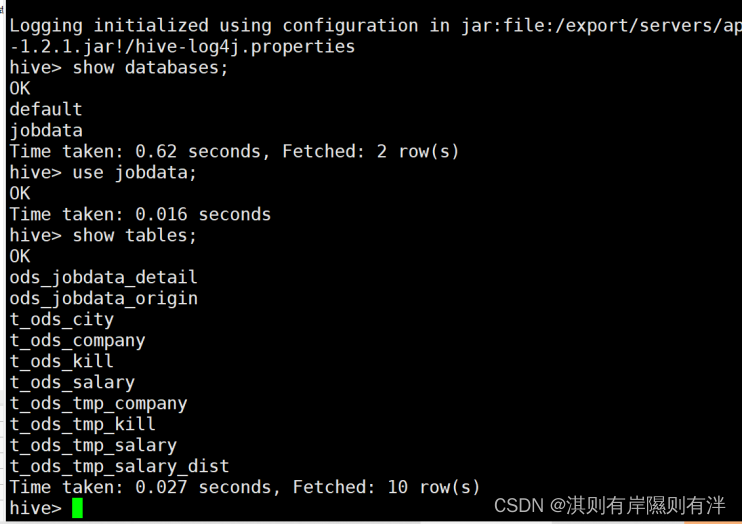

1.创建数据仓库 jobdata

create database jobdata;

use jobdata;2.创建事实表 ods_jobdata_origin

CREATE TABLE ods_jobdata_origin(

city string COMMENT '城市',

salary array<String> COMMENT '薪资',

company array<String> COMMENT '福利',

kill array<String> COMMENT '技能')

COMMENT '原始职位数据表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY ','

COLLECTION ITEMS TERMINATED BY '-'

STORED AS TEXTFILE;3.(1)将HDFS上的预处理数据导入到事实表中,命令如下:

LOAD DATA INPATH '/JobData/output/part-r-00000' OVERWRITE INTO TABLE ods_jobdata_origin;(2)查看事实表中的数据,验证数据是否导入成功,命令如下:

select * from ods_jobdata_origin;4.创建明细表ods_jobdata_detail用于存储事实表细化的数据内容

CREATE TABLE ods_jobdata_detail(

city string COMMENT '城市',

salary array<String> COMMENT '薪资',

company array<String> COMMENT '福利',

kill array<String> COMMENT '技能',

low_salary int COMMENT '低薪资',

high_salary int COMMENT '高薪资',

avg_salary double COMMENT '平均薪资')

COMMENT '职位数据明细表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY ','

STORED AS TEXTFILE;5.向明细表ods_jobdata_detail表中加载数据

insert overwrite table ods_jobdata_detail

select city,salary,company,kill,salary[0],salary[1],(salary[0]+salary[1])/2

from ods_jobdata_origin;6.对薪资字段内容进行扁平化处理,将处理结果储存到临时中间表t_ods_tmp_s

create table t_ods_tmp_salary as select explode(ojo.salary) from ods_jobdata_origin ojo;7. 对t_ods_tmp_salary表的每一条数据进行泛化处理,将处理结果储存到中间表t_ods_tmp_salary_dist中,命令如下

create table t_ods_tmp_salary_dist as

select case when col>=0 and col<=5 then "0-5"

when col>=6 and col<=10 then "6-10"

when col>=11 and col<=15 then "11-15"

when col>=16 and col<=20 then "16-20"

when col>=21 and col<=25 then "21-25"

when col>=26 and col<=30 then "26-30"

when col>=31 and col<=35 then "31-35"

when col>=36 and col<=40 then "36-40"

when col>=41 and col<=45 then "41-45"

when col>=46 and col<=50 then "46-50"

when col>=51 and col<=55 then "51-55"

when col>=56 and col<=60 then "56-60"

when col>=61 and col<=65 then "61-65"

when col>=66 and col<=70 then "66-70"

when col>=71 and col<=75 then "71-75"

when col>=76 and col<=80 then "76-80"

when col>=81 and col<=85 then "81-85"

when col>=86 and col<=90 then "86-90"

when col>=91 and col<=95 then "91-95"

when col>=96 and col<=100 then "96-100"

when col>=101 then ">101" end from t_ods_tmp_salary;8.对福利标签字段内容进行扁平化处理,将处理结果储存到临时中间表t_ods_tmp_company,命令如下:

create table t_ods_tmp_company as select explode(ojo.company) from ods_jobdata_origin ojo;9.对技能标签字段内容进行扁平化处理,将处理结果储存到临时中间表t_ods_tmp_kill,命令如下:

create table t_ods_tmp_kill as select explode(ojo.kill) from ods_jobdata_origin ojo;10.创建维度表t_ods_kill,用于储存技能标签的统计结果,命令如下

create table t_ods_kill(

every_kill String comment '技能标签',

count int comment '词频')

COMMENT '技能标签词频统计'

ROW FORMAT DELIMITED

fields terminated by ','

STORED AS TEXTFILE;11.创建维度表t_ods_company,用于储存福利标签的统计结果,命令如下

create table t_ods_company(

every_company String comment '福利标签',

count int comment '词频')

COMMENT '福利标签词频统计'

ROW FORMAT DELIMITED

fields terminated by ','

STORED AS TEXTFILE;12.创建维度表t_ods_salary,用于储存薪资分布的统计结果,命令如下

create table t_ods_salary(

every_partition String comment '薪资分布',

count int comment '聚合统计')

COMMENT '薪资分布聚合统计'

ROW FORMAT DELIMITED

fields terminated by ','

STORED AS TEXTFILE;13.创建维度表t_ods_city,用于储存城市的统计结果,命令如下

create table t_ods_city(

every_city String comment '城市',

count int comment '词频')

COMMENT '城市统计'

ROW FORMAT DELIMITED

fields terminated by ','

STORED AS TEXTFILE;

二、分析数据

1.通过对大数据相关职位分布的分析,使读者了解该职位在全国各城市的需求状况,命令如下

insert overwrite table t_ods_city

select city,count(1) from ods_jobdata_origin group by city;2.查看维度表t_ods_city中的分析结果,命令如下

select * from t_ods_city sort by count desc;3.分析大数据职位全国薪资分布情况,将分析结果储存在维度表t_ods_salary中,命令如下

insert overwrite table t_ods_salary

select `_c0`,count(1) from t_ods_tmp_salary_dist group by `_c0`;4.查看维度表t_ods_salary中的分析结果,命令如下

select * from t_ods_salary sort by count desc;5.分析薪资的平均值,命令如下

select avg(avg_salary) from ods_jobdata_detail;6.分析薪资的众数,命令如下

select avg_salary,count(1) as cnt from ods_jobdata_detail group by avg_salary order by cnt desc limit 1;7.分析薪资的中位数,命令如下

select percentile(cast(avg_salary as BIGINT), 0.5) from ods_jobdata_detail;8.分析各城市平均薪资待遇,命令如下

select city,count(city),round(avg(avg_salary),2) as cnt from ods_jobdata_detail group by city order by cnt desc;9.通过对公司福利字段进行分析,了解大数据职位相关公司对员工福利常用的标签都有哪些,命令如下

insert overwrite table t_ods_company select col,count(1) from t_ods_tmp_company group by col;10.查看维度表t_ods_company中的分析结果,命令如下

select every_company,count from t_ods_company sort by count desc limit 10;11. 通过对技能标签分析,了解要从事大数据相关工作需要掌握哪些技能,招聘公司比较重视哪些技能,命令如下

insert overwrite table t_ods_kill select col,count(1) from t_ods_tmp_kill group by col;12.查看维度表t_ods_kill中的分析结果,命令如下

select every_kill,count from t_ods_kill sort by count desc limit 3;之后你会在你的hive表中看到:

这样我们就把数据处理完了,时候我们需要通过sqoop工具将数据迁移至mysql中。我们下回来说!

魔乐社区(Modelers.cn) 是一个中立、公益的人工智能社区,提供人工智能工具、模型、数据的托管、展示与应用协同服务,为人工智能开发及爱好者搭建开放的学习交流平台。社区通过理事会方式运作,由全产业链共同建设、共同运营、共同享有,推动国产AI生态繁荣发展。

更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)