视觉识别及抓取——ROS2焊接机械臂(六)

本文介绍了基于ROS2和MoveIt的机械臂末端固定点运动控制方法。首先通过C++代码创建move_to_pose节点,使用MoveGroupInterface设置目标位姿并规划运动轨迹。然后详细说明了工作空间配置流程,包括创建C++包、编写CMakeLists.txt文件、配置Launch文件以加载机器人参数。最后展示了如何编译运行程序,实现机械臂从当前位置移动到指定目标点的完整过程。文中还提供

这次,我们根据配置好的工作空间,进行视觉的识别及抓取,如果需要上次配置的工作空间,可以后台私信我。

文章目录

一,末端移动到固定点

流程:

- (1)写入位置参数(单位:米)和 姿态参数(四元数表示)

- (2)调用 move_group_interface.plan(my_plan) 方法

- 功能:生成从当前位置到目标位置的安全运动轨迹

- (3)规划成功后调用 move_group_interface.execute(my_plan)

系统将:- 发送轨迹指令给控制器

- 驱动 Gazebo 仿真环境中的关节电机运动

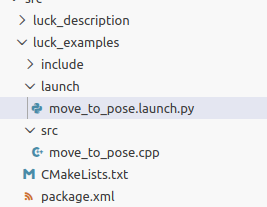

1.新建C++工作空间

ros2 pkg create --build-type ament_cmake --dependencies rclcpp moveit_ros_planning_interface geometry_msgs moveit_msgs --destination-directory src luck_examples

写cpp文件(里面可修改目标点):

#include <rclcpp/rclcpp.hpp>

#include <moveit/move_group_interface/move_group_interface.h>

#include <geometry_msgs/msg/pose.hpp>

int main(int argc, char** argv)

{

rclcpp::init(argc, argv);

auto node = rclcpp::Node::make_shared("move_to_pose_node");

// 使用 "test_group" 规划组

static const std::string PLANNING_GROUP = "test_group";

// 创建 MoveGroupInterface

using moveit::planning_interface::MoveGroupInterface;

auto move_group_interface = MoveGroupInterface(node, PLANNING_GROUP);

// 获取当前位姿作为参考 (可选)

// geometry_msgs::msg::Pose current_pose = move_group_interface.getCurrentPose().pose;

// RCLCPP_INFO(node->get_logger(), "Current Pose: x=%f, y=%f, z=%f",

// current_pose.position.x, current_pose.position.y, current_pose.position.z);

// 设置目标位姿

geometry_msgs::msg::Pose target_pose;

target_pose.orientation.w = 1.0;

target_pose.position.x = 0.3;

target_pose.position.y = 0.0;

target_pose.position.z = 0.5;

move_group_interface.setPoseTarget(target_pose);

// 创建规划

moveit::planning_interface::MoveGroupInterface::Plan my_plan;

bool success = (move_group_interface.plan(my_plan) == moveit::core::MoveItErrorCode::SUCCESS);

if (success)

{

RCLCPP_INFO(node->get_logger(), "Planning successful, executing...");

move_group_interface.execute(my_plan);

}

else

{

RCLCPP_ERROR(node->get_logger(), "Planning failed!");

}

rclcpp::shutdown();

return 0;

}

修改CMakelists.txt文件

cmake_minimum_required(VERSION 3.8)

project(luck_examples)

if(CMAKE_COMPILER_IS_GNUCXX OR CMAKE_CXX_COMPILER_ID MATCHES "Clang")

add_compile_options(-Wall -Wextra -Wpedantic)

endif()

# find dependencies

find_package(ament_cmake REQUIRED)

find_package(rclcpp REQUIRED)

find_package(moveit_ros_planning_interface REQUIRED)

find_package(geometry_msgs REQUIRED)

find_package(moveit_msgs REQUIRED)

add_executable(move_to_pose src/move_to_pose.cpp)

ament_target_dependencies(move_to_pose

rclcpp

moveit_ros_planning_interface

geometry_msgs

moveit_msgs

)

install(TARGETS move_to_pose

DESTINATION lib/${PROJECT_NAME}

)

install(DIRECTORY launch

DESTINATION share/${PROJECT_NAME}

)

if(BUILD_TESTING)

find_package(ament_lint_auto REQUIRED)

# the following line skips the linter which checks for copyrights

# comment the line when a copyright and license is added to all source files

set(ament_cmake_copyright_FOUND TRUE)

# the following line skips cpplint (only works in a git repo)

# comment the line when this package is in a git repo and when

# a copyright and license is added to all source files

set(ament_cmake_cpplint_FOUND TRUE)

ament_lint_auto_find_test_dependencies()

endif()

ament_package()

配置 (Launch 文件)

为什么不能直接 ros2 run 而需要 ros2 launch?

参数加载: MoveIt 的节点(即我们的 C++ 程序)启动时,必须知道机器人的身体结构(URDF)和语义信息(SRDF)。

move_to_pose.launch.py 的作用就是先读取这些配置文件,把它们变成参数传给节点,节点才能正常工作。

创建launch文件(move_to_pose.launch.py)

from launch import LaunchDescription

from launch_ros.actions import Node

from moveit_configs_utils import MoveItConfigsBuilder

def generate_launch_description():

moveit_config = MoveItConfigsBuilder("luck_description", package_name="luck_robot").to_moveit_configs()

return LaunchDescription([

Node(

package="luck_examples",

executable="move_to_pose",

name="move_to_pose_node",

output="screen",

parameters=[

moveit_config.robot_description,

moveit_config.robot_description_semantic,

moveit_config.robot_description_kinematics,

{"use_sim_time": True},

],

)

])

重新编译:

因为是 C++ 代码,修改后必须编译才能生效。

colcon build --packages-select luck_examples

source install/setup.bash

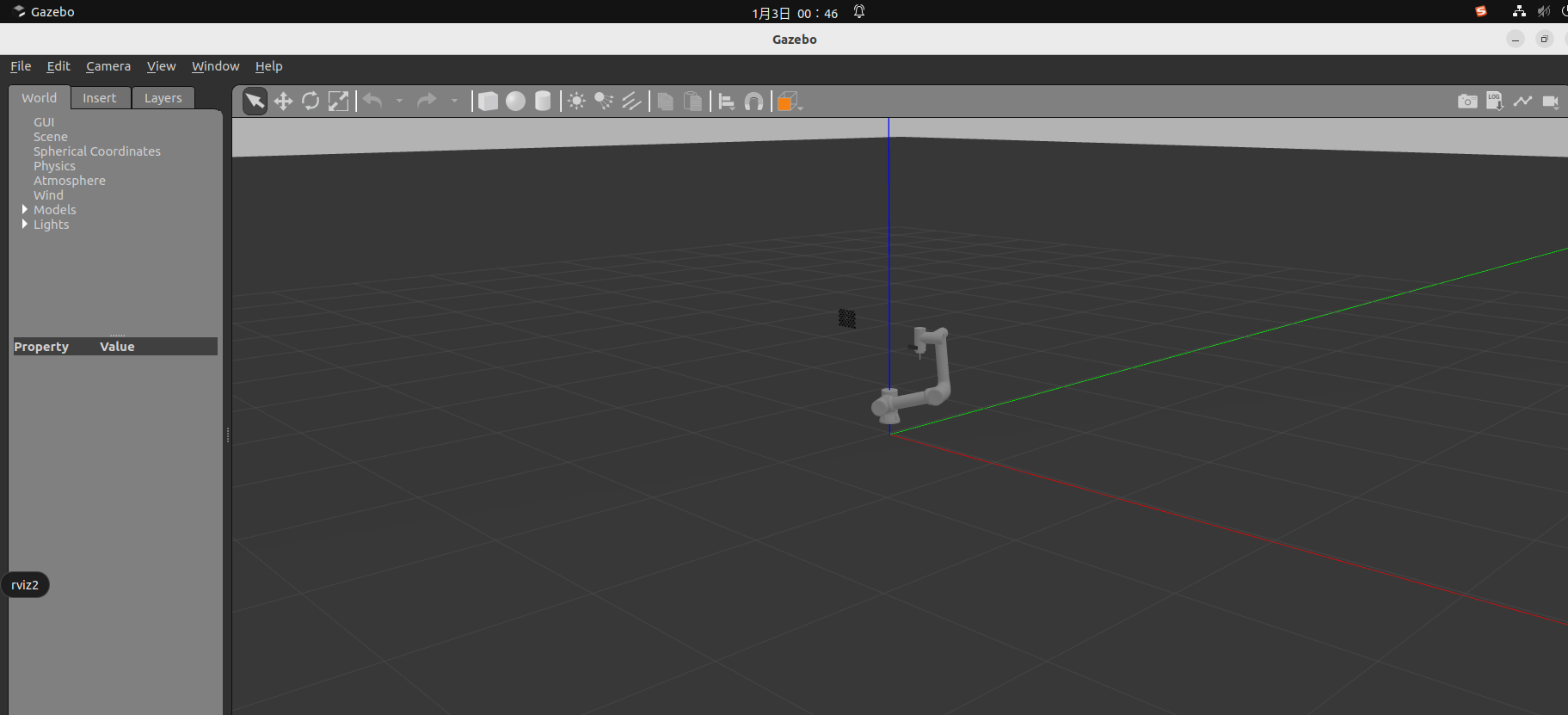

运行:

在第一个终端运行总启动文件后,再运行这个launch文件

ros2 launch luck_gazebo gazebo_moveit.launch.py

ros2 launch luck_examples move_to_pose.launch.py

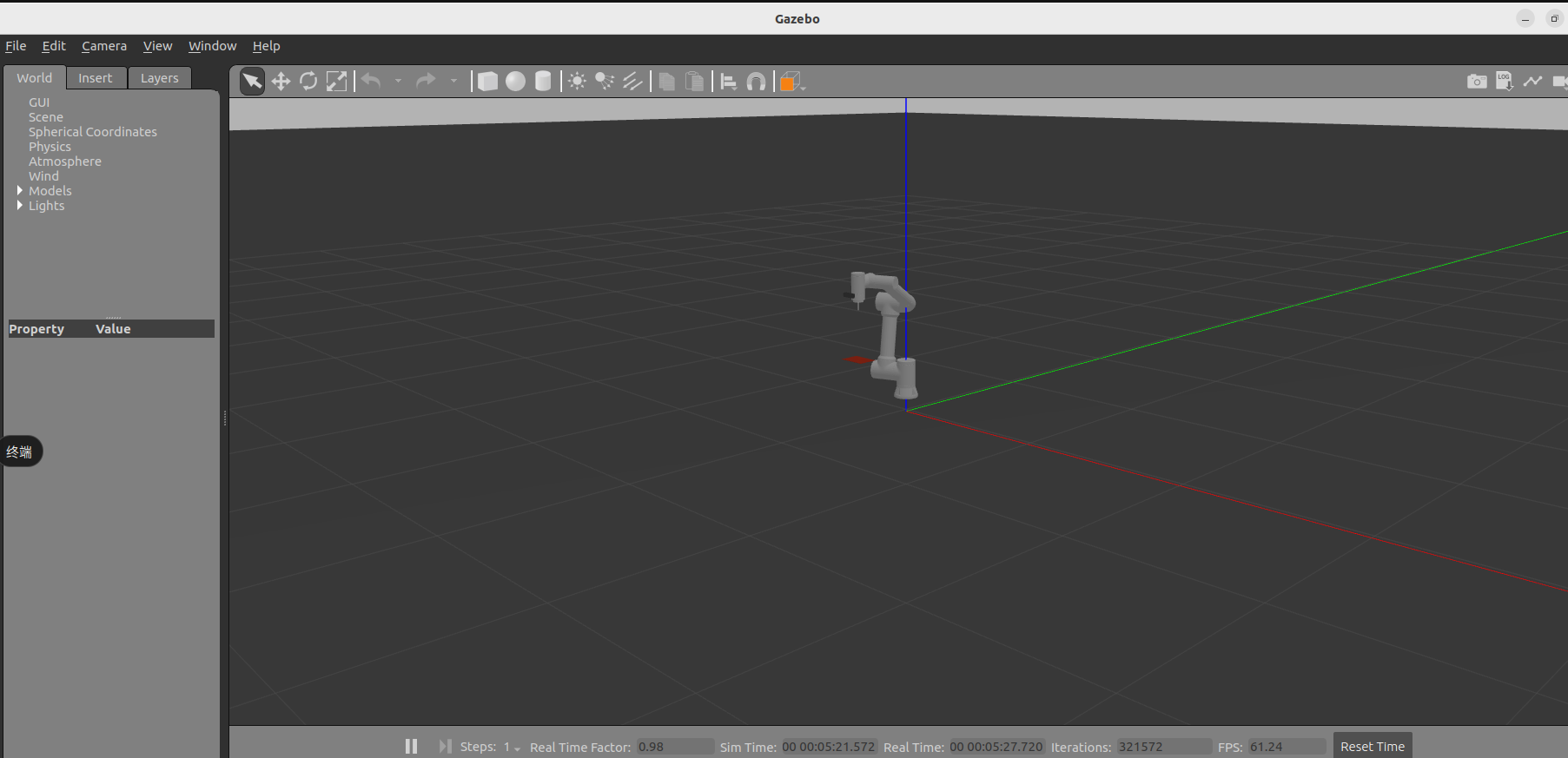

效果图如下

二,配置红色目标板

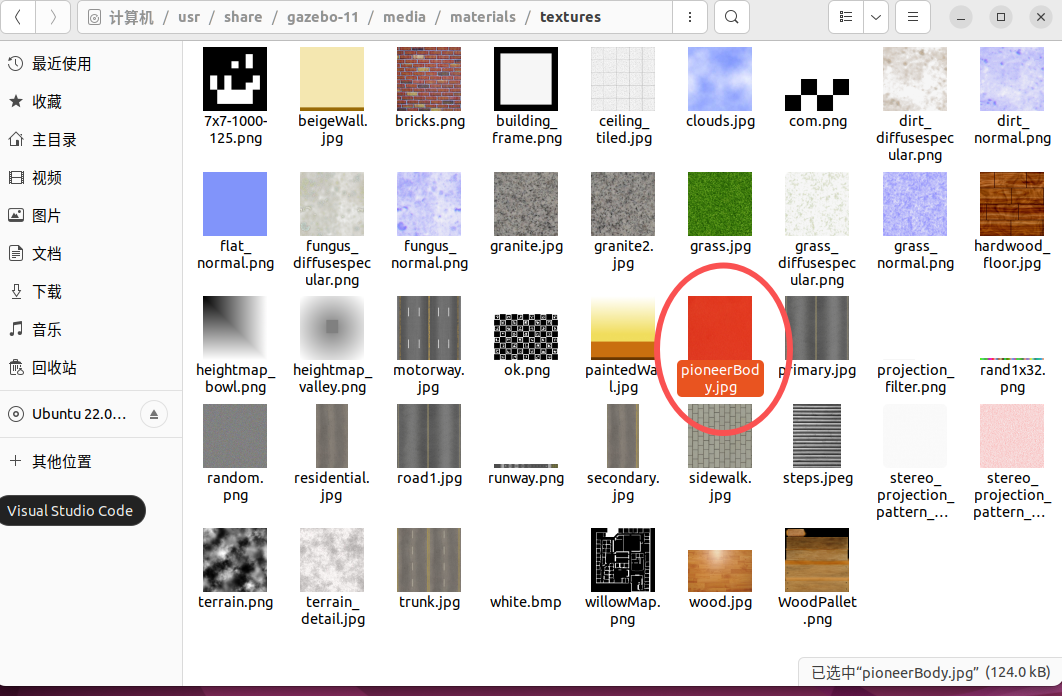

2.1在scripts文件夹下配置aruco.material文件。

(可以根据自己电脑gazebo文件夹路径放,不一定和我这个一样,可以自己找一下)

cd /usr/share/gazebo-11/media/materials/scripts/

sudo nano aruco.material

将下面内容放进去,并保存

// /usr/share/gazebo-9/media/materials/scripts/aruco.material

material Gazebo/aruco-36

{

technique

{

pass

{

lighting on

shading gouraud

// 环境光和漫反射设置为白色,确保纹理不暗

ambient 1.0 1.0 1.0 1.0

diffuse 1.0 1.0 1.0 1.0

specular 0.2 0.2 0.2 1.0 50.0

texture_unit

{

// 使用您的图片文件名

texture pioneerBody.jpg

// 修正拼写错误:anistropic → anisotropic

filtering anisotropic

max_anisotropy 16

// 添加纹理缩放设置

scale 1.0 1.0

// 确保纹理重复

scroll 0 0

wave_xform scroll sine 0 0 0 0

}

}

}

}

对应的就是这个图片

2.2将目标板添加到rviz和gazebo中

在我的机械臂urdf文件(src/luck_description/urdf/luck_description.urdf)最后添加下面内容,可以加载到rviz2中

<!-- 在world上直接添加标定板 -->

<link name="calibration_board">

<visual name="board_visual">

<geometry>

<box size="0.165 0.001 0.12"/> <!-- 薄板 -->

</geometry>

</visual>

<!-- 可选的碰撞体 -->

<collision name="board_collision">

<geometry>

<box size="0.165 0.001 0.12"/>

</geometry>

</collision>

</link>

<!-- 将标定板固定在世界坐标系中 -->

<joint name="world_to_board_joint" type="fixed">

<parent link="world"/>

<child link="calibration_board"/>

<!-- 关键:设置标定板在世界中的位置 -->

<origin xyz="0 -0.4 0.4" rpy="1.5708 0 0"/> <!-- 在工作空间中的固定位置 -->

</joint>

<!-- Gazebo配置:为标定板应用ArUco材质 -->

<gazebo reference="calibration_board">

<material>Gazebo/aruco-36</material>

<static>true</static> <!-- 标记为静态,不会掉落 -->

</gazebo>

创建src/luck_gazebo/models/calibration_board/calibration_board.sdf文件,可以导入gazebo。

<?xml version="1.0" ?>

<sdf version="1.6">

<model name="calibration_board">

<static>true</static>

<link name="calibration_board">

<!-- 位姿:与之前 URDF 中 world_to_board_joint 的 origin 保持一致 -->

<pose>0 -0.4 0.4 1.5708 0 0</pose>

<visual name="board_visual">

<geometry>

<box>

<size>0.165 0.001 0.12</size>

</box>

</geometry>

<material>

<!-- 使用 Gazebo 自带的 aruco 材质;若缺失可改为其它材质 -->

<script>

<uri>file://media/materials/scripts/gazebo.material</uri>

<name>Gazebo/aruco-36</name>

</script>

</material>

</visual>

<collision name="board_collision">

<geometry>

<box>

<size>0.1 0.001 0.1</size>

</box>

</geometry>

</collision>

</link>

</model>

</sdf>

修改总launch文件(添加启动文件,和回调文件),总的文件如下

#!/usr/bin/env python3

"""

完整的Gazebo + MoveIt + RViz联合调试Launch文件

"""

import os

from launch import LaunchDescription

from launch.actions import ExecuteProcess, TimerAction, IncludeLaunchDescription

from launch.launch_description_sources import PythonLaunchDescriptionSource

from launch.substitutions import PathJoinSubstitution

from launch_ros.actions import Node

from launch_ros.substitutions import FindPackageShare

from ament_index_python.packages import get_package_share_directory

from moveit_configs_utils import MoveItConfigsBuilder

import xacro

def generate_launch_description():

# 包路径

pkg_luck_robot = get_package_share_directory('luck_robot')

pkg_luck_gazebo = get_package_share_directory('luck_gazebo')

pkg_luck_description = get_package_share_directory('luck_description')

calibration_board_model = os.path.join(

pkg_luck_gazebo, 'models', 'calibration_board', 'calibration_board.sdf'

)

# 若未安装到 install/share,回退到源码路径

if not os.path.exists(calibration_board_model):

ws_root = os.path.abspath(os.path.join(pkg_luck_gazebo, '..', '..', '..', '..'))

calibration_board_model = os.path.join(

ws_root, 'src', 'luck_gazebo', 'models', 'calibration_board', 'calibration_board.sdf'

)

# 控制器配置

controller_config = os.path.join(pkg_luck_gazebo, 'config', 'controllers.yaml')

# MoveIt配置

moveit_config = MoveItConfigsBuilder("luck_description", package_name="luck_robot").to_moveit_configs()

# 处理xacro生成URDF - 使用命令行参数方式

initial_positions_file = os.path.join(pkg_luck_robot, 'config', 'initial_positions.yaml')

# 直接调用xacro命令处理文件

import subprocess

xacro_file = os.path.join(pkg_luck_robot, 'config', 'luck_description.urdf.xacro')

result = subprocess.run(

['xacro', xacro_file,

f'initial_positions_file:={initial_positions_file}',

'use_sim:=true'],

capture_output=True,

text=True,

check=True

)

robot_description_content = result.stdout

# 动态注入controller配置到Gazebo插件

robot_description_content = robot_description_content.replace(

'<plugin filename="libgazebo_ros2_control.so" name="gazebo_ros2_control">\n </plugin>',

f'<plugin filename="libgazebo_ros2_control.so" name="gazebo_ros2_control">\n <parameters>{controller_config}</parameters>\n </plugin>'

)

# 替换mesh路径为绝对路径

robot_description_content = robot_description_content.replace(

'package://luck_description',

f'file://{pkg_luck_description}'

)

pkg_realsense_description = get_package_share_directory('realsense2_description')

robot_description_content = robot_description_content.replace(

'package://realsense2_description',

f'file://{pkg_realsense_description}'

)

# Robot State Publisher

robot_state_publisher = Node(

package='robot_state_publisher',

executable='robot_state_publisher',

name='robot_state_publisher',

output='screen',

parameters=[{

'robot_description': robot_description_content,

'use_sim_time': True

}]

)

# 启动Gazebo

gazebo = ExecuteProcess(

cmd=['gazebo', '--verbose', '-s', 'libgazebo_ros_init.so', '-s', 'libgazebo_ros_factory.so'],

output='screen'

)

# Spawn机器人

spawn_entity = Node(

package='gazebo_ros',

executable='spawn_entity.py',

arguments=[

'-topic', 'robot_description',

'-entity', 'luck_robot',

'-x', '0',

'-y', '0',

'-z', '0.1'

],

output='screen'

)

# Spawn标定板(静态)

spawn_calibration_board = Node(

package='gazebo_ros',

executable='spawn_entity.py',

arguments=[

'-file', calibration_board_model,

'-entity', 'calibration_board'

],

output='screen'

)

# 加载并激活joint_state_broadcaster

joint_state_broadcaster_spawner = Node(

package="controller_manager",

executable="spawner",

arguments=["joint_state_broadcaster"],

output='screen'

)

# 加载并激活test_group_controller

test_group_controller_spawner = Node(

package="controller_manager",

executable="spawner",

arguments=["test_group_controller"],

output='screen'

)

# MoveGroup节点 - 增加trajectory execution参数

trajectory_execution_params = {

'moveit_manage_controllers': True,

'trajectory_execution.allowed_execution_duration_scaling': 2.0,

'trajectory_execution.allowed_goal_duration_margin': 5.0,

'trajectory_execution.allowed_start_tolerance': 0.5, # 增大到0.5弧度

'trajectory_execution.execution_duration_monitoring': False,

}

move_group_params = [

moveit_config.to_dict(),

trajectory_execution_params,

{'use_sim_time': True}

]

move_group_node = Node(

package='moveit_ros_move_group',

executable='move_group',

output='screen',

parameters=move_group_params,

)

# RViz配置 - 使用实际路径而不是Substitution

rviz_config_file = os.path.join(pkg_luck_robot, "config", "moveit.rviz")

# RViz节点

rviz_node = Node(

package="rviz2",

executable="rviz2",

name="rviz2",

output="screen", # 改为screen以便查看错误信息

arguments=["-d", rviz_config_file],

parameters=[

{"robot_description": robot_description_content}, # 添加robot_description

moveit_config.robot_description_semantic,

moveit_config.robot_description_kinematics,

moveit_config.planning_pipelines,

moveit_config.joint_limits,

{"use_sim_time": True},

],

)

return LaunchDescription([

# 1. 启动Gazebo和robot_state_publisher

robot_state_publisher,

gazebo,

# 2. 8秒后spawn机器人

TimerAction(

period=8.0,

actions=[spawn_entity]

),

# 2.5 6秒后spawn标定板

TimerAction(

period=6.0,

actions=[spawn_calibration_board]

),

# 3. 10秒后加载控制器(关键:防止机械臂下落)

TimerAction(

period=10.0,

actions=[joint_state_broadcaster_spawner]

),

# 4. 11秒后激活轨迹控制器

TimerAction(

period=11.0,

actions=[test_group_controller_spawner]

),

# 5. 12秒后启动MoveGroup(给控制器充分时间稳定)

TimerAction(

period=12.0,

actions=[move_group_node]

),

# 6. 17秒后启动RViz

TimerAction(

period=17.0,

actions=[rviz_node]

),

])

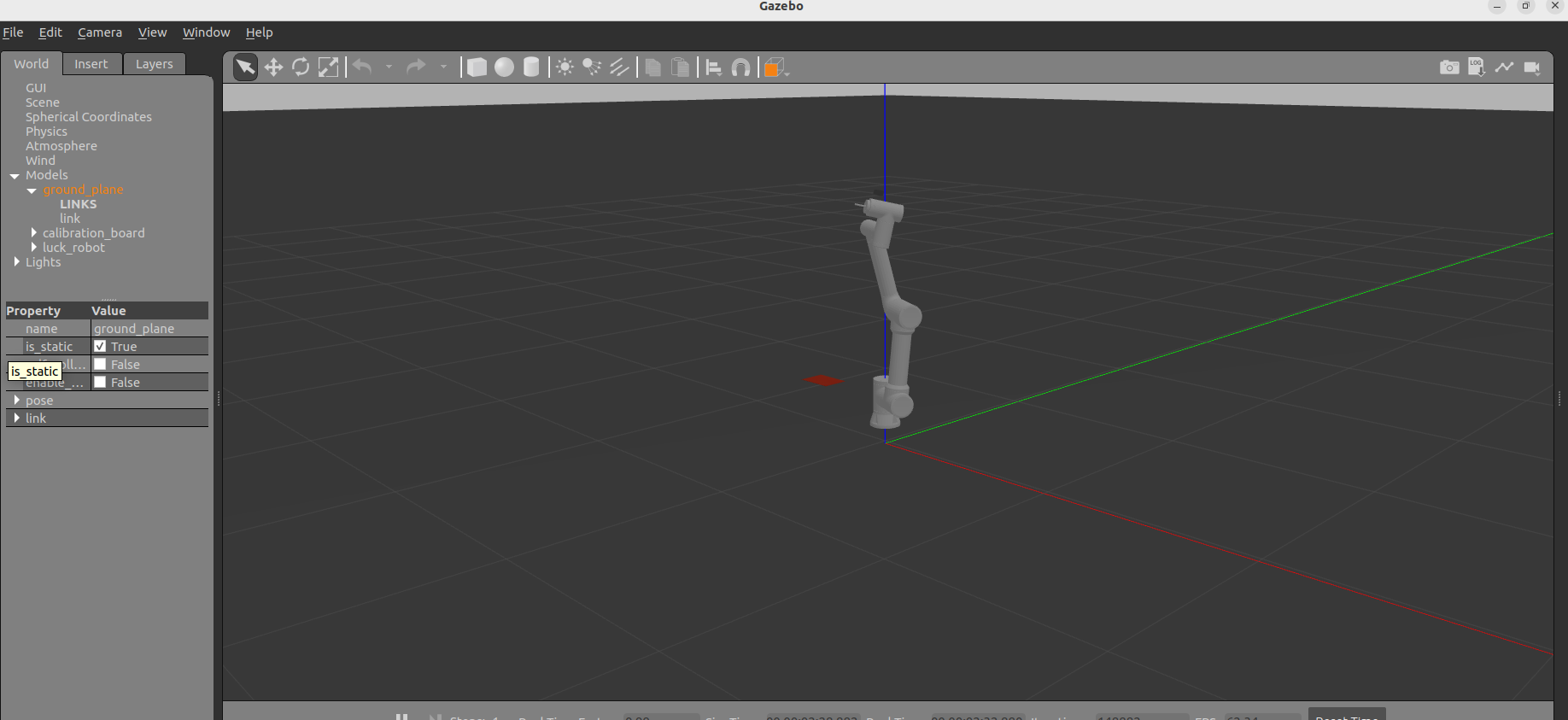

然后进行colcon build和source,就可以看到gazebo中有红色板了。

三,视觉识别位置并运动

先让机械臂运动到特定位置,以便于摄像头能看到红板,然后使用坐标变换计算出目红板中心点的实际空间位置(有一点点偏差),并让机械臂运动到上方20cm处。

move_to_pose.cpp文件修改

#include <rclcpp/rclcpp.hpp>

#include <moveit/move_group_interface/move_group_interface.h>

#include <geometry_msgs/msg/pose.hpp>

#include <sensor_msgs/msg/image.hpp>

#include <sensor_msgs/msg/camera_info.hpp>

#include <cv_bridge/cv_bridge.h>

#include <opencv2/opencv.hpp>

#include <tf2_ros/transform_listener.h>

#include <tf2_ros/buffer.h>

#include <tf2_geometry_msgs/tf2_geometry_msgs.hpp>

#include <tf2/LinearMath/Transform.h>

#include <tf2/LinearMath/Vector3.h>

#include <thread>

#include <atomic>

class ImageProcessor : public rclcpp::Node

{

public:

ImageProcessor() : Node("move_and_detect_node")

{

tf_buffer_ = std::make_unique<tf2_ros::Buffer>(this->get_clock());

tf_listener_ = std::make_shared<tf2_ros::TransformListener>(*tf_buffer_);

}

void start_processing()

{

// 订阅相机信息 (只订阅一次)

camera_info_sub_ = this->create_subscription<sensor_msgs::msg::CameraInfo>(

"/camera/camera_sensor/camera_info", 10,

[this](const sensor_msgs::msg::CameraInfo::SharedPtr msg) {

if (!has_camera_info_) {

fx_ = msg->k[0];

fy_ = msg->k[4];

cx_ = msg->k[2];

cy_ = msg->k[5];

has_camera_info_ = true;

RCLCPP_INFO(this->get_logger(), "获取到相机内参: fx=%.2f, fy=%.2f, cx=%.2f, cy=%.2f", fx_, fy_, cx_, cy_);

}

});

// 订阅图像话题

image_sub_ = this->create_subscription<sensor_msgs::msg::Image>(

"/camera/camera_sensor/image_raw", 10,

std::bind(&ImageProcessor::image_callback, this, std::placeholders::_1));

RCLCPP_INFO(this->get_logger(), "视觉节点已启动,等待图像...");

}

bool is_done() const { return done_; }

// 获取计算出的目标位置

bool get_target_pose(geometry_msgs::msg::Pose& pose) {

if (done_) {

pose = target_pose_;

return true;

}

return false;

}

private:

void image_callback(const sensor_msgs::msg::Image::SharedPtr msg)

{

if (done_ || !has_camera_info_) return;

try

{

cv::Mat frame = cv_bridge::toCvCopy(msg, "bgr8")->image;

cv::Mat hsv;

cv::cvtColor(frame, hsv, cv::COLOR_BGR2HSV);

cv::Mat mask1, mask2, mask;

cv::inRange(hsv, cv::Scalar(0, 43, 46), cv::Scalar(10, 255, 255), mask1);

cv::inRange(hsv, cv::Scalar(156, 43, 46), cv::Scalar(180, 255, 255), mask2);

mask = mask1 | mask2;

std::vector<std::vector<cv::Point>> contours;

cv::findContours(mask, contours, cv::RETR_EXTERNAL, cv::CHAIN_APPROX_SIMPLE);

if (!contours.empty())

{

auto largest_contour = std::max_element(contours.begin(), contours.end(),

[](const std::vector<cv::Point>& a, const std::vector<cv::Point>& b) {

return cv::contourArea(a) < cv::contourArea(b);

});

if (cv::contourArea(*largest_contour) > 500)

{

cv::Rect rect = cv::boundingRect(*largest_contour);

double u = rect.x + rect.width / 2.0;

double v = rect.y + rect.height / 2.0;

// 输出像素坐标

RCLCPP_INFO(this->get_logger(), ">>> 红板中心像素坐标: (u=%.1f, v=%.1f) <<<", u, v);

// ---------------------------------------------------------

// 坐标计算核心逻辑 (修正版)

// 问题背景:

// 1. Gazebo仿真中,相机传感器挂载在 'camera_link' (物理位置)。

// 2. 图像数据遵循 Optical Frame 定义 (Z轴向前),对应的TF帧是 'camera_color_optical_frame'。

// 3. 两者之间存在约1.5cm的物理偏移。

//

// 解决方案: 混合使用两个Frame

// - 原点 (Origin): 使用 'camera_link' 的位置,消除平移误差。

// - 方向 (Direction): 使用 'camera_color_optical_frame' 的旋转,保证视轴方向正确。

// ---------------------------------------------------------

std::string optical_frame = "camera_color_optical_frame"; // 用于方向

std::string sensor_frame = "camera_link"; // 用于原点

// 1. 获取TF变换

geometry_msgs::msg::TransformStamped tf_optical_msg, tf_sensor_msg;

try {

// 获取方向变换 (Base -> Optical)

tf_optical_msg = tf_buffer_->lookupTransform("base_link", optical_frame, tf2::TimePointZero);

// 获取位置变换 (Base -> Sensor)

tf_sensor_msg = tf_buffer_->lookupTransform("base_link", sensor_frame, tf2::TimePointZero);

} catch (tf2::TransformException &ex) {

RCLCPP_WARN(this->get_logger(), "TF变换获取失败: %s", ex.what());

return;

}

// 2. 转换数据类型

tf2::Transform transform_optical, transform_sensor;

tf2::fromMsg(tf_optical_msg.transform, transform_optical);

tf2::fromMsg(tf_sensor_msg.transform, transform_sensor);

// 3. 构建射线

// 射线原点: 使用物理传感器的位置

tf2::Vector3 cam_origin = transform_sensor.getOrigin();

// 射线方向: 在Optical Frame中构建向量 (x, y, 1.0),然后旋转到世界坐标系

// P_pixel(u,v) -> P_optical(x,y,1)

double ray_x = (u - cx_) / fx_;

double ray_y = (v - cy_) / fy_;

double ray_z = 1.0;

tf2::Vector3 ray_optical(ray_x, ray_y, ray_z);

// 应用旋转 (只旋转,不平移)

tf2::Vector3 ray_world = transform_optical.getBasis() * ray_optical;

ray_world.normalize(); // 归一化方向向量

RCLCPP_INFO(this->get_logger(), "相机位置 (World): X=%.3f, Y=%.3f, Z=%.3f",

cam_origin.x(), cam_origin.y(), cam_origin.z());

RCLCPP_INFO(this->get_logger(), "射线方向 (World): X=%.3f, Y=%.3f, Z=%.3f",

ray_world.x(), ray_world.y(), ray_world.z());

// 4. 射线-平面求交 (计算落地坐标)

double object_z = 0.4; // 目标平面高度 (红板高度)

// 确保射线是指向下的 (Z分量为负) 且不平行于地面

if (ray_world.z() < -1e-6) {

// t = (Z_plane - Z_origin) / Z_dir

double t = (object_z - cam_origin.z()) / ray_world.z();

if (t > 0) {

tf2::Vector3 intersection = cam_origin + (ray_world * t);

RCLCPP_INFO(this->get_logger(), ">>> 推算的3D坐标: X=%.3f, Y=%.3f, Z=%.3f <<<",

intersection.x(), intersection.y(), intersection.z());

// 设置目标位置 (保持Z轴悬停高度)

target_pose_.position.x = intersection.x();

target_pose_.position.y = intersection.y() + 0.015; // 手动补偿RGB传感器物理偏移 (1.5cm)

target_pose_.position.z = 0.6; // 移动到物体上方 (0.4 + 0.2)

RCLCPP_INFO(this->get_logger(), ">>> 修正后目标坐标: X=%.3f, Y=%.3f, Z=%.3f <<<",

target_pose_.position.x, target_pose_.position.y, target_pose_.position.z);

// 保持末端垂直向下

target_pose_.orientation.w = 1.0;

target_pose_.orientation.x = 0.0;

target_pose_.orientation.y = 0.0;

target_pose_.orientation.z = 0.0;

done_ = true;

}

} else {

RCLCPP_WARN(this->get_logger(), "射线未指向下方或平行于地面,无法计算交点。");

}

}

}

}

catch (cv_bridge::Exception& e)

{

RCLCPP_ERROR(this->get_logger(), "cv_bridge exception: %s", e.what());

}

}

rclcpp::Subscription<sensor_msgs::msg::Image>::SharedPtr image_sub_;

rclcpp::Subscription<sensor_msgs::msg::CameraInfo>::SharedPtr camera_info_sub_;

std::unique_ptr<tf2_ros::Buffer> tf_buffer_;

std::shared_ptr<tf2_ros::TransformListener> tf_listener_;

std::atomic<bool> done_{false};

bool has_camera_info_{false};

double fx_, fy_, cx_, cy_;

geometry_msgs::msg::Pose target_pose_;

};

int main(int argc, char** argv)

{

rclcpp::init(argc, argv);

auto node = std::make_shared<ImageProcessor>();

rclcpp::executors::SingleThreadedExecutor executor;

executor.add_node(node);

std::thread spinner([&executor]() { executor.spin(); });

static const std::string PLANNING_GROUP = "test_group";

using moveit::planning_interface::MoveGroupInterface;

auto move_group_interface = MoveGroupInterface(node, PLANNING_GROUP);

// 1. 先移动到观测位置

geometry_msgs::msg::Pose observe_pose;

observe_pose.orientation.w = 1.0;

observe_pose.orientation.x = 0.0;

observe_pose.orientation.y = 0.0;

observe_pose.orientation.z = 0.0;

observe_pose.position.x = 0.0;

observe_pose.position.y = -0.4;

observe_pose.position.z = 0.7;

move_group_interface.setPoseTarget(observe_pose);

RCLCPP_INFO(node->get_logger(), "正在移动到观测位置 (0, -0.4, 0.7)...");

moveit::planning_interface::MoveGroupInterface::Plan my_plan;

if (move_group_interface.plan(my_plan) == moveit::core::MoveItErrorCode::SUCCESS)

{

move_group_interface.execute(my_plan);

// 2. 等待8秒

RCLCPP_INFO(node->get_logger(), "到达观测位置,等待2秒...");

std::this_thread::sleep_for(std::chrono::seconds(2));

// 3. 开启视觉处理

RCLCPP_INFO(node->get_logger(), "开始视觉识别...");

node->start_processing();

// 等待识别结果

int timeout = 200; // 20s

while (!node->is_done() && timeout > 0 && rclcpp::ok())

{

std::this_thread::sleep_for(std::chrono::milliseconds(100));

timeout--;

}

if (node->is_done()) {

geometry_msgs::msg::Pose target_pose;

node->get_target_pose(target_pose);

RCLCPP_INFO(node->get_logger(), "视觉定位成功,正在前往目标...");

RCLCPP_INFO(node->get_logger(), "最终目标坐标: X=%.3f, Y=%.3f, Z=%.3f",

target_pose.position.x, target_pose.position.y, target_pose.position.z);

// 执行移动

move_group_interface.setPoseTarget(target_pose);

if (move_group_interface.plan(my_plan) == moveit::core::MoveItErrorCode::SUCCESS) {

move_group_interface.execute(my_plan);

} else {

RCLCPP_ERROR(node->get_logger(), "目标位置规划失败!");

}

} else {

RCLCPP_WARN(node->get_logger(), "超时: 未能定位到红色目标。");

}

}

else

{

RCLCPP_ERROR(node->get_logger(), "观测位置规划失败!");

}

executor.cancel();

spinner.join();

rclcpp::shutdown();

return 0;

}

重新编译:

修改后必须编译才能生效

colcon build --packages-select luck_examples

source install/setup.bash

运行:

在第一个终端运行总启动文件后,再运行这个launch文件

ros2 launch luck_gazebo gazebo_moveit.launch.py

ros2 launch luck_examples move_to_pose.launch.py

效果图如下:

魔乐社区(Modelers.cn) 是一个中立、公益的人工智能社区,提供人工智能工具、模型、数据的托管、展示与应用协同服务,为人工智能开发及爱好者搭建开放的学习交流平台。社区通过理事会方式运作,由全产业链共同建设、共同运营、共同享有,推动国产AI生态繁荣发展。

更多推荐

已为社区贡献3条内容

已为社区贡献3条内容

所有评论(0)