Apriori算法的python实现

极简版Apriori关联规则挖掘算法的python实现

·

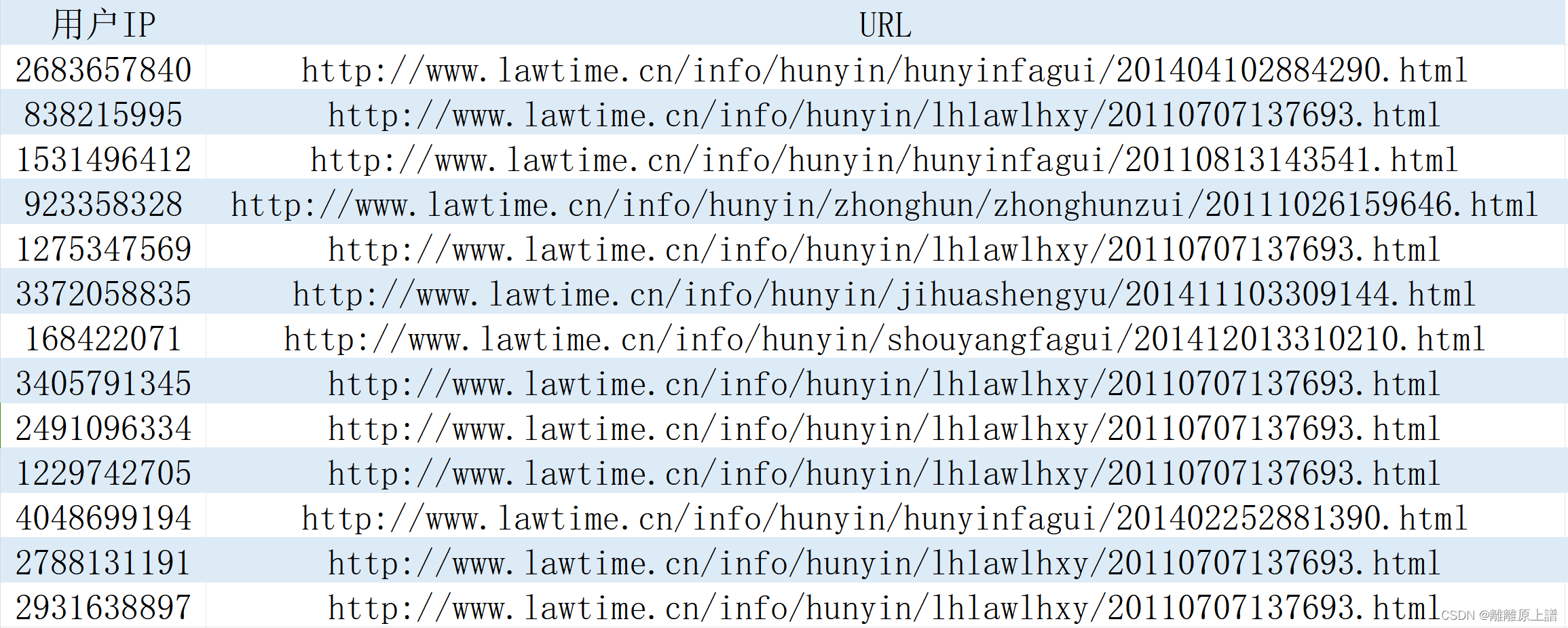

1.数据格式

2.代码

(1)自编写:速度更快(大规模数据集推荐)

import pandas as pd

from collections import Counter

def apriori(D, minSup):

def get_support_count(D, key):

return sum(1 for transaction in D if set(key).issubset(set(transaction)))

C1 = Counter(item for transaction in D for item in transaction)

keys1 = [[item] for item in C1 if C1[item] / len(D) >= minSup]

keys = keys1

all_keys = []

support_counts = {}

while keys:

C = [get_support_count(D, key) for key in keys]

cutKeys = [key for key, count in zip(keys, C) if count / len(D) >= minSup]

for key, count in zip(cutKeys, C):

support_counts[tuple(key)] = count / len(D)

all_keys.extend(cutKeys)

keys = aproiri_gen(cutKeys)

return all_keys, support_counts

def aproiri_gen(keys1):

keys2 = []

for k1 in keys1:

for k2 in keys1:

if k1 != k2:

key = sorted(list(set(k1) | set(k2)))

if key not in keys2 and key not in keys1:

keys2.append(key)

return keys2

def Dataset_generation(file):

global mapping

df = pd.read_csv(file,encoding='gbk')

print(df)

print(df.nunique())

df_deduped = df.drop_duplicates(subset=[df.columns[0]])

print(df_deduped)

print(df_deduped.nunique())

mapping = {val: idx for idx, val in enumerate(df['URL'].unique())}

df['URL'] = df['URL'].map(mapping)

df['URL'] = df['URL'].astype(str)

ip_url_dict = {}

for index, row in df_deduped.iterrows():

ip = row['用户IP']

urls = df[df['用户IP'] == ip]['URL'].tolist()

ip_url_dict[ip] = urls

return [v for k, v in ip_url_dict.items()]

new_list = Dataset_generation('acc_records(1).csv')

F, support_counts = apriori(new_list, 1e-2)

print("Frequent Itemsets:")

for itemset in F:

mapped_itemset = [list(mapping.keys())[list(mapping.values()).index(int(item))] for item in itemset]

print(mapped_itemset, "Support:", support_counts[tuple(itemset)])(2)调用库:结构更简洁

from mlxtend.frequent_patterns import apriori

import pandas as pd

def Dataset_generation(file):

global mapping

df = pd.read_csv(file,encoding='gbk')

print(df)

print(df.nunique())

df_deduped = df.drop_duplicates(subset=[df.columns[0]])

print(df_deduped)

print(df_deduped.nunique())

mapping = {val: idx for idx, val in enumerate(df['URL'].unique())}

df['URL'] = df['URL'].map(mapping)

df['URL'] = df['URL'].astype(str)

ip_url_dict = {}

for index, row in df_deduped.iterrows():

ip = row['用户IP']

urls = df[df['用户IP'] == ip]['URL'].tolist()

ip_url_dict[ip] = urls

return [v for k, v in ip_url_dict.items()]

new_list = Dataset_generation('acc_records(1).csv')

df = pd.DataFrame(new_list)

df = df.stack().str.get_dummies().groupby(level=0).sum().astype(bool)

frequent_itemsets = apriori(df, min_support=1e-2, use_colnames=True)

frequent_itemsets['itemsets'] = (frequent_itemsets['itemsets']

.apply(lambda x: [list(mapping.keys())[list(mapping.values())

.index(int(item))] for item in x]))

print(frequent_itemsets)

3.后续更新中

魔乐社区(Modelers.cn) 是一个中立、公益的人工智能社区,提供人工智能工具、模型、数据的托管、展示与应用协同服务,为人工智能开发及爱好者搭建开放的学习交流平台。社区通过理事会方式运作,由全产业链共同建设、共同运营、共同享有,推动国产AI生态繁荣发展。

更多推荐

已为社区贡献2条内容

已为社区贡献2条内容

所有评论(0)