HPL: Parallel Testing


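Previous post:

HPL: Testing the Portable Implementation of the High-Performance Linpack Benchmark for Distributed-Memory Computers (好记忆不如烂笔头abc's blog, CSDN)

Setting up mutual SSH trust between the two nodes:

Run the following on both nodes: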

cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.52.167 hpl1

192.168.52.168 hpl2

ssh-keygen -t rsa

cat .ssh/id_*.pub|ssh root@hpl2 'cat >> .ssh/authorized_keys'

cat .ssh/id_*.pub|ssh root@hpl1 'cat >> .ssh/authorized_keys'

[root@hpl2 ~]# ssh hpl1 date

Wed Jun 15 13:38:16 CST 2022

[root@hpl2 ~]# ssh hpl2 date

Wed Jun 15 13:38:19 CST 2022

[root@hpl2 ~]# ssh hpl2

Last login: Wed Jun 15 13:25:58 2022 from 192.168.52.1

[root@hpl2 ~]# ssh hpl1 date

Wed Jun 15 13:38:27 CST 2022

[root@hpl2 ~]# ssh hpl2 date

Wed Jun 15 13:38:30 CST 2022
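
To confirm the trust setup without typing each ssh command by hand, a small helper along these lines may be useful. This is only a sketch: check_trust and the SSH_CMD override are hypothetical names introduced here, not part of the original setup. It relies on ssh's BatchMode=yes option, which makes ssh fail instead of prompting for a password.

```shell
# Hypothetical helper: report whether each host accepts key-based login.
# SSH_CMD is an assumption of this sketch; it lets you swap in a stub for dry runs.
check_trust() {
    ssh_cmd="${SSH_CMD:-ssh}"
    failed=0
    for host in "$@"; do
        # BatchMode=yes: never prompt for a password, just fail.
        # ConnectTimeout=5: give up on unreachable hosts after 5 seconds.
        if "$ssh_cmd" -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null; then
            echo "$host: ok"
        else
            echo "$host: FAILED"
            failed=1
        fi
    done
    return "$failed"
}
```

Running check_trust hpl1 hpl2 on each node should print "ok" for both hosts once the keys are in place.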

Prepare the hosts file

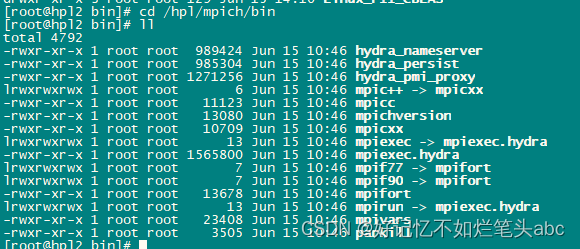

[root@hpl2 Linux_PII_CBLAS]# cat /hpl/hydra/hosts

hpl1:4

hpl2:4
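
Each line of the hosts file has the form host:n, where n is the number of processes to place on that host. As a rough check of how many processes the file can hold before placement wraps around, the slot counts can be summed. The function name total_slots is made up for this sketch, and treating a host with no ":n" suffix as one slot is an assumption.

```shell
# Sum the slot counts of a Hydra-style host file (one "host:n" entry per line).
# Blank lines and lines starting with "#" are skipped.
total_slots() {
    awk -F: '
        NF && $1 !~ /^#/ {
            # A missing or non-positive count is treated as 1 (assumption).
            total += (($2 + 0 >= 1) ? $2 + 0 : 1)
        }
        END { print total + 0 }
    ' "$1"
}
```

For the two-node file above, total_slots /hpl/hydra/hosts would print 8.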

Run:

mpiexec -f /hpl/hydra/hosts -n 4 ./cpi

If the environment variable is set (HYDRA_HOST_FILE, as in the .bashrc shown below), you can run mpiexec -n 4 ./cpi directly.

[root@heavydb examples]# cat /hpl/hydra/hosts

hpl1:4

hpl2:4

heavydb:4

[root@heavydb examples]# cat /root/.bashrc

# .bashrc

# User specific aliases and functions

alias rm='rm -i'

alias cp='cp -i'

alias mv='mv -i'

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

export HYDRA_HOST_FILE=/hpl/hydra/hosts

[root@heavydb examples]# cat /hpl/hydra/hosts

hpl1:4

hpl2:4

heavydb:4

[root@heavydb examples]# mpiexec -f /hpl/hydra/hosts -n 9 ./cpi

Process 0 of 9 is on heavydb

Process 1 of 9 is on heavydb

Process 3 of 9 is on heavydb

Process 2 of 9 is on heavydb

Process 4 of 9 is on hpl2

Process 5 of 9 is on hpl2

Process 6 of 9 is on hpl2

Process 7 of 9 is on hpl2

Process 8 of 9 is on heavydb

pi is approximately 3.1415926544231256, Error is 0.0000000008333325

wall clock time = 0.012438
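
The pattern in the run above (four ranks, four ranks, then one) is what you would expect if ranks are assigned in blocks, filling each host's slots in file order. The sketch below simulates that rule; place_ranks is a hypothetical name, and the wrap-around to the first host when slots run out is an assumption about the launcher's default behavior. Note that cpi prints each node's own processor name, so the names in its output need not match the labels in the hosts file.

```shell
# Print "rank host" pairs: fill each host's slots in file order,
# wrapping back to the first host if ranks outnumber slots (assumption).
place_ranks() {
    awk -F: -v n="$2" '
        BEGIN { m = 0 }
        NF && $1 !~ /^#/ {
            host[m] = $1
            slots[m] = (($2 + 0 >= 1) ? $2 + 0 : 1)
            m++
        }
        END {
            if (m == 0) exit 1
            rank = 0
            while (rank < n)
                for (i = 0; i < m && rank < n; i++)
                    for (s = 0; s < slots[i] && rank < n; s++) {
                        print rank, host[i]
                        rank++
                    }
        }
    ' "$1"
}
```

With the three-host file and 9 processes, place_ranks /hpl/hydra/hosts 9 lists ranks 0 to 3 on the first entry, 4 to 7 on the second, and rank 8 on the third.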

[root@heavydb examples]# mpiexec -n 9 ./cpi

Process 0 of 9 is on heavydb

Process 1 of 9 is on heavydb

Process 3 of 9 is on heavydb

Process 5 of 9 is on hpl2

Process 7 of 9 is on hpl2

Process 2 of 9 is on heavydb

Process 4 of 9 is on hpl2

Process 6 of 9 is on hpl2

Process 8 of 9 is on heavydb

pi is approximately 3.1415926544231256, Error is 0.0000000008333325

wall clock time = 0.029941
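
Both runs report the same value of pi, which makes sense if cpi estimates the integral of 4/(1+x^2) over [0,1] with the midpoint rule and only the work split changes with the process layout. The serial sketch below reproduces the figure to ten decimal places; the interval count of 10000 is an assumption (it is consistent with the reported error of about 8.33e-10), and midpoint_pi is a name invented for this example.

```shell
# Midpoint-rule estimate of pi = integral of 4/(1+x^2) over [0,1],
# using n equal-width intervals. Serial stand-in for what the ranks share.
midpoint_pi() {
    awk -v n="$1" 'BEGIN {
        h = 1.0 / n
        sum = 0.0
        for (i = 1; i <= n; i++) {
            x = h * (i - 0.5)          # midpoint of interval i
            sum += 4.0 / (1.0 + x * x)
        }
        printf "%.10f\n", h * sum
    }'
}
```

Calling midpoint_pi 10000 prints 3.1415926544, matching the leading digits of the mpiexec output.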

For more usage details, see:

mpich/Using_the_Hydra_Process_Manager.md at main · pmodels/mpich · GitHub

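mpirun and mpiexec are essentially the same thing: the name of the process launcher in many MPI implementations. The MPI standard says nothing about how ranks are started and controlled, but it recommends (without requiring) that, if a launcher of any kind exists, it be named mpiexec. Some MPI implementations started with mpirun and later adopted mpiexec for compatibility. Others did the reverse. In the end, most implementations provide their launcher under both names. In practice, mpirun and mpiexec should behave no differently.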

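Different MPI implementations start and control processes in different ways. MPICH began with an infrastructure called MPD (Multi-Purpose Daemon, or something along those lines) and then switched to the newer Hydra process manager. Because Hydra works differently from MPD, the Hydra-based mpiexec takes different command-line arguments than the MPD-based one. So that users can select the Hydra-based launcher explicitly, it is available as mpiexec.hydra, and the old one is called mpiexec.mpd. An MPICH-based MPI library may ship only the Hydra launcher, in which case mpiexec and mpiexec.hydra are the same executable. Intel MPI is based on MPICH, and its newer versions use the Hydra process manager.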

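Open MPI is built on top of the Open Run-Time Environment (ORTE), and its own process launcher is called orterun. For compatibility, orterun is also symlinked as mpirun and mpiexec.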

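To summarize:

- mpiexec.something is a specific version of the MPI process launcher for a given implementation.

- mpiexec and mpirun are the generic names, usually copies of or symbolic links to the actual launcher.

- Both mpiexec and mpirun should do the same thing.

- Some implementations name their launcher mpiexec, some name it mpirun, and some use both names. This is often a source of confusion when several MPI implementations are available on the system path at the same time (for example, when installed from distribution packages).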
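
When several MPI installations share a machine, it can help to check what each launcher name actually resolves to. The sketch below uses only command -v and readlink -f (the latter as provided by GNU coreutils); which_launcher is a hypothetical helper name.

```shell
# For each launcher name, print the real file it resolves to on PATH,
# following symbolic links, or report that it is not installed.
which_launcher() {
    for name in "$@"; do
        found=$(command -v "$name") || { echo "$name: not found"; continue; }
        echo "$name -> $(readlink -f "$found")"
    done
}
```

For example, which_launcher mpiexec mpirun mpiexec.hydra orterun shows at a glance whether mpiexec and mpirun point to the same executable.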

Description

Mpiexec is a replacement program for the script mpirun, which is part of the mpich package. It is used to initialize a parallel job from within a PBS batch or interactive environment. Mpiexec uses the task manager library of PBS to spawn copies of the executable on the nodes in a PBS allocation.

Reasons to use mpiexec rather than a script (mpirun) or an external daemon (mpd):

- Starting tasks with the TM interface is much faster than invoking a separate rsh or ssh once for each process.

- Resources used by the spawned processes are accounted correctly with mpiexec, and reported in the PBS logs, because all the processes of a parallel job remain under the control of PBS, unlike when using startup scripts such as mpirun.

- Tasks that exceed their assigned limits of CPU time, wallclock time, memory usage, or disk space are killed cleanly by PBS. It is quite hard for processes to escape control of the resource manager when using mpiexec.

- You can use mpiexec to enforce a security policy. If all jobs are required to startup using mpiexec and the PBS execution environment, it is not necessary to enable rsh or ssh access to the compute nodes in the cluster.

References: https://www.osc.edu/~djohnson/mpiexec/

Linpack Performance Test Environment Setup (iteye_3126's blog, CSDN)

Foolproof Linpack Installation (Mpich+Openblas+Hpl) (kongfu_cat's blog, CSDN)

Linpack Environment Setup: Openmpi+Openblas+HPL Installation Tutorial (HowsenFisher's blog, CSDN)
