Why does pickle + gzip outperform h5py on repetitive datasets?

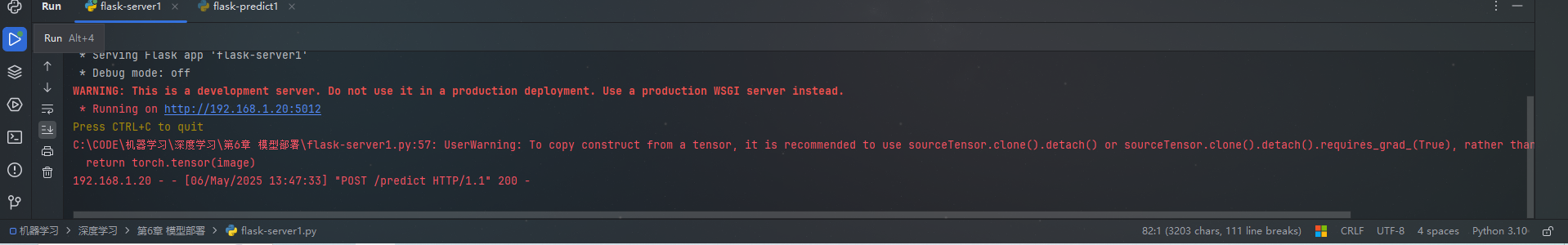


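The answer is to use chunks, as tcas suggested. My guess is that compression is performed on each chunk separately and that the default chunk size is small, so a single chunk does not contain enough redundancy for the compression to benefit from.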
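The per-chunk effect can be reproduced without HDF5 at all. The sketch below is only an illustration under stated assumptions: it uses the standard-library `zlib` (the same DEFLATE algorithm as gzip) rather than h5py's filter pipeline, and the names `make_overlapping_rows` and `compressed_size` are hypothetical helpers. Each 800-byte row stands in for one row of 100 float64 values, and consecutive rows overlap by 90%, so the redundancy only exists *across* rows.

```python
import random
import zlib

ROW_BYTES = 800    # one row: 100 float64 values
STEP_BYTES = 80    # each row is shifted by 10 float64 values from the previous one
N_ROWS = 2000      # kept small so the sketch runs quickly


def make_overlapping_rows(n_rows=N_ROWS, seed=0):
    """Build rows of random bytes where each row shares 90% with the next."""
    rng = random.Random(seed)
    base = bytes(rng.getrandbits(8) for _ in range(STEP_BYTES * n_rows + ROW_BYTES))
    return [base[i * STEP_BYTES:i * STEP_BYTES + ROW_BYTES] for i in range(n_rows)]


def compressed_size(rows, rows_per_chunk):
    """Total size when every chunk of rows is deflated independently."""
    total = 0
    for start in range(0, len(rows), rows_per_chunk):
        chunk = b"".join(rows[start:start + rows_per_chunk])
        total += len(zlib.compress(chunk, 9))
    return total


rows = make_overlapping_rows()
sizes = {n: compressed_size(rows, n) for n in (1, 10, 100, len(rows))}
for n, size in sizes.items():
    print(f"{n:>5} rows per chunk: {size} compressed bytes")
```

With one row per chunk the compressor only ever sees random bytes, so nothing can be saved. Larger chunks let it find the repeated rows, and the total shrinks.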

The following code illustrates the idea:

    import numpy as np
    import gzip
    import pickle as pkl  # cPickle exists only on Python 2
    import h5py

    # 100000 x 10 block of Gaussian noise
    a = np.random.randn(100000, 10)
    # Stack 10 row-shifted copies of a side by side, so consecutive rows of b
    # share 90 of their 100 values; b has shape (99991, 100)
    b = np.hstack([a[cnt:a.shape[0] - 10 + cnt + 1] for cnt in range(10)])

    # Write b with gzip level 9, varying only the number of rows per chunk
    for rows in (1, 10, 100, 1000, 10000):
        with h5py.File('noise_chunk_%d.hdf5' % rows, 'w') as f:
            f.create_dataset('b', data=b, compression='gzip',
                             compression_opts=9, chunks=(rows, 100))

The results (the file-size listing is missing from this copy) show that the file size increases as the chunks get smaller.
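For the pickle + gzip side of the comparison, a minimal sketch could look like the following. The helper names `gzip_pickle_size` and `file_sizes` are hypothetical, and the demo payload is a stand-in byte string rather than the noise array from the question, so the printed number says nothing about the real data.

```python
import gzip
import os
import pickle
import tempfile


def gzip_pickle_size(obj, path, level=9):
    """Pickle obj into a gzip-compressed file and return the file size in bytes."""
    with gzip.open(path, "wb", compresslevel=level) as f:
        pickle.dump(obj, f, protocol=pickle.HIGHEST_PROTOCOL)
    return os.path.getsize(path)


def file_sizes(paths):
    """Return {path: size in bytes} for every path that exists."""
    return {p: os.path.getsize(p) for p in paths if os.path.exists(p)}


# Stand-in payload: highly repetitive bytes, not the array from the question
payload = bytes(range(256)) * 1000
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "demo.pkl.gz")
    demo_size = gzip_pickle_size(payload, demo_path)
    print(f"raw: {len(payload)} bytes, gzip-pickled: {demo_size} bytes")
```

To compare on the actual data, one would call `gzip_pickle_size(b, 'noise.pkl.gz')` (the file name is an assumption) and then `file_sizes([...])` on the `noise_chunk_*.hdf5` files written by the script.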
